AI-Papers-of-the-Week tracks the ML paper firehose

DAIR.AI’s weekly GitHub repo curates top AI papers, with 12,317 stars and a paper list that stretches across 2025 and 2026.

Machine learning research does not slow down for anyone. The AI Papers of the Week repo from DAIR.AI has pulled in 12,317 stars and 775 forks by doing one simple thing well: collecting the most talked-about AI papers every week.

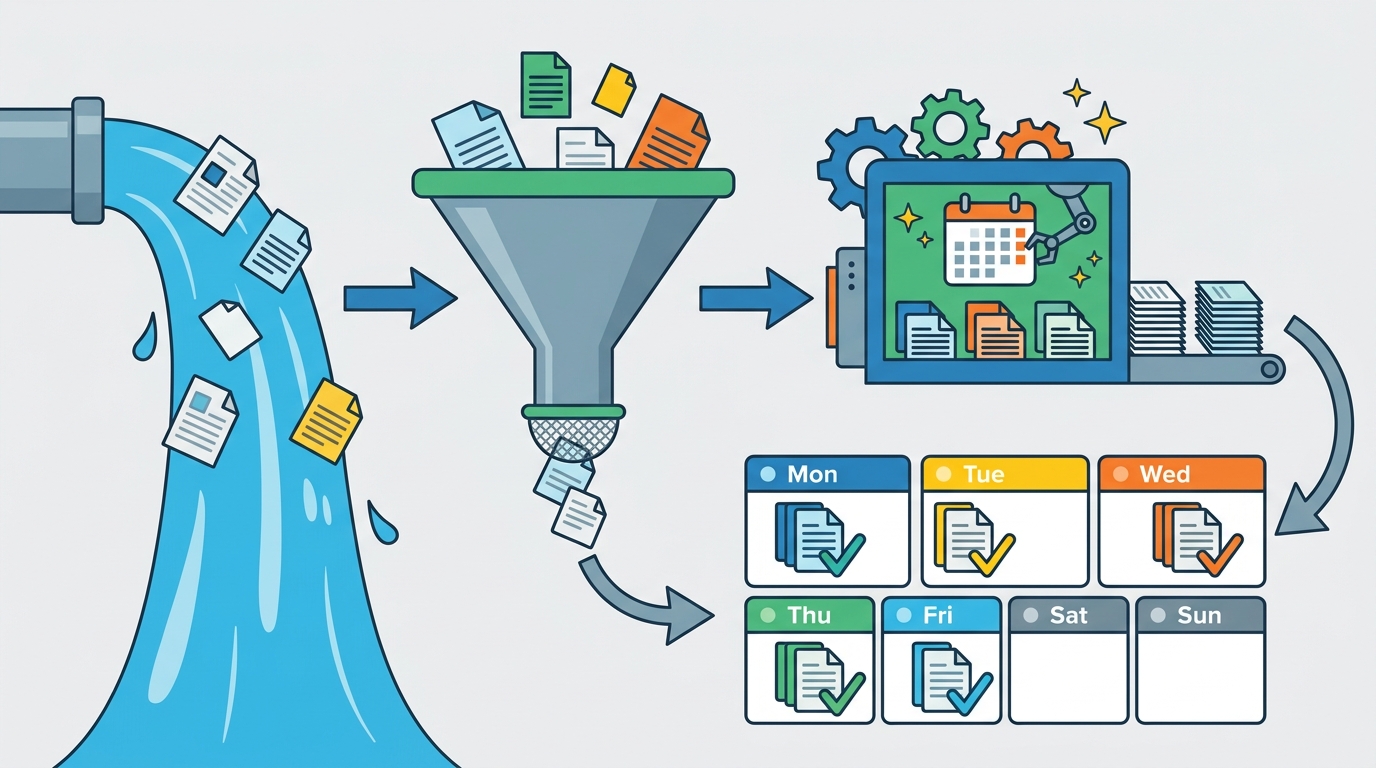

That matters because the research stream is too wide for most engineers to follow in real time. If you work in applied ML, agent tooling, or product research, a curated weekly list can save hours and help you spot what is actually moving the field.

The repo also points readers to a weekly newsletter via NLP News, which gives the project a second life outside GitHub. It is part archive, part reading list, and part signal filter for people who want to keep up without living on arXiv.

What the repo actually gives you

The structure is plain, which is a big part of the appeal. The README links out to weekly posts grouped by year, with entries for 2025 and 2026 already filling out the archive. That means you can jump from a current week to older picks without hunting through social feeds or scattered bookmarks.

For researchers and engineers, the value is less about novelty and more about consistency. A weekly cadence creates a habit: read the shortlist, skim the abstracts, and decide what deserves a deeper look.

This is the kind of resource that fits into a real workflow. You can use it to prep a team discussion, build a reading queue, or track how a subfield changes over time. The repo’s topic tags also make that easier by signaling coverage across AI, data science, deep learning, machine learning, and NLP.

- GitHub stars: 12,317

- Forks: 775

- Weekly archive spans 2025 and 2026

- Newsletter signup included in the README

- Topics listed: ai, data-science, deeplearning, machine-learning, nlp

Why weekly curation still matters

There is a reason this format keeps working. arXiv may be the firehose, but a firehose is not a reading strategy. Weekly curation compresses a noisy stream into something a human can actually scan before lunch.

DAIR.AI has built the repo around that idea. Instead of trying to rank every paper in the field, it highlights the papers that seem worth attention in a given week. That is a narrower job, and it is easier to trust when the curation is repeated over many months.

Timnit Gebru, founder of the Distributed AI Research Institute (DAIR), a separate organization that is not affiliated with DAIR.AI, has described a goal that overlaps with this project's. In an interview with The Verge, she said: “We want to make AI more accessible, more inclusive, and more accountable.” That line fits this project well, because access is the first barrier for most readers.

The quote matters here because the repo is not trying to impress you with complexity. It tries to lower the cost of keeping up. For a lot of teams, that is the difference between reading papers regularly and never getting around to it.

How it compares with other paper trackers

There are plenty of ways to follow AI research, but they do not all solve the same problem. arXiv gives you breadth. Hacker News gives you discussion. A newsletter gives you convenience. This repo gives you a repeatable weekly shortlist with an archive you can browse later.

That tradeoff is useful. If you want raw volume, arXiv is still the source of record. If you want a fast scan of what people are reading, social feeds and newsletters can help. If you want a stable public archive with a GitHub trail, this project is much easier to return to.

Compared with many paper roundups, the repo’s biggest strength is transparency. The links are public, the weekly cadence is visible, and the archive lets you check whether a topic kept showing up or faded after one burst of hype.

- arXiv publishes the full research firehose; this repo filters it weekly

- NLP News pushes the roundup into email, while GitHub keeps the archive open

- GitHub adds stars, forks, and version history that newsletters do not have

- Hugging Face Papers focuses on discovery, while this repo focuses on weekly curation

That mix makes the project useful even if you do not read every entry. You can treat it like a watchlist for the field, then open the full paper only when a title, method, or benchmark really matters to your work.

What the numbers say about interest

The GitHub stats are the clearest evidence that the idea has an audience. More than twelve thousand stars is a lot for a content-heavy repo, and 775 forks suggest that some readers are doing more than bookmarking it: they may be copying, adapting, or building on the format.

The archive size matters too. A repo that keeps posting weekly over multiple years becomes more useful than a one-off list because it turns into a historical record. You can trace what the community cared about in early 2025, then compare that with the topics surfacing in 2026.
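
One way to make that comparison concrete is to count how many weeks a keyword shows up in the picks. The sketch below is only an illustration: the `WEEKLY_PICKS` data, the paper titles, and the function names are hypothetical placeholders, not entries or tooling from the actual repo, and plain substring matching is a deliberately crude stand-in for real topic tagging.

```python
from collections import defaultdict

# Hypothetical sample data: week label -> paper titles picked that week.
# These titles are placeholders, not entries from the actual repo.
WEEKLY_PICKS = {
    "2025-W02": ["Scaling Agent Memory", "Sparse Attention Revisited"],
    "2025-W03": ["Agent Planning Benchmarks", "Retrieval for Long Context"],
    "2026-W01": ["Agent Tool Use at Scale", "Efficient Retrieval Pipelines"],
}


def weeks_mentioning(picks, keywords):
    """Map each keyword to the sorted list of weeks whose titles contain it."""
    hits = defaultdict(set)
    for week, titles in picks.items():
        for title in titles:
            lowered = title.lower()
            for kw in keywords:
                # Case-insensitive substring match (an assumption; a real
                # pipeline might use tags or embeddings instead).
                if kw.lower() in lowered:
                    hits[kw].add(week)
    return {kw: sorted(hits[kw]) for kw in keywords}


def recurring(picks, keywords, min_weeks=2):
    """Return the keywords that appear in at least `min_weeks` distinct weeks."""
    by_keyword = weeks_mentioning(picks, keywords)
    return [kw for kw in keywords if len(by_keyword[kw]) >= min_weeks]


if __name__ == "__main__":
    topics = ["agent", "retrieval", "attention"]
    for topic, weeks in weeks_mentioning(WEEKLY_PICKS, topics).items():
        print(f"{topic}: {len(weeks)} week(s) -> {weeks}")
    print("recurring:", recurring(WEEKLY_PICKS, topics))
```

With the placeholder data, "agent" and "retrieval" come back as recurring while "attention" appears in a single week, which is the kind of "kept showing up versus faded" signal the archive makes possible.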

That kind of continuity is rare in AI content. Plenty of roundups appear for a few weeks and then disappear. This one keeps going, which is probably why it has become a reference point for readers who want a low-friction way to stay current.

If you are building an internal research digest, this repo is also a decent model. The lesson is simple: pick a cadence, keep the archive public, and make the entry point easy to scan. The hard part is not the format. It is the discipline to keep publishing.
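
For a team that wants to try this, a minimal sketch of a weekly digest entry point might look like the following. Everything here is an assumption made for illustration: the `Entry` and `WeeklyDigest` names, the fields, and the markdown layout are hypothetical and are not taken from how DAIR.AI builds its lists.

```python
from dataclasses import dataclass, field
from datetime import date


@dataclass
class Entry:
    """One paper in the digest (hypothetical structure)."""
    title: str
    url: str
    summary: str


@dataclass
class WeeklyDigest:
    """A single week's shortlist, keyed by the date the week starts."""
    week_start: date
    entries: list = field(default_factory=list)

    def to_markdown(self):
        # A heading plus a numbered list keeps the entry point easy to scan.
        lines = [f"## Week of {self.week_start.isoformat()}", ""]
        for i, entry in enumerate(self.entries, start=1):
            lines.append(f"{i}. [{entry.title}]({entry.url}): {entry.summary}")
        return "\n".join(lines)


if __name__ == "__main__":
    digest = WeeklyDigest(week_start=date(2026, 1, 5))
    # Placeholder entry; the title and URL are not a real paper.
    digest.entries.append(
        Entry("Example Paper", "https://example.com/paper", "One-line takeaway.")
    )
    print(digest.to_markdown())
```

Committing one such file per week to a shared repository would cover the cadence and the archive at once; the publishing discipline still has to come from the team.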

Bottom line for engineers and researchers

AI Papers of the Week is useful because it solves a boring but real problem: too many papers, too little time. It does that with a clean weekly structure, a public archive, and a newsletter hook for readers who prefer email.

For teams working in NLP, deep learning, or applied AI, the repo is worth tracking if you want a steady pulse on what research is getting attention. The best way to use it is simple: skim weekly, save the papers that match your stack, and revisit the archive when a topic starts showing up twice.

My guess is that the next step for projects like this is tighter personalization, maybe by topic or task area. Until then, the practical question is whether your team has a reading habit at all. If not, this repo is an easy place to start.
