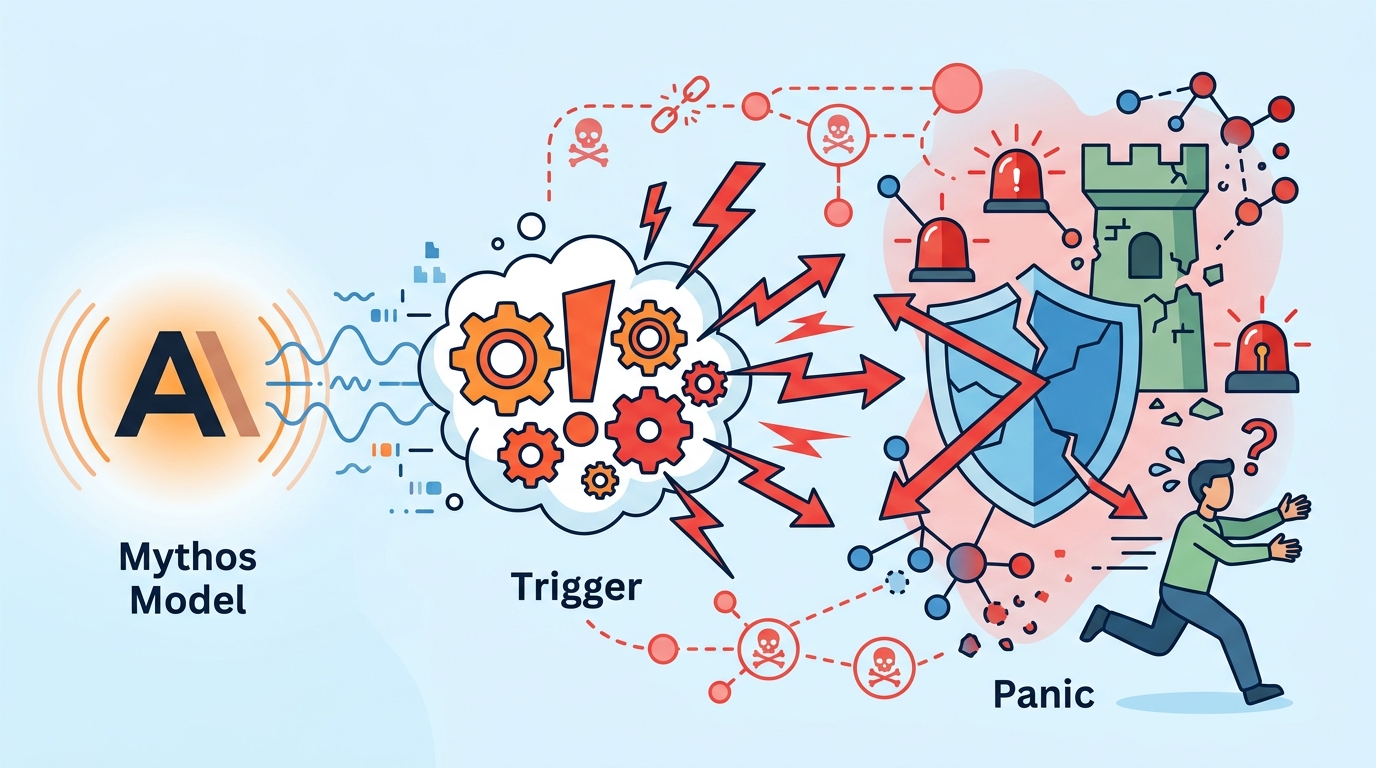

Anthropic’s Mythos Model Triggers Security Panic

Anthropic’s Mythos reportedly finds software flaws fast enough to worry governments, banks, and grid operators worldwide.

In just two weeks, Anthropic’s model Mythos sent security teams into overdrive. The reason is simple: Anthropic says the model can spot and exploit hidden flaws in software that runs banks, power grids, and government systems.

That is a very different kind of A.I. story. Most model launches are about better chat, faster coding, or cheaper inference. Mythos is getting attention because it appears useful for offense and defense at the same time, which is exactly why policy makers are treating it like a national security issue.

Why Mythos got everyone’s attention

The headline number is not a benchmark score or a token count. It is the speed of the reaction. Within two weeks of the model becoming known, governments and large organizations were already scrambling to assess exposure, update controls, and ask whether their own systems could be probed the same way.

Anthropic’s claim matters because modern infrastructure depends on software stacks with huge attack surfaces. A model that can search for weak points faster than a human team changes the economics of security work. It also changes the economics of offense, since one model can be pointed at many targets with minimal extra effort.

That is why Mythos is being discussed alongside the broader push from OpenAI, Google, and Microsoft into more capable agentic systems. The difference here is the explicit security risk. If a model can identify hidden flaws in critical software, the debate stops being abstract very quickly.

- Timeline: reaction escalated within 2 weeks

- Target systems: banks, power grids, government software

- Risk profile: dual-use offensive and defensive security work

- Implication: faster vulnerability discovery at machine speed

The security problem is bigger than one model

Mythos is getting the spotlight, but the underlying issue is broader. As models improve at code understanding, they also improve at finding bugs, chaining misconfigurations, and testing assumptions that human reviewers miss. That is helpful when you are defending a system. It is alarming when the same model is available to attackers.

The practical concern is not science fiction. It is scale. A traditional red team can inspect a limited number of systems in a day. A capable model can scan far more code, generate more test cases, and adapt faster when it finds a dead end. That gives attackers a better starting point and defenders a new tool they must control carefully.

For readers following the A.I. security beat, this is the same pressure that has shaped work from Anthropic’s research team and the broader safety community. The question is no longer whether A.I. can help find bugs. It can. The question is how much access a model should get before it becomes a liability.

“We need to be very thoughtful about how these systems are deployed,” said Dario Amodei, Anthropic’s chief executive, in a 2023 interview with The New York Times.

That quote lands differently now. A model that can hunt for hidden flaws is useful in a lab and unnerving in the wild. The same capability that helps a defender harden a payment system can help an attacker find a path into it.

What this means for banks, grids, and governments

The institutions most exposed to Mythos are the ones that run on old software, layered vendor tools, and long approval chains. That describes a lot of the world’s critical infrastructure. Banks still depend on legacy systems that were never designed for machine-scale probing. Power operators often run a mix of modern interfaces and older control software. Government networks have the added problem of fragmentation.

To make the risk concrete, compare the likely impact across sectors:

- Banks: faster discovery of weak authentication, exposed APIs, and misconfigured internal services

- Power grids: more pressure on operational technology environments where patching is slow

- Government systems: higher risk from chained vulnerabilities across contractors and agencies

- Security vendors: more demand for automated testing, code review, and anomaly detection

The response will probably be uneven. The best-funded organizations will buy more scanning tools, tighten access to internal code, and isolate sensitive systems. Smaller operators may rely on vendors that are also trying to catch up. That gap matters because attackers usually aim for the weakest link, not the strongest one.

There is also a policy angle. If Anthropic’s model can expose flaws in critical systems, regulators will want to know who gets access, what safeguards exist, and how misuse is detected. That conversation is already underway in Washington, Brussels, and other capitals where A.I. policy is moving closer to cyber policy.

How this changes the A.I. race

Mythos may become a test case for a new kind of model governance. The old debate was about whether a model could write better essays or code faster than a human. The new debate is about whether a model can find a weakness before the defender does. That is a much sharper line, and it will shape procurement, audits, and export controls.

The companies building frontier models will also have to answer a harder question: how do you release systems that are useful for security research without making mass exploitation easier? There is no clean answer. Access controls help. Monitoring helps. Rate limits help. None of them eliminates the risk.
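To make the rate-limit idea concrete, here is a minimal Python sketch of a token bucket, one common way to cap how quickly a single caller can send requests. Everything in it is an assumption for illustration: the `TokenBucket` class, its parameters, and the numbers are hypothetical and are not drawn from Anthropic’s systems or any vendor’s actual controls.

```python
import time
from typing import Callable


class TokenBucket:
    """Hypothetical per-caller limiter: short bursts allowed, steady rate capped."""

    def __init__(self, capacity: float, refill_per_sec: float,
                 clock: Callable[[], float] = time.monotonic) -> None:
        self.capacity = capacity              # maximum burst size
        self.refill_per_sec = refill_per_sec  # sustained requests per second
        self.clock = clock                    # injectable so it can be tested
        self.tokens = capacity                # start with a full bucket
        self.last = clock()

    def allow(self, cost: float = 1.0) -> bool:
        """Return True and spend tokens if the request fits, else False."""
        now = self.clock()
        elapsed = now - self.last
        # Refill in proportion to elapsed time, never above capacity.
        self.tokens = min(self.capacity,
                          self.tokens + elapsed * self.refill_per_sec)
        self.last = now
        if self.tokens >= cost:
            self.tokens -= cost
            return True
        return False


if __name__ == "__main__":
    # Illustrative numbers only: bursts of 5, then 1 request per second.
    bucket = TokenBucket(capacity=5, refill_per_sec=1.0)
    print([bucket.allow() for _ in range(7)])
```

A limiter like this slows a single caller down. It does nothing about a determined attacker who spreads requests across many accounts, which is why it works as one layer alongside access controls and monitoring and not as a fix on its own.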

My read is that Mythos will push more organizations toward private model deployments, tighter sandboxing, and mandatory A.I. security reviews for critical codebases. The next big question is whether governments treat these models like powerful software tools or like a dual-use cyber capability. That answer will decide how fast Mythos-style systems spread beyond a small set of trusted users.

For now, the takeaway is blunt: if your organization runs software that matters, you should assume models like Mythos will be used against it. The smart move is to test your own systems before someone else does.
