What DevOps Really Means on AWS

AWS defines DevOps as culture plus automation. Here’s how CI/CD, microservices, and IaC change delivery speed and reliability.

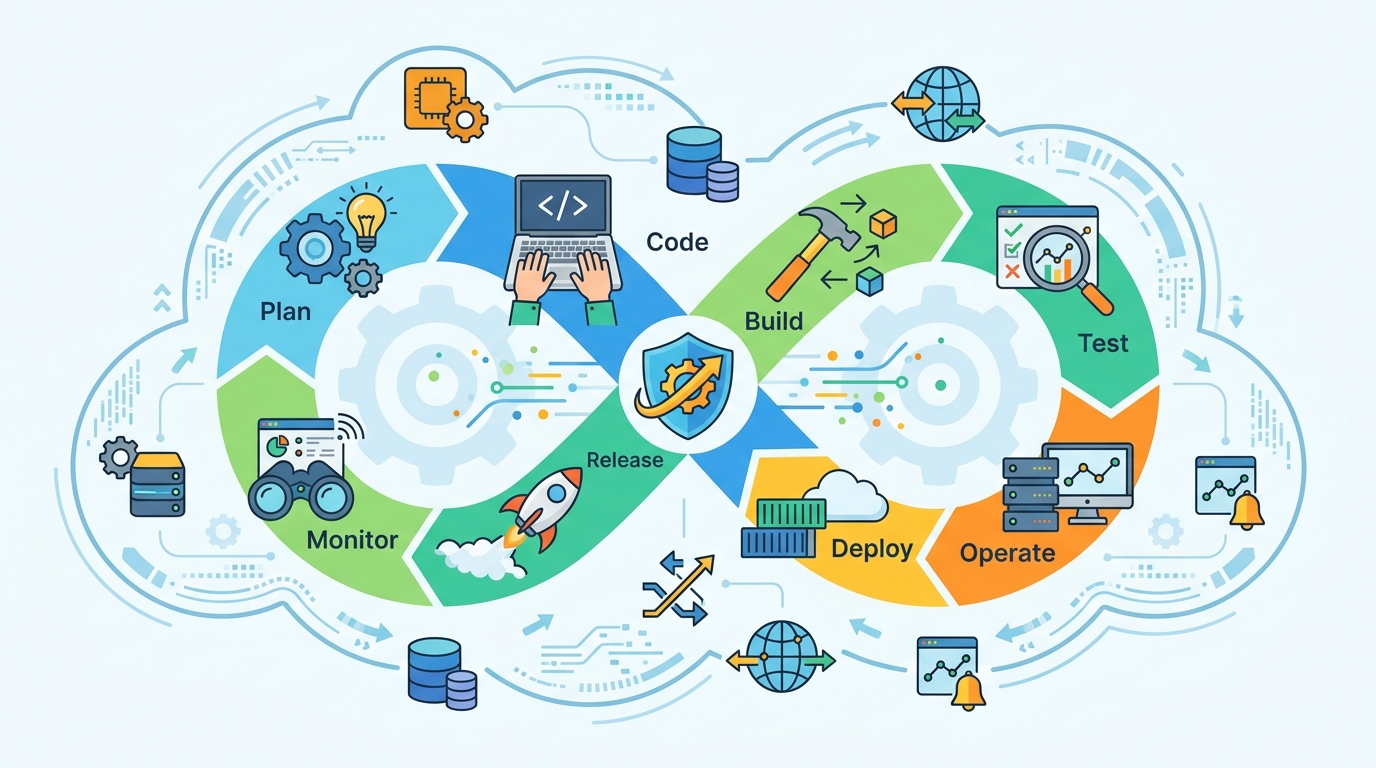

AWS says DevOps is the mix of cultural philosophies, practices, and tools that helps teams ship software faster. That definition sounds simple, but the mechanics behind it matter: the model is built around frequent small changes, automated testing, and infrastructure that can be recreated from code.

In practice, DevOps is less about a job title and more about how teams work. Development, operations, security, and quality checks move closer together so software can go from idea to production with fewer handoffs and less manual work.

What AWS means by DevOps

In the AWS DevOps overview, the core idea is clear: remove the wall between development and operations, then automate as much of the delivery process as possible. That gives teams more control over release speed without forcing them to accept chaos as the price of speed.

The AWS version of DevOps is also practical. It is tied to tooling such as AWS CodePipeline, AWS CloudFormation, and Amazon ECS, which let teams automate delivery, describe infrastructure in code, and run services in repeatable ways.

The big shift is organizational as much as technical. AWS describes teams that share ownership across the full lifecycle, from development and test through deployment and operations. That means fewer ticket queues, fewer “someone else owns that” moments, and faster feedback when something breaks.

- Development and operations stop working in isolated silos.

- Release steps move from manual tasks to automated pipelines.

- Infrastructure becomes code that can be versioned, tested, and copied.

- Monitoring and logging become part of delivery, not an afterthought.

Why the model changes delivery speed

The strongest argument for DevOps is speed with control. AWS ties the model to high-velocity delivery, but the real trick is that the changes are usually small. Smaller updates are easier to test, easier to roll back, and easier to trace when a bug slips through.

That matters because traditional release cycles often bundle too many changes together. When a release fails, teams spend time untangling which code change caused the problem. With DevOps practices in place, teams can narrow the blast radius and recover faster.

Microservices make this even more important. Instead of one giant application, teams split systems into smaller services with clear responsibilities. A team can update one service without waiting for a full monolith release, which cuts coordination overhead and lets each service ship on its own, more frequent schedule.

- Continuous integration merges code frequently and runs automated builds and tests.

- Continuous delivery prepares code for release after it passes automated checks.

- Infrastructure as code turns servers and environments into versioned files.

- Configuration management keeps systems consistent across environments.
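To make the infrastructure-as-code bullet concrete, here is a minimal sketch in Python that builds a CloudFormation-style template as plain data and renders it to JSON. The function names, the `ArtifactBucket` logical ID, and the `environment` tag are assumptions made for illustration; they do not come from AWS's overview. The point is only that the environment definition lives in a file that can be versioned, reviewed, and regenerated.

```python
import json


def build_template(env_name: str) -> dict:
    """Return a CloudFormation-style template for one environment.

    The bucket and its tag are hypothetical; only the overall template
    shape (format version, Resources, Type, Properties) follows the
    CloudFormation template format.
    """
    return {
        "AWSTemplateFormatVersion": "2010-09-09",
        "Description": f"Artifact storage for the {env_name} environment",
        "Resources": {
            "ArtifactBucket": {
                "Type": "AWS::S3::Bucket",
                "Properties": {
                    "VersioningConfiguration": {"Status": "Enabled"},
                    "Tags": [{"Key": "environment", "Value": env_name}],
                },
            }
        },
    }


def render(env_name: str) -> str:
    """Serialize the template deterministically so diffs stay readable."""
    return json.dumps(build_template(env_name), indent=2, sort_keys=True)
```

Calling `render("staging")` and `render("prod")` produces two templates that differ only in the environment name, which is the property that keeps environments from diverging when they are built by hand.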

Security and reliability are part of the same system

DevOps gets misunderstood when people treat it like a speed-at-all-costs strategy. AWS pushes a different idea: speed only matters if teams can keep systems reliable and secure. That is why monitoring, logging, automated compliance, and policy checks sit inside the workflow.

Security teams can join earlier in the process through DevSecOps, which AWS describes as a model where security becomes part of the team’s shared responsibility. That shift matters because security issues are cheaper to fix before deployment than after incidents hit production.

One of the best-known voices in cloud operations is Jeff Barr, AWS’s Chief Evangelist, who has spent years explaining AWS services to developers. A recurring theme in AWS material is that cloud tools reduce the amount of undifferentiated heavy lifting teams have to do. That is the spirit of DevOps too: spend less time on repetitive plumbing, more time on product work.

A line widely attributed to Paul Maritz, former CEO of VMware, makes a related point:

"The cloud is about how you do computing, not where you do computing."

That quote matters here because DevOps depends on the same shift in thinking. If infrastructure becomes programmable and repeatable, then compliance, testing, and rollout policies can be encoded instead of handled by memory and spreadsheets.

AWS also points to policy as code as a way to track compliance at scale. That is especially useful in regulated environments where teams need to prove that configuration rules, access controls, and audit trails are being enforced consistently.
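As a rough illustration of policy as code, the sketch below expresses three compliance rules as small Python functions and evaluates a resource description against them. The rule names and the resource fields (`encrypted`, `public`, `tags`) are hypothetical and are not an AWS schema or an AWS service API; the idea is only that rules written as code can run on every change instead of being checked by hand.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class Policy:
    name: str
    check: Callable[[dict], bool]  # returns True when the resource complies


# Hypothetical rules, for illustration only.
POLICIES = [
    Policy("encryption-enabled", lambda r: r.get("encrypted") is True),
    Policy("no-public-access", lambda r: r.get("public") is not True),
    Policy("has-owner-tag", lambda r: "owner" in r.get("tags", {})),
]


def evaluate(resource: dict, policies: list = POLICIES) -> list:
    """Return the names of the policies this resource violates, in rule order."""
    return [p.name for p in policies if not p.check(resource)]
```

A pipeline step could call `evaluate` for each declared resource and fail the run when the returned list is non-empty, which also leaves a record of which rule blocked which change.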

How AWS tools map to DevOps practices

AWS does a good job of connecting theory to concrete services. If you are trying to understand DevOps through an AWS lens, the mapping is fairly direct: CodePipeline handles delivery, CloudFormation handles infrastructure, and services like AWS CloudTrail record API activity for auditing and troubleshooting.

That toolchain matters because DevOps is not one product. It is a system of habits and automation layers that shorten feedback loops. The more of that loop you can codify, the less dependent you are on human memory or manual handoffs.

Here is the practical comparison AWS is hinting at:

- Manual provisioning means each environment can drift; CloudFormation keeps environments repeatable.

- Ad hoc deployment means release steps vary; CodePipeline makes the flow consistent.

- Untracked changes make audits painful; CloudTrail records API calls for later review.

- Monolithic release schedules slow fixes; microservices let teams ship smaller pieces more often.
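The drift problem in the first comparison can be sketched in a few lines of Python: compare what the code declares with what is actually observed in an environment and report every difference. This is a generic illustration under assumed field names (`instance_type`, `min_size`, `debug_port`). CloudFormation has a drift detection feature of its own, and this sketch does not call it.

```python
ABSENT = "<absent>"  # marker for a setting present on only one side


def find_drift(declared: dict, observed: dict) -> dict:
    """Map each differing key to a (declared_value, observed_value) pair."""
    drift = {}
    for key in sorted(set(declared) | set(observed)):
        want = declared.get(key, ABSENT)
        have = observed.get(key, ABSENT)
        if want != have:
            drift[key] = (want, have)
    return drift


# Hypothetical example: someone resized an instance and opened a port by hand.
declared = {"instance_type": "t3.small", "min_size": 2}
observed = {"instance_type": "t3.medium", "min_size": 2, "debug_port": 9000}
report = find_drift(declared, observed)
```

Here `report` flags `instance_type` and `debug_port` while leaving `min_size` out, which is the kind of signal that is hard to get when environments are provisioned manually and nothing records the intended state.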

If you want to go deeper on automation-heavy delivery models, OraCore’s guide to CI/CD pipelines pairs well with AWS’s explanation here. The overlap is obvious: DevOps is the operating model, and CI/CD is one of the main ways teams make it real.

The bigger lesson is that DevOps is not a single team structure. Some companies merge development and operations into one group. Others keep them separate but make ownership shared across the full application lifecycle. AWS leaves room for both, as long as the workflow is faster, safer, and less dependent on manual intervention.

So what should teams do next?

If you are adopting DevOps, start with one release path and make it boring. Automate build, test, and deployment steps first. Then add infrastructure as code, logging, and policy checks once the basics are stable. That sequence matters because teams usually fail when they try to change culture and tooling all at once.
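The "one boring release path" advice can be sketched as an ordered list of stages that stops at the first failure. This is a toy runner in Python, not how CodePipeline is implemented; the stage names and the `run_pipeline` helper are assumptions made for illustration.

```python
from typing import Callable

# A stage is a name plus a zero-argument step that returns True on success.
Stage = tuple[str, Callable[[], bool]]


def run_pipeline(stages: list[Stage]) -> list[tuple[str, str]]:
    """Run stages in order; after the first failure, mark the rest as skipped."""
    results = []
    failed = False
    for name, step in stages:
        if failed:
            results.append((name, "skipped"))
            continue
        ok = step()
        results.append((name, "passed" if ok else "failed"))
        failed = not ok
    return results


# Hypothetical stages; real steps would invoke a build tool, a test runner,
# and a deployment command.
example = run_pipeline([
    ("build", lambda: True),
    ("test", lambda: False),
    ("deploy", lambda: True),
])
```

In this example the failing `test` stage prevents `deploy` from running at all. That ordering guarantee is most of what a first pipeline needs before infrastructure as code, logging, and policy checks are layered on.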

The AWS framing is useful because it avoids ideology. DevOps is not about adopting a label; it is about shortening the distance between code and customer value while keeping systems observable and controlled. If your team still waits on handoffs, rebuilds environments by hand, or treats security as a final gate, you are leaving too much time on the table.

The most likely next step for most organizations is not full DevOps maturity overnight. It is one service, one pipeline, and one environment brought under automation. Once that works, the question becomes simple: which part of your delivery process still depends on a person clicking buttons that a script could already do?
