After six months with Claude Code, five things I wish I had known from the start

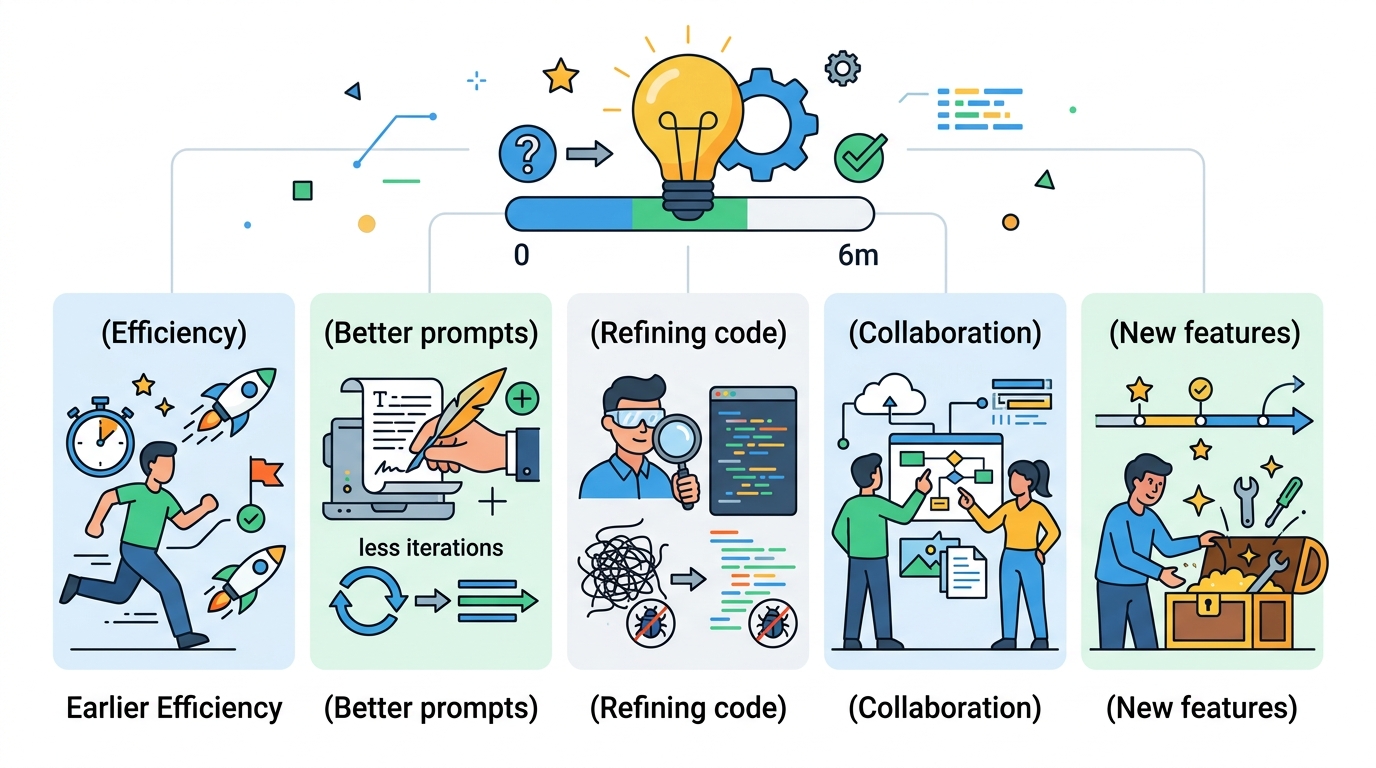

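Anyone who has used Claude Code for more than a week notices that its biggest difference from ChatGPT is not that it can run a terminal or search the web, but that it remembers things and grows with use. Yet most people stop at the "chat with it" stage. This post collects the five lessons from six months of use that mattered most: what CLAUDE.md is really for, what belongs in Memory, the chemistry of combining Skills, three common mistakes when dispatching Subagents, and the implementation choices for cross-CLI collaboration (Claude Code / Codex / Gemini).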

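A week after picking up Claude Code, most people are still using it as "ask one question, get one answer, close the window". That usage is about the same as ChatGPT, so no difference shows.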

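The difference starts with getting it to remember things. Claude Code's most underrated capability is not running a shell or searching the web, but its cross-session memory: CLAUDE.md, MEMORY, Skill, Subagent, Cron, and MCP server. Put together, this set of tools can turn an AI assistant into a digital double that learns.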

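These are the five things that, after six months, I would most like to go back and tell my beginner self. This is not a feature list; it is about which situations these features should be used in.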

1. CLAUDE.md is a guardrail, not a personality setting

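Many tutorials treat CLAUDE.md as the place to "write the Agent a personality", with instructions like "be direct, have opinions, don't use customer-service language". Writing these is not wrong, but it misses the point.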

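The real purpose of CLAUDE.md is guardrail, not personality. It is context that gets force-injected into every conversation, so the most effective use is to write down the traps you keep falling into rather than generic character descriptions.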

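For example, this kind of wording is useless:

Be concise, have opinions, don't ramble.

After reading this, Claude changes almost none of its behavior, because it already has its own judgment of "concise" and "rambling". A useful version looks like this: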

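- Before executing a DELETE / UPDATE, first run a SELECT with the same WHERE clause to confirm the affected scope

- Never hard-code API keys; use environment variables

- After making changes, the linter must be run before the task can be marked complete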

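These three rules are executable checkpoints. When Claude breaks one, you can point at the clause and say "you violated rule X", and it will actually correct itself.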
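To make the first rule concrete, here is a minimal sketch of "SELECT with the same WHERE before DELETE", using Python's built-in sqlite3. The helper name `guarded_delete`, the `max_rows` threshold, and the `articles` demo table are assumptions made for illustration; they are not part of Claude Code or of any real project.

```python
import sqlite3


def guarded_delete(conn, table, where, params, max_rows):
    """Delete rows only after a SELECT with the same WHERE confirms the scope.

    `table` and `where` are trusted strings in this sketch (do not pass
    untrusted input); values are bound through `params`.
    """
    count_sql = f"SELECT count(*) FROM {table} WHERE {where}"
    (affected,) = conn.execute(count_sql, params).fetchone()
    if affected > max_rows:
        # The scope check failed: stop before touching any data.
        raise RuntimeError(
            f"refusing to delete {affected} rows from {table}; limit is {max_rows}"
        )
    conn.execute(f"DELETE FROM {table} WHERE {where}", params)
    conn.commit()
    return affected


def demo(max_rows=2):
    """Build a throwaway in-memory table and delete its draft rows."""
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE articles (slug TEXT, status TEXT)")
    conn.executemany(
        "INSERT INTO articles VALUES (?, ?)",
        [("a", "draft"), ("b", "draft"), ("c", "published")],
    )
    deleted = guarded_delete(conn, "articles", "status = ?", ("draft",), max_rows)
    (remaining,) = conn.execute("SELECT count(*) FROM articles").fetchone()
    return deleted, remaining
```

The point of the sketch is that the rule can be checked mechanically: either the SELECT ran with the same condition and the count was acceptable, or the delete did not happen.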

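Practical advice: keep CLAUDE.md under 1KB, and write "event-driven" rules (when you encounter X, do Y) rather than "state-description" rules (you are a Y-type assistant). The former is a guardrail; the latter is decoration.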

2. Memory is not a file system; it is storage along "frequency × persistence"

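Claude Code's Memory falls roughly into three layers:

- Session Memory: the context of the current conversation, gone once you close it

- Persistent Memory: MEMORY.md + USER.md, persisting across sessions

- Skill Memory: reusable workflows distilled from experience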

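On the surface these look like three kinds of files, but in practice they sit on two axes, frequency × persistence: the longer something is stored and the less often it is invoked, the more condensed its form should be.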

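A trap I fell into: early on I stuffed everything into MEMORY.md, including transient facts like "deployed v4.9.3 today" or "how a certain customer's edge case was handled". Two months later MEMORY.md had bloated to 8KB, every conversation was fed a pile of irrelevant history, and quality went down instead of up.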

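Three kinds of things worth storing:

- Traps that are hard to reproduce but keep recurring (e.g. "Supabase RLS is bypassed under the service role, so all public routes must state the status=eq.published condition explicitly")

- Long-term project decisions (e.g. "Stack A uses Nuxt 3; no migration to Next.js")

- The user's explicit preferences (e.g. "commit messages use conventional commits, no emoji")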

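Three kinds of things not worth storing:

- Short-term state (what was deployed today)

- Anything that can be read from code / git (project structure, recent commits)

- Details of a one-off task ("the list of files in this refactor")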

For this short-term information, the task list, git log, or session memory is enough. Writing it into MEMORY.md only pollutes future conversations.

3. The value of Skills lies in combination, not in how strong any single Skill is

Many people use Skills as "plugins": install one, use one. That way the real value of Skills never shows.

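The chemistry of Skills shows up when you chain them. As a concrete example, a content pipeline for a news site can chain 5 Skills:

- keyword-research: takes a topic, produces SEO keywords and search intent

- content-brief: takes keywords, produces an article outline + primary sources

- draft-writer: takes the outline, produces a first-draft HTML manuscript

- humanizer: scans for AI writing patterns, produces revision suggestions

- seo-optimizer: takes the manuscript, checks meta, canonical, and internal-link density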

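Each Skill is ordinary on its own, but chained together they run a zero-touch article production line. The point is not how strong each Skill is, but that they pass data through the file system: each step writes its output to a fixed file and the next step reads it directly, with no reliance on in-memory variables.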

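Three principles for designing Skills:

- Single responsibility: one Skill does one thing; don't cram two functions into it

- Idempotent: running the same input twice gives the same result

- Observable: every step's output lands in a file you can open when something goes wrong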

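These three points look like the Unix philosophy because they are the Unix philosophy. As I see it, the Skill system is best understood as pipes, not plugins.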
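The file-passing idea can be sketched in a few lines of Python. The three functions below are stand-ins for the first three Skills in the pipeline, not real Skill definitions: the file names (`01-keywords.json` and so on) and the toy logic inside each step are assumptions chosen only to show the hand-off through fixed files.

```python
import json
import tempfile
from pathlib import Path


def keyword_research(workdir: Path, topic: str) -> None:
    """Step 1: write keywords for the topic to a fixed file."""
    keywords = [topic, f"{topic} tutorial"]
    (workdir / "01-keywords.json").write_text(
        json.dumps({"topic": topic, "keywords": keywords}), encoding="utf-8"
    )


def content_brief(workdir: Path) -> None:
    """Step 2: read step 1's file, write an outline to a fixed file."""
    data = json.loads((workdir / "01-keywords.json").read_text(encoding="utf-8"))
    outline = [f"About {kw}" for kw in data["keywords"]]
    (workdir / "02-brief.json").write_text(
        json.dumps({"outline": outline}), encoding="utf-8"
    )


def draft_writer(workdir: Path) -> None:
    """Step 3: read step 2's file, write an HTML draft to a fixed file."""
    brief = json.loads((workdir / "02-brief.json").read_text(encoding="utf-8"))
    html = "\n".join(f"<h2>{heading}</h2>" for heading in brief["outline"])
    (workdir / "03-draft.html").write_text(html, encoding="utf-8")


def run_pipeline(workdir: Path, topic: str) -> str:
    """Run the steps in order; each one only talks to the next through files."""
    keyword_research(workdir, topic)
    content_brief(workdir)
    draft_writer(workdir)
    return (workdir / "03-draft.html").read_text(encoding="utf-8")


def demo():
    """Run the pipeline twice to show that a rerun produces the same output."""
    with tempfile.TemporaryDirectory() as tmp:
        workdir = Path(tmp)
        first = run_pipeline(workdir, "claude code")
        second = run_pipeline(workdir, "claude code")
        files = sorted(p.name for p in workdir.iterdir())
    return first, second, files
```

Each step has one job, a rerun overwrites the same files with the same content, and every intermediate result is a file you can open when a later step misbehaves.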

4. Three wrong ways to dispatch Subagents

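Subagent is the mechanism for spawning subtasks: the main session hands work to independent child Agents that run in parallel. Many first-time users make three mistakes: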

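Mistake 1: overusing parallelism

Throwing every task at a subagent. In practice, running tasks with ordering dependencies in parallel is slower, because each agent's result has to be fed to the next one and the context reassembled. Only tasks known to be independent are suited to parallel execution.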

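Mistake 2: re-feeding context

Stuffing the entire conversation history into the subagent when dispatching. The value of a Subagent is precisely its clean context, so re-feeding the history defeats the design. The right approach: give the subagent "the goal + the necessary context" and let it work from a blank slate.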

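Mistake 3: no exit criteria

The Subagent doesn't know when it is done. Its default behavior is to finish talking, write a summary, and stop, but it may "close the case" after doing only 30% of the task. The right approach: spell out in the dispatch instruction "must verify that X passes, must produce file Y, must report figure Z", and the task cannot be closed until those are met.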

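Taking OraCore's article production as an example, my dispatch template is:

Convert these 8 markdown files to HTML and insert them into the DB. Acceptance criteria:

- Running SELECT count(*) FROM articles WHERE slug LIKE 'xyz-%' returns 8

- Every row has rewrite_status = 'done' and status = 'published'

- The 4 zh/en pairs are linked bidirectionally through related_article_id

Attach the SELECT results to your report.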

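The Subagent now knows what it has to achieve to count as done, and judging the result goes from "guessing how far it got" to "verifying numbers".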
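The acceptance criteria in that template can also be checked by a script rather than by eye. The sketch below uses an in-memory SQLite table; the column names come from the template, but the schema as a whole (an integer `id`, `related_article_id` pointing at another row's `id`) and the helper names are assumptions, since the real OraCore schema is not shown here.

```python
import sqlite3


def check_exit_criteria(conn, slug_prefix, expected_rows, expected_pairs):
    """Measure the three acceptance criteria and return numbers plus a verdict."""
    like = slug_prefix + "%"
    (row_count,) = conn.execute(
        "SELECT count(*) FROM articles WHERE slug LIKE ?", (like,)
    ).fetchone()
    (done_count,) = conn.execute(
        "SELECT count(*) FROM articles WHERE slug LIKE ? "
        "AND rewrite_status = 'done' AND status = 'published'",
        (like,),
    ).fetchone()
    # A pair counts only if both rows point at each other; a.id < b.id
    # makes sure each pair is counted once.
    (linked_pairs,) = conn.execute(
        "SELECT count(*) FROM articles a "
        "JOIN articles b ON a.related_article_id = b.id "
        "WHERE a.slug LIKE ? AND b.related_article_id = a.id AND a.id < b.id",
        (like,),
    ).fetchone()
    passed = (
        row_count == expected_rows
        and done_count == row_count
        and linked_pairs == expected_pairs
    )
    return {
        "row_count": row_count,
        "done_count": done_count,
        "linked_pairs": linked_pairs,
        "passed": passed,
    }


def build_demo_db():
    """Create 4 zh/en pairs (8 rows) that satisfy every criterion."""
    conn = sqlite3.connect(":memory:")
    conn.execute(
        "CREATE TABLE articles (id INTEGER PRIMARY KEY, slug TEXT, "
        "rewrite_status TEXT, status TEXT, related_article_id INTEGER)"
    )
    rows = []
    for pair in range(4):
        zh_id, en_id = 2 * pair + 1, 2 * pair + 2
        rows.append((zh_id, f"xyz-{pair}-zh", "done", "published", en_id))
        rows.append((en_id, f"xyz-{pair}-en", "done", "published", zh_id))
    conn.executemany("INSERT INTO articles VALUES (?, ?, ?, ?, ?)", rows)
    return conn
```

Calling `check_exit_criteria(build_demo_db(), "xyz-", 8, 4)` returns the measured counts together with `passed`, which is the kind of report the template asks the subagent to attach.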

5. Cross-CLI collaboration: the trade-offs among tmux, MCP server, and a file bus

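At some point you start wondering: "Can Claude Code act as the controller and dispatch work to Codex, Gemini, a local llama.cpp, and other models?" The idea is reasonable, because each model has its own strengths: Opus at architectural judgment, Codex at refactoring, Gemini at long-context summarization.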

There are three implementation paths:

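Path 1: tmux send-keys

Open several tmux windows, each running a different CLI; the controller dispatches commands with tmux send-keys and then scrapes the terminal to read the answers. It gets working quickly, but has four hard flaws: no exit code, no structured response, terminal character pollution, and memory that relies on manual upkeep. Fine for a demo, not for production.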
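For reference, a rough sketch of what the tmux route looks like from Python. `send-keys` and `capture-pane -p` are standard tmux subcommands; the function names, the pane target format, and the fixed wait are assumptions for illustration, and the fixed wait is exactly the kind of guesswork that makes this path fragile.

```python
import subprocess
import time


def send_keys_cmd(target: str, text: str) -> list:
    """Build the tmux command that types `text` into a pane and presses Enter."""
    return ["tmux", "send-keys", "-t", target, text, "Enter"]


def capture_pane_cmd(target: str) -> list:
    """Build the tmux command that prints the pane's visible contents."""
    return ["tmux", "capture-pane", "-p", "-t", target]


def ask(target: str, prompt: str, wait_seconds: float = 10.0) -> str:
    """Send a prompt to a CLI running in a tmux pane and scrape the screen.

    There is no exit code and no completion signal from the CLI itself, so
    this sketch simply sleeps and hopes the answer has finished printing.
    """
    subprocess.run(send_keys_cmd(target, prompt), check=True)
    time.sleep(wait_seconds)
    scraped = subprocess.run(
        capture_pane_cmd(target), check=True, capture_output=True, text=True
    )
    # The result is raw terminal text: prompt echo, answer, and any UI
    # decorations mixed together, which the caller has to untangle.
    return scraped.stdout
```

`ask` requires a running tmux session with a pane at `target`; the two command builders can be inspected without one.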

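Path 2: MCP server

Wrap each CLI as a tool on an MCP server; the controller calls dispatch_to(model, task) and the response comes back as JSON. It is structured, has error handling, and context can be shared through MCP resources. It is more work than tmux (you have to write the MCP server), but it is a one-time investment with long-term payoff.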
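The sketch below shows only the shape of the structured response such a tool could return; the MCP server wiring itself is left out. The `backends` registry, the field names (`ok`, `model`, `output`, `error`), and the stub callables standing in for real CLIs are all assumptions, not part of the MCP specification or of any SDK.

```python
import json
from typing import Callable, Dict

# A backend is any callable that takes a task string and returns text.
# In a real setup it would invoke a CLI; here it is left abstract.
Backend = Callable[[str], str]


def dispatch_to(backends: Dict[str, Backend], model: str, task: str) -> str:
    """Run `task` on the named backend and return a JSON string.

    Every outcome, success or failure, uses the same fields, so the
    controller never has to parse free-form terminal text.
    """
    backend = backends.get(model)
    if backend is None:
        return json.dumps(
            {"ok": False, "model": model, "output": None,
             "error": f"unknown model: {model}"}
        )
    try:
        output = backend(task)
    except Exception as exc:  # report the failure instead of crashing the tool
        return json.dumps(
            {"ok": False, "model": model, "output": None,
             "error": f"{type(exc).__name__}: {exc}"}
        )
    return json.dumps({"ok": True, "model": model, "output": output, "error": None})
```

Compared with scraping a terminal, the controller can branch on `ok` and read `error` directly, which is what "structured, has error handling" buys in practice.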

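Path 3: file bus

All agents share one folder and communicate by reading and writing files. The controller writes /tasks/001.json; workers poll the folder for new tasks and write /results/001.json. It is simple, debuggable, and a worker can be written in any language. The downsides are polling overhead and the lack of real-time behavior.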
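A minimal sketch of such a bus, assuming a `tasks/` and `results/` subfolder under one shared directory as in the description above. The helper names and the JSON fields are hypothetical, and the write-then-rename step is an added precaution of this sketch so that a poller is less likely to read a half-written file.

```python
import json
import os
import tempfile
from pathlib import Path


def write_json_atomic(path: Path, payload: dict) -> None:
    """Write to a temporary name first, then rename into place."""
    tmp = path.with_suffix(".tmp")
    tmp.write_text(json.dumps(payload), encoding="utf-8")
    os.replace(tmp, path)


def submit_task(bus: Path, task_id: str, payload: dict) -> Path:
    """Controller side: drop a task file into the shared folder."""
    tasks = bus / "tasks"
    tasks.mkdir(parents=True, exist_ok=True)
    path = tasks / f"{task_id}.json"
    write_json_atomic(path, payload)
    return path


def poll_once(bus: Path, handler) -> list:
    """Worker side: process every task that has no result file yet."""
    tasks = bus / "tasks"
    results = bus / "results"
    tasks.mkdir(parents=True, exist_ok=True)
    results.mkdir(parents=True, exist_ok=True)
    processed = []
    for task_file in sorted(tasks.glob("*.json")):
        result_file = results / task_file.name
        if result_file.exists():
            continue  # already handled on an earlier poll
        task = json.loads(task_file.read_text(encoding="utf-8"))
        write_json_atomic(
            result_file, {"task_id": task_file.stem, "result": handler(task)}
        )
        processed.append(task_file.stem)
    return processed


def demo():
    """Submit one task, poll twice, and read the result back."""
    with tempfile.TemporaryDirectory() as tmp:
        bus = Path(tmp)
        submit_task(bus, "001", {"text": "hello"})
        first_poll = poll_once(bus, lambda task: task["text"].upper())
        second_poll = poll_once(bus, lambda task: task["text"].upper())
        result = json.loads((bus / "results" / "001.json").read_text(encoding="utf-8"))
    return first_poll, second_poll, result
```

A real worker would call `poll_once` in a loop with a sleep between rounds, which is where the polling overhead and the latency come from.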

My conclusion: tmux for demos, MCP for production, a file bus for research-style experiments. Don't expect one approach to cover every situation.

Closing: from tool to system

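The most counterintuitive thing about Claude Code is that it is not "a tool" but a system that grows. The longer you use it, the better it understands you. The traps you fell into become its Skills, your preferences are written into Memory, and your project rules are carved into CLAUDE.md. After three months you will find it is no longer just an AI assistant, but more like a digital double that keeps working on your behalf.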

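The distance from "chatting with AI" to "having AI do the work for you" is a gap in understanding, not in features. Start with whatever hurts most: if you repeat the same instructions every day, write a CLAUDE.md; if you juggle multiple projects, set up project-level rules; if you want the AI to do things automatically, go for Cron plus Skills.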

What matters is to start using it and let the system grow on its own.