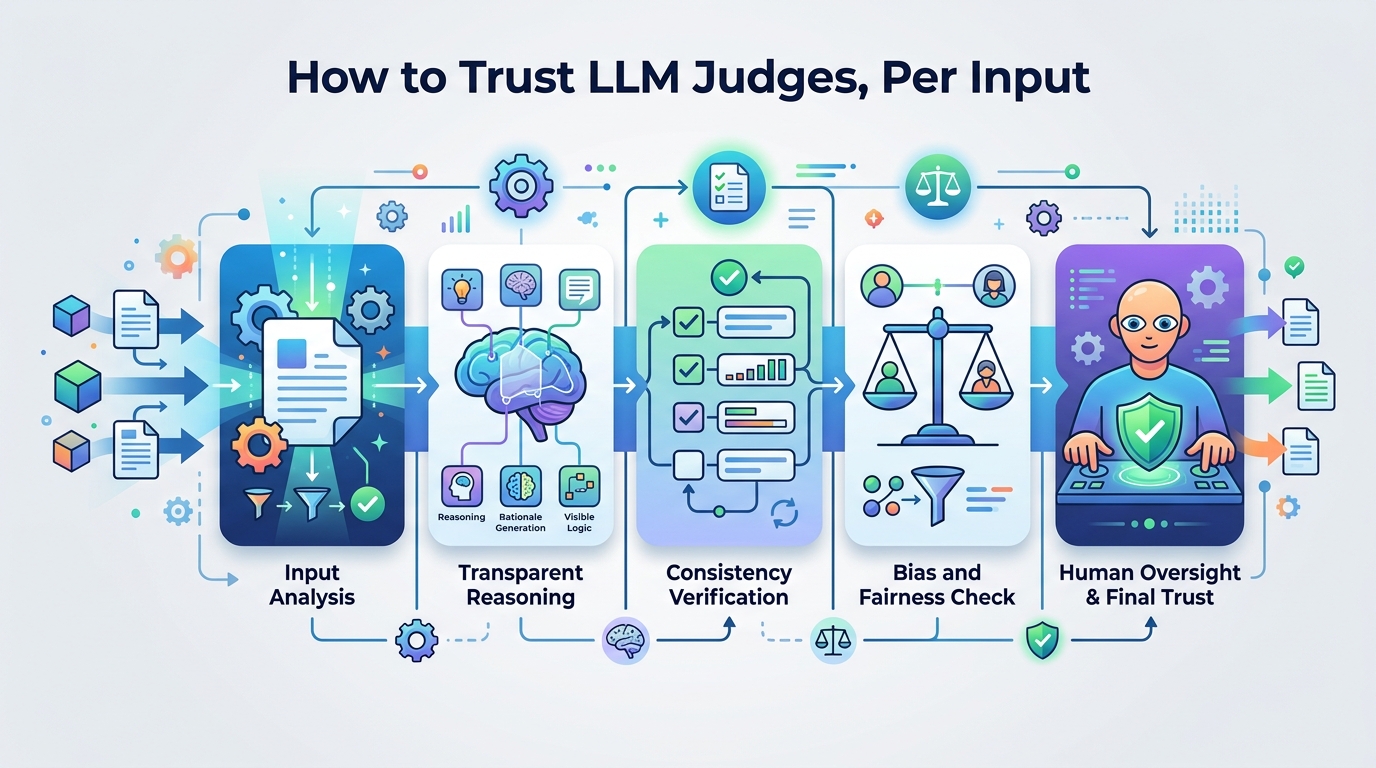

How to Trust LLM Judges, Per Input

A diagnostic toolkit shows LLM judges can look stable on average while still being unreliable on individual inputs.

LLM-as-judge systems are becoming a common way to evaluate generated text, but this paper argues that aggregate scores hide a more important question: how reliable is a judge on a specific document? The paper, “Diagnosing LLM Judge Reliability: Conformal Prediction Sets and Transitivity Violations,” tackles that problem with two diagnostics: a transitivity check and conformal prediction sets.

The practical takeaway is straightforward. If you are using an LLM to score summaries or other NLG outputs, you may want more than a single rating. You may want to know when the judge is uncertain, when its comparisons contradict each other, and whether that uncertainty is tied to the input itself rather than random noise.

What problem this paper is trying to fix

LLM judges are attractive because they can automate evaluation that would otherwise require human review. But “works on average” is not the same as “trustworthy on each example.” A judge can produce low overall error rates and still behave inconsistently on particular documents, especially when the task is subtle.

This paper focuses on that gap. The authors say per-instance reliability is poorly understood, even as LLM-as-judge frameworks are increasingly used for automatic NLG evaluation. Their target is not just whether a judge can rank systems correctly in the aggregate, but whether it can be trusted to make consistent decisions on a single input.

They test this on SummEval, using four judges and four evaluation criteria. The paper is not trying to replace human evaluation outright. Instead, it aims to give developers a way to inspect when an automated judge is likely to be fragile.

How the method works in plain English

The first diagnostic is a transitivity analysis. In a well-behaved judging setup, if A is preferred over B and B is preferred over C, then A should usually be preferred over C. When that pattern breaks, you get a directed 3-cycle. The paper checks for these contradictions to see how often the judge’s preferences fail to line up.
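The check described above can be sketched in a few lines. This is a minimal illustration, not the authors' code: the input format (a set of `(winner, loser)` pairs for one document) and the function name `count_directed_3cycles` are assumptions made for the example, and ties are represented simply by leaving a pair out.

```python
from itertools import combinations

def count_directed_3cycles(wins):
    """Count directed 3-cycles among a judge's pairwise preferences.

    `wins` is a set of (winner, loser) pairs for one document
    (hypothetical format; ties or missing comparisons are just absent).
    Returns (number of cyclic triples, number of triples examined).
    """
    items = sorted({x for pair in wins for x in pair})
    cycles = triples = 0
    for a, b, c in combinations(items, 3):
        triples += 1
        # A triple is cyclic if preferences go a > b > c > a, or the reverse.
        forward = (a, b) in wins and (b, c) in wins and (c, a) in wins
        backward = (b, a) in wins and (c, b) in wins and (a, c) in wins
        if forward or backward:
            cycles += 1
    return cycles, triples

# One document where the judge contradicts itself on A, B, C.
prefs = {("A", "B"), ("B", "C"), ("C", "A"),
         ("A", "D"), ("B", "D"), ("C", "D")}
cycles, triples = count_directed_3cycles(prefs)
```

Here one of the four triples is cyclic, so the document would count as containing at least one directed 3-cycle even though most of its triples are consistent.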

The second diagnostic is split conformal prediction sets over 1-5 Likert scores. In plain terms, instead of outputting one score, the method outputs a set of plausible scores with a theoretical coverage guarantee of at least 1 − α, where α is the miscoverage rate chosen in advance (for example, α = 0.1 targets at least 90% coverage). If the set is narrow, the judge is more confident; if it is wide, the judge is less certain. The authors use set width as a per-instance reliability signal.
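To make the idea concrete, here is a minimal sketch of split conformal prediction on a 1-5 scale. It assumes a held-out calibration set of judge scores paired with human scores and uses the absolute gap between them as the nonconformity score. That choice, along with the names `conformal_quantile` and `prediction_set`, is an assumption for illustration; the paper may define its scores differently.

```python
import math

def conformal_quantile(cal_judge, cal_human, alpha):
    """Calibrate a threshold from held-out (judge, human) score pairs.

    Nonconformity score: |judge - human| (an assumed choice).
    Returns the ceil((n + 1) * (1 - alpha))-th smallest score, or
    infinity when the calibration set is too small for that rank.
    """
    scores = sorted(abs(j - h) for j, h in zip(cal_judge, cal_human))
    n = len(scores)
    # Small tolerance guards against floating-point overshoot in ceil().
    k = math.ceil((n + 1) * (1 - alpha) - 1e-9)
    if k > n:
        return float("inf")
    return scores[k - 1]

def prediction_set(judge_score, q, labels=(1, 2, 3, 4, 5)):
    """All Likert labels whose gap to the judge's score is within q."""
    return [y for y in labels if abs(y - judge_score) <= q]

# Toy calibration data (made up for the example).
cal_judge = [3, 4, 2, 5, 3, 4, 1, 3, 4]
cal_human = [3, 3, 2, 4, 5, 4, 1, 2, 4]
q = conformal_quantile(cal_judge, cal_human, alpha=0.2)
scores_for_new_item = prediction_set(4, q)
width = len(scores_for_new_item)
```

With a single global threshold like this one, set width varies only through clipping at the ends of the scale. Getting width that adapts to each input, as the paper's per-instance signal requires, needs a nonconformity score that depends on the input, for example one built from the judge's score distribution.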

That matters because the width is not just a vague confidence estimate. The paper reports that prediction set width correlates with reliability, and that this signal behaves consistently across judges. In other words, the width appears to reflect document-level difficulty rather than quirks of a particular model.

The authors also compare this diagnostic across criteria. That lets them ask whether some aspects of evaluation are inherently easier for LLM judges than others, which is more useful than asking whether one judge is “better” in a vacuum.

What the paper actually shows

The transitivity results are the first warning sign. The paper reports low aggregate violation rates, with average ρ in the range of 0.8% to 4.1%, but those numbers hide a lot of input-level inconsistency. Between 33% and 67% of documents show at least one directed 3-cycle. So even when the overall violation rate looks small, a large share of documents still trigger contradictory judgments.
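A small worked example shows how both numbers can be true at once. It assumes the aggregate rate is the fraction of all triples that form a cycle, which may differ from the paper's exact definition of ρ, and the cycle counts are invented for illustration. With 8 systems per document there are 56 triples per document, so a handful of cycles barely moves the aggregate rate even when half the documents contain one.

```python
from math import comb

def aggregate_vs_per_document(cycles_per_doc, n_systems):
    """Compare the triple-level violation rate with the share of
    documents that contain at least one cycle.

    cycles_per_doc: number of cyclic triples found in each document
    n_systems: systems compared per document (assumed constant)
    """
    triples_per_doc = comb(n_systems, 3)
    n_docs = len(cycles_per_doc)
    rate = sum(cycles_per_doc) / (triples_per_doc * n_docs)
    share = sum(c > 0 for c in cycles_per_doc) / n_docs
    return rate, share

# Hypothetical counts for 10 documents, 8 systems each.
rate, share = aggregate_vs_per_document([1, 0, 2, 0, 1, 0, 0, 3, 0, 1], 8)
```

In this toy case 8 of 560 triples are cyclic (about 1.4%), yet 5 of 10 documents (50%) have at least one cycle.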

The conformal prediction results are the second major finding. Across all judges, prediction set width is positively associated with reliability, with r_s = +0.576, N = 1,918, and p < 10^-100. The paper also reports cross-judge agreement on this width signal, with average r in the 0.32 to 0.38 range, which supports the idea that the signal reflects the input rather than judge-specific randomness.
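For readers who want to run this kind of check on their own data, a rank correlation between set width and a second per-instance quantity can be computed as below. The sketch reads r_s as a Spearman rank correlation, which is the conventional meaning of that symbol, and pairs width with the absolute judge-human gap; that pairing is an assumption, not necessarily the paper's measure. The function names are hypothetical, and the same function could be applied to the widths produced by two different judges to estimate cross-judge agreement.

```python
def average_ranks(values):
    """1-indexed ranks, with tied values sharing their average rank."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        avg = (i + j) / 2 + 1  # mean of positions i+1 .. j+1
        for k in range(i, j + 1):
            ranks[order[k]] = avg
        i = j + 1
    return ranks

def spearman(x, y):
    """Spearman correlation: Pearson correlation of the rank vectors.

    Raises ZeroDivisionError if either input is constant.
    """
    rx, ry = average_ranks(x), average_ranks(y)
    n = len(rx)
    mx, my = sum(rx) / n, sum(ry) / n
    cov = sum((a - mx) * (b - my) for a, b in zip(rx, ry))
    var_x = sum((a - mx) ** 2 for a in rx)
    var_y = sum((b - my) ** 2 for b in ry)
    return cov / (var_x * var_y) ** 0.5

# Made-up per-document values: set width and |judge - human| gap.
widths = [1, 2, 2, 3, 4, 5]
gaps = [0, 0, 1, 1, 2, 3]
r_s = spearman(widths, gaps)
```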

On the criterion side, the paper says criterion matters more than judge. Relevance is judged most reliably, with an average set size of about 3.0. Coherence is moderately reliable, with an average set size of about 3.9. Fluency and consistency are the weakest, with average set sizes around 4.9. Since the scores are on a 1-5 Likert scale, a larger set means the judge is leaving more of the scale in play; at about 4.9, nearly every score on the scale remains plausible.

One important constraint: the abstract does not provide broader benchmark comparisons, and it does not claim that these diagnostics solve evaluation quality end to end. What it does show is that two different lenses, transitivity and conformal set width, both point to the same conclusion: some evaluation tasks are much harder for LLM judges than others, and per-instance uncertainty is real.

Why developers should care

If you build or ship systems that rely on LLM judges, this paper suggests a more cautious evaluation workflow. A single score can be misleading, especially when the judge is being used as a proxy for human preference. The more practical pattern is to treat the judge as an instrument with observable uncertainty, not as an oracle.

That has a few concrete implications:

- Use per-example uncertainty instead of only aggregate averages.

- Watch for inconsistent pairwise preferences, not just final scores.

- Expect some criteria to be much harder than others.

- Prefer diagnostics that are stable across judges if you need a general reliability signal.

For teams evaluating summarization, ranking, or other NLG outputs, this can help decide when to trust automated scoring and when to fall back to human review. A wide conformal set is a useful warning sign: the judge may be operating in a region where the input is inherently ambiguous or the criterion is too subjective.
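One way to act on these signals is a simple routing rule that sends shaky items to human review. The sketch below is an illustration only: the `JudgedItem` fields, the `route` function, and the `max_width=3` threshold are all hypothetical and would need tuning against your own data.

```python
from dataclasses import dataclass

@dataclass
class JudgedItem:
    doc_id: str
    score: int        # the judge's 1-5 rating
    set_width: int    # size of the conformal prediction set
    has_cycle: bool   # any directed 3-cycle found for this document

def route(items, max_width=3):
    """Split items into auto-accepted and human-review queues.

    An item goes to review if its prediction set is wider than
    max_width (an assumed threshold) or its pairwise preferences
    contain a cycle.
    """
    auto, review = [], []
    for item in items:
        if item.has_cycle or item.set_width > max_width:
            review.append(item.doc_id)
        else:
            auto.append(item.doc_id)
    return auto, review

items = [
    JudgedItem("doc-1", score=4, set_width=2, has_cycle=False),
    JudgedItem("doc-2", score=3, set_width=5, has_cycle=False),
    JudgedItem("doc-3", score=5, set_width=2, has_cycle=True),
]
auto, review = route(items)
```

Keeping the two signals separate in the record makes it easy to audit later which one triggered a review.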

The paper is also useful as a design pattern. It shows how to turn an LLM judge into something more inspectable without changing the underlying model: add a consistency check, add a calibrated uncertainty estimate, and then look at where those signals disagree with the headline score.

Limits and open questions

The study is focused on SummEval, so the results are strongest for that setting. The abstract does not claim the same numbers will hold across other datasets, domains, or prompt styles. It also does not provide benchmark tables beyond the reported diagnostics, so there is no evidence here that one judge dominates another in a general-purpose sense.

Another limitation is that conformal prediction sets tell you when a judge is uncertain, but not why. A wide set could mean the document is genuinely difficult, the criterion is underspecified, or the judge is poorly calibrated for that task. The paper’s cross-judge agreement suggests the signal is meaningful, but it does not fully disentangle those causes.

Still, the core message is strong: if you use LLM judges, you should measure reliability at the document level, not just the dataset level. This paper gives developers two practical tools for doing that, and both point to the same conclusion: some evaluation decisions are much less stable than they look from the aggregate view.

The authors say they release code, prompts, and cached results, which should make the diagnostics easier to reproduce and adapt. For practitioners, that is probably the most useful part: not a new judge, but a way to tell when a judge is likely to be shaky.