MM-WebAgent Makes Webpage Generation More Coherent

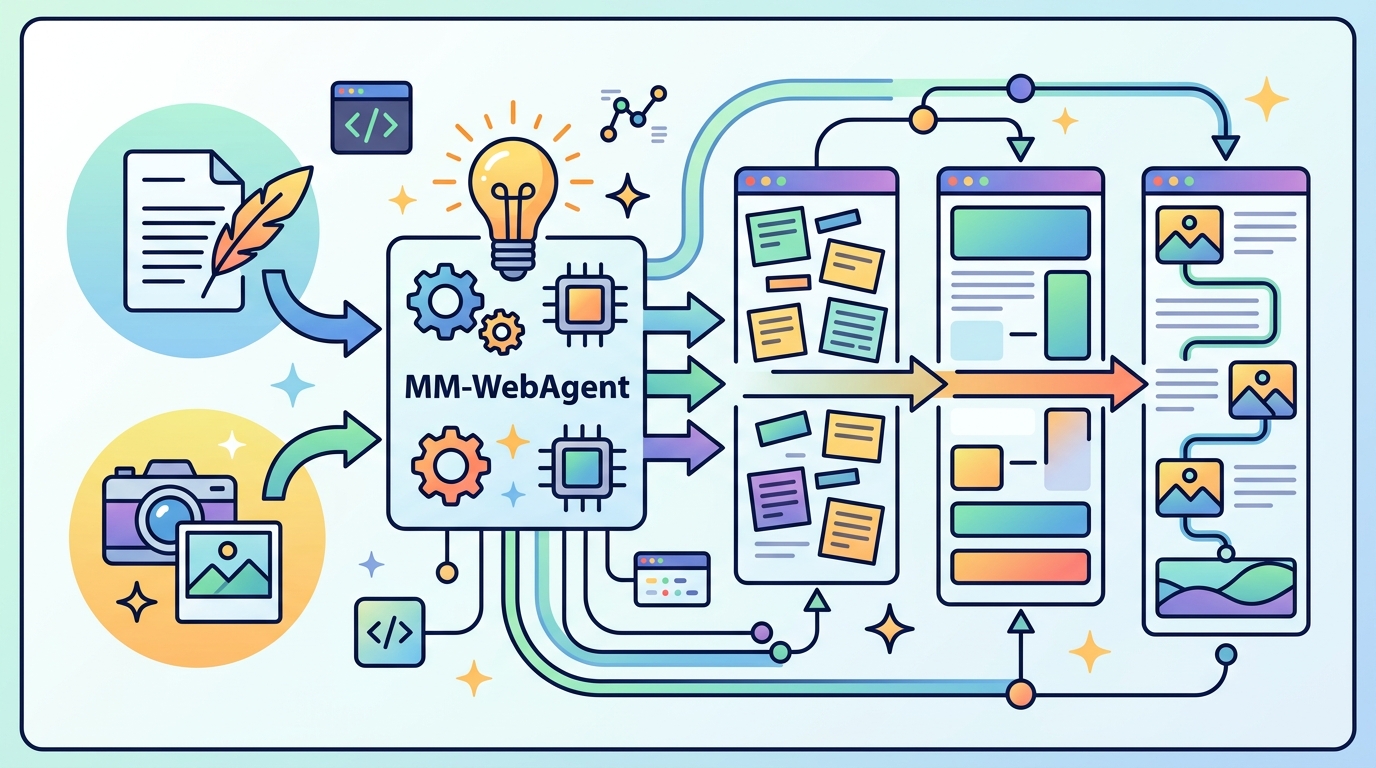

MM-WebAgent uses hierarchical planning and self-reflection to generate more coherent multimodal webpages from AI-generated content (AIGC) elements.

The paper "MM-WebAgent: A Hierarchical Multimodal Web Agent for Webpage Generation" tackles a real pain point in AI-assisted UI work: when images, videos, and other visual assets are generated independently, the final page can look stitched together instead of designed. The paper argues that webpage generation needs more than isolated content creation; it needs coordination across layout, content, and integration.

That matters for engineers because modern web design workflows are increasingly multimodal. If your generation pipeline can create assets but cannot keep them visually aligned, you end up with style drift, weak composition, and a lot of manual cleanup. MM-WebAgent is built to reduce that gap by treating webpage generation as a hierarchical, agent-driven process instead of a one-shot generation task.

What problem this paper is trying to fix

The core issue is inconsistency. The abstract says that directly integrating AIGC tools into automated webpage generation often produces style inconsistency and poor global coherence because elements are generated in isolation. In practice, that means the header, hero image, body visuals, and supporting media may each look fine on their own but fail to work together as a page.

This is a familiar failure mode for multimodal systems. A model may be good at making an image or drafting a section of a page, but if it lacks a global plan, the result can feel disconnected. The paper positions MM-WebAgent as a way to coordinate those pieces so the output looks like a single webpage rather than a bundle of unrelated assets.

The authors also frame this as a limitation of both code-generation and agent-based baselines. The paper does not claim those approaches are useless; instead, it suggests they are not enough when the task requires both multimodal content creation and careful integration across the whole page.

How the method works in plain English

MM-WebAgent is described as a hierarchical agentic framework for multimodal webpage generation. In other words, it does not try to generate everything in one flat step. Instead, it plans at multiple levels and uses iterative self-reflection to improve the result as it goes.

The abstract says the system coordinates AIGC-based element generation through hierarchical planning and iterative self-reflection. It jointly optimizes three things: global layout, local multimodal content, and their integration. That is the key design idea. The page structure is not treated separately from the generated visual elements, and the generated elements are not treated separately from the layout they need to fit into.

That hierarchical setup is important because webpage design has dependencies. A local element can only be judged properly in context: a banner image might be high quality, but if it clashes with the page palette or breaks the composition, it hurts the final page. MM-WebAgent is meant to reason about those dependencies instead of making isolated decisions.
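To make the banner example concrete, here is a minimal sketch of judging an element against its page context rather than in isolation. It is an illustration only, not something the paper describes: `fits_palette`, the `tolerance` value, and the use of plain Euclidean distance in RGB space are all assumptions, and RGB distance is a crude stand-in for perceived color difference.

```python
import math

RGB = tuple[int, int, int]


def color_distance(a: RGB, b: RGB) -> float:
    """Euclidean distance between two RGB colors (a rough, non-perceptual proxy)."""
    return math.dist(a, b)


def fits_palette(dominant: RGB, palette: list[RGB], tolerance: float = 60.0) -> bool:
    """Return True if the element's dominant color is close to any palette color.

    Hypothetical helper: assumes a non-empty palette and an arbitrary tolerance.
    """
    nearest = min(color_distance(dominant, color) for color in palette)
    return nearest <= tolerance


# Hypothetical page palette: a dark blue and an off-white.
page_palette = [(20, 40, 90), (240, 240, 245)]

banner_ok = fits_palette((25, 50, 100), page_palette)     # close to the dark blue
banner_clash = fits_palette((230, 60, 20), page_palette)  # saturated orange-red
```

The point of the sketch is the function signature: the check takes the page palette as an input, so the same banner can pass on one page and fail on another.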

The self-reflection part suggests an iterative loop: the agent generates, checks, and revises rather than stopping at the first output. The abstract does not spell out the full algorithmic details, so we should not infer a specific implementation beyond what is stated. But the overall direction is clear: plan globally, generate locally, then refine the whole.
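The plan, generate, check, revise direction can be sketched as a small control loop. Since the abstract gives no algorithmic details, everything below is an assumption made for illustration: `plan_page`, `generate_element`, and `critique` are hypothetical stubs standing in for model calls, and the loop is not the paper's actual method.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class ElementSpec:
    name: str    # e.g. "hero_image"
    prompt: str  # what the element should depict or say


@dataclass
class PagePlan:
    style: str                   # page-wide style decided up front
    elements: list[ElementSpec]  # local elements to generate


def plan_page(brief: str) -> PagePlan:
    """Hypothetical global planner; a real system would call a model here."""
    return PagePlan(
        style="minimal, blue palette",
        elements=[
            ElementSpec("hero_image", f"{brief} hero"),
            ElementSpec("body_text", f"{brief} copy"),
        ],
    )


def generate_element(spec: ElementSpec, style: str, feedback: Optional[str] = None) -> str:
    """Stub generator: the first draft ignores the page style, a revision applies it."""
    if feedback is None:
        return f"{spec.name}: {spec.prompt}"
    return f"{spec.name}: {spec.prompt} [{style}]"


def critique(artifact: str, style: str) -> Optional[str]:
    """Stub critic: returns None if acceptable, otherwise a feedback message."""
    return None if style in artifact else f"does not reflect page style '{style}'"


def build_page(brief: str, max_rounds: int = 3) -> dict[str, str]:
    plan = plan_page(brief)  # plan globally
    page: dict[str, str] = {}
    for spec in plan.elements:
        artifact = generate_element(spec, plan.style)  # generate locally
        for _ in range(max_rounds):                    # check and revise
            feedback = critique(artifact, plan.style)
            if feedback is None:
                break
            artifact = generate_element(spec, plan.style, feedback)
        # If max_rounds runs out, the last revision is kept without a final check.
        page[spec.name] = artifact
    return page
```

Calling `build_page("coffee shop")` returns one artifact per planned element. In this toy setup each first draft fails the critic and is revised once, which mimics isolated generation being pulled back toward the page-level style.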

What the paper actually shows

The paper introduces a benchmark for multimodal webpage generation and a multi-level evaluation protocol for systematic assessment. That is a meaningful contribution on its own, because multimodal webpage generation is hard to evaluate with a single score. A page can be visually appealing but structurally weak, or structurally sound but visually inconsistent.

The abstract does not provide benchmark numbers, so there are no concrete metrics to report here. What it does say is that experiments demonstrate MM-WebAgent outperforms code-generation and agent-based baselines, especially on multimodal element generation and integration. That points to the areas where the hierarchical approach seems to help most.

Because the abstract does not include the exact evaluation setup, sample sizes, or quantitative gains, we should be careful not to overstate the result. Still, the direction of the finding is useful: the method appears strongest when the challenge is not just making assets, but making them work together inside a page.

The multi-level evaluation protocol is also worth noting for practitioners. In generated UI, a single pass/fail score often hides where a system is actually failing. A protocol that checks multiple levels can help teams separate layout problems from content problems and integration problems, which is exactly what you want when debugging a generation pipeline.
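A small sketch shows why a per-level report is easier to debug than one aggregate score. The three level names follow the layout, content, and integration split mentioned in the abstract, but the `LevelScores` type, the 0 to 1 scale, and the 0.7 threshold are assumptions for illustration, not the paper's protocol.

```python
from dataclasses import asdict, dataclass


@dataclass(frozen=True)
class LevelScores:
    layout: float
    content: float
    integration: float


def overall(scores: LevelScores) -> float:
    """Single aggregate score: the plain mean of the three levels."""
    values = asdict(scores).values()
    return sum(values) / len(values)


def failing_levels(scores: LevelScores, threshold: float = 0.7) -> list[str]:
    """Names of the levels that fall below the threshold."""
    return [name for name, value in asdict(scores).items() if value < threshold]


# Hypothetical scores for one generated page.
scores = LevelScores(layout=0.9, content=0.85, integration=0.4)

passes_overall = overall(scores) >= 0.7  # the mean alone clears the bar
weak_spots = failing_levels(scores)      # the breakdown points at integration
```

With these made-up numbers the page passes on the aggregate score while the breakdown still flags integration, which is the kind of failure a single pass/fail check would hide.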

Why developers should care

If you are building AI-assisted website generators, design copilots, or multimodal UI tooling, this paper points to a practical architecture choice: do not let asset generation happen in isolation. The more your system has to produce a coherent page, the more you need planning, coordination, and revision across levels.

That has direct implementation implications. A pipeline that generates each component independently may be easy to build, but it will likely struggle with coherence. A hierarchical agent can be more complex, but it better matches the structure of the task: first decide the page strategy, then generate the pieces, then check whether they fit together.

- Use global layout decisions before filling in visual details.
- Evaluate generated elements in context, not just on their own.
- Expect iterative refinement to matter when multimodal assets need to align.
- Consider multi-level evaluation if you want to debug failures cleanly.

Limits and open questions

The abstract is promising, but it leaves a lot unsaid. We do not get benchmark numbers, dataset details, or a breakdown of how much each part of the hierarchy contributes. So while the paper claims better performance, the summary available here does not let us judge the size of the improvement or its cost.

There is also an open question around generality. The paper focuses on webpage generation, but it is not clear from the abstract how well the approach transfers to other multimodal design tasks, or how much manual prompting or tuning the agent needs to work well.

Another practical question is runtime and complexity. Hierarchical planning and iterative self-reflection are meant to improve quality, but every extra planning or revision step can also add latency and system complexity. The abstract does not discuss those tradeoffs, so developers should assume there may be a quality-versus-speed balance to manage.
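One common way to manage that balance is to cap the number of revision rounds and stop early once a round no longer helps. The sketch below is a generic pattern, not something taken from the paper; `refine_with_budget`, `max_rounds`, and `min_gain` are hypothetical names, and `revise` and `score` stand in for whatever model calls a real pipeline would make.

```python
from typing import Callable


def refine_with_budget(
    draft: str,
    revise: Callable[[str], str],
    score: Callable[[str], float],
    max_rounds: int = 3,
    min_gain: float = 0.01,
) -> tuple[str, int]:
    """Revise a draft until the budget is spent or improvement stalls.

    Returns the best version seen and the number of revision calls made.
    """
    best, best_score = draft, score(draft)
    rounds = 0
    for _ in range(max_rounds):
        candidate = revise(best)
        rounds += 1
        candidate_score = score(candidate)
        if candidate_score - best_score < min_gain:
            break  # not enough improvement to justify another round
        best, best_score = candidate, candidate_score
    return best, rounds


# Toy stand-ins: each revision appends "!", and the score saturates at length 5.
toy_revise = lambda text: text + "!"
toy_score = lambda text: min(len(text), 5) / 5

result, used = refine_with_budget("abc", toy_revise, toy_score, max_rounds=10)
```

In this toy run the loop stops after three revision calls even though ten were allowed, because the third revision no longer raises the score. Both knobs trade quality for latency: a higher `max_rounds` or a lower `min_gain` spends more calls chasing smaller gains.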

Even with those caveats, the paper’s direction is easy to understand: if you want AI to generate usable webpages, you need the system to think like a designer and a coordinator, not just a content generator. MM-WebAgent is an attempt to make that idea concrete with a hierarchical multimodal agent and a more structured evaluation setup.

For teams working on web generation, the takeaway is not that isolated generation is obsolete, but that it is probably insufficient for polished results. Coherence is a system property, and this paper is about building that property into the generation loop from the start.