Why Multi-Agent Coding Feels Like Distributed Systems

A Hacker News thread argues agentic coding needs staged gates, deterministic checks, and shared state, much like a distributed system.

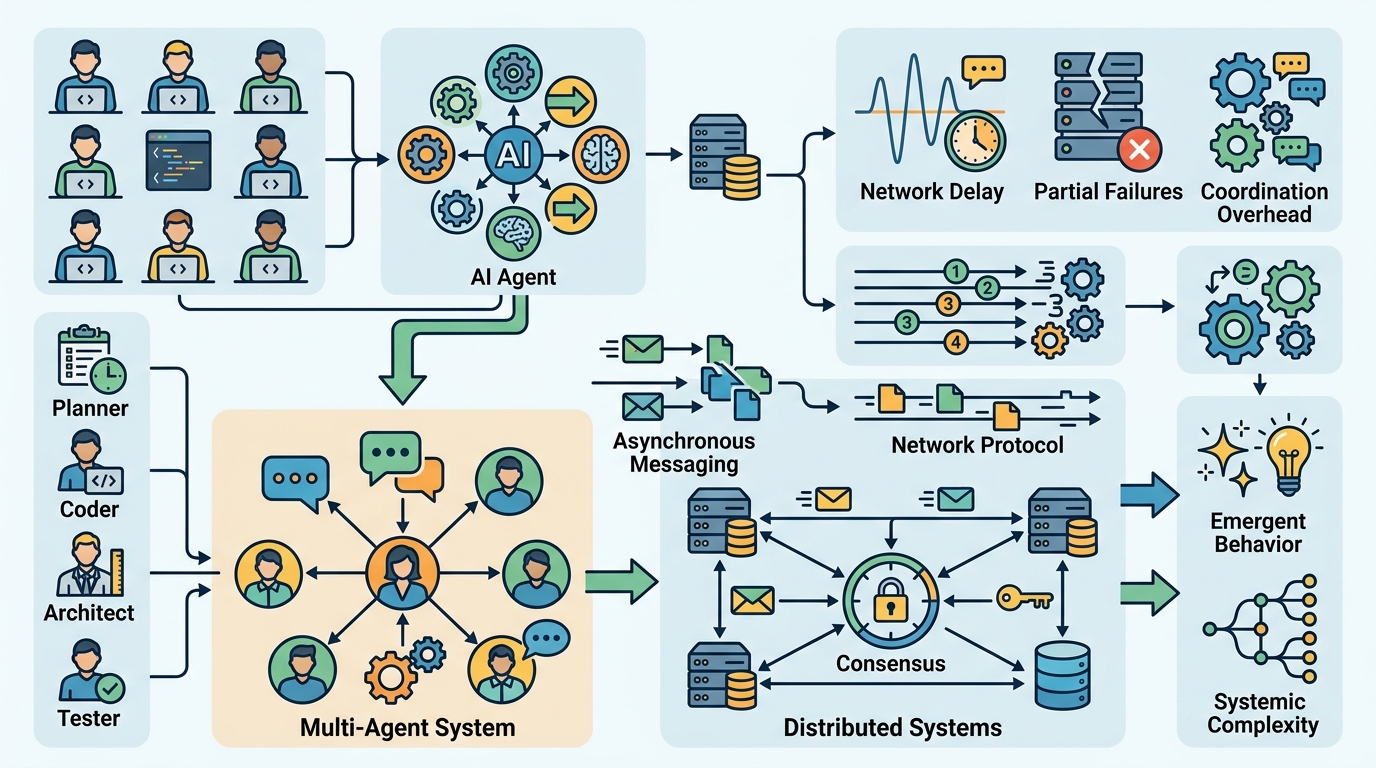

A Hacker News thread with 119 points and 63 comments makes a sharp claim: multi-agent software development behaves like a distributed system. The argument is practical, not academic. If you split work into plan, design, and code stages, then add compile checks, linting, and review gates, you are already coordinating state across independent workers.

That idea matters because agentic coding tools are moving from novelty to workflow. The hard part is no longer getting an LLM to write code. The hard part is getting several model calls, scripts, and human checks to agree on what the software should be at every step.

Why the distributed systems analogy fits

The original post on Hacker News starts from a simple observation: once you add stages and verification gates, the system stops looking like a single “smart” model and starts looking like a protocol. Each stage produces an artifact, and the next stage consumes it. That is shared state, even if the state lives in markdown files, diffs, and test results instead of a database.

The most useful part of the thread is how concrete it gets. One commenter described a workflow with sequential stages, deterministic checks, and an agentic reviewer for qualitative judgment. Another described a DAG-based orchestrator with loops for retries and explicit state tracking. That is software engineering, but with model calls inserted at boundaries where they can be checked.
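The orchestrator shape described above can be sketched in a few lines. This is a minimal illustration, not any commenter's actual framework: `run_pipeline`, `compiles`, and `fake_code_stage` are hypothetical names, the stages are stand-ins for model calls, and the only library used is Python's standard `graphlib`.

```python
from graphlib import TopologicalSorter
from typing import Callable, Dict, List, Tuple

Stage = Callable[[Dict[str, str]], str]   # upstream artifacts -> new artifact
Gate = Callable[[str], bool]              # artifact -> accepted?


def run_pipeline(
    stages: Dict[str, Stage],
    gates: Dict[str, Gate],
    deps: Dict[str, List[str]],
    max_attempts: int = 3,
) -> Tuple[Dict[str, str], Dict[str, int]]:
    """Run stages in dependency order; retry a stage until its gate accepts."""
    state: Dict[str, str] = {}     # accepted artifacts, keyed by stage name
    attempts: Dict[str, int] = {}  # how many tries each stage needed
    for name in TopologicalSorter(deps).static_order():
        upstream = {dep: state[dep] for dep in deps.get(name, [])}
        for attempt in range(1, max_attempts + 1):
            attempts[name] = attempt
            artifact = stages[name](upstream)
            if gates[name](artifact):
                state[name] = artifact  # only gated output becomes shared state
                break
        else:
            raise RuntimeError(f"{name!r} failed its gate {max_attempts} times")
    return state, attempts


def compiles(source: str) -> bool:
    """Deterministic gate: does the artifact parse as Python?"""
    try:
        compile(source, "<artifact>", "exec")
        return True
    except SyntaxError:
        return False


code_calls: List[int] = []


def fake_code_stage(upstream: Dict[str, str]) -> str:
    """Stand-in for a model call; the first answer is deliberately broken."""
    code_calls.append(1)
    if len(code_calls) == 1:
        return "def greet(name) return name"
    return "def greet(name):\n    return 'hi ' + name\n"


state, attempts = run_pipeline(
    stages={
        "plan": lambda up: "add a greet function",
        "design": lambda up: "greet(name) -> str, per plan: " + up["plan"],
        "code": fake_code_stage,
    },
    gates={"plan": bool, "design": bool, "code": compiles},
    deps={"plan": [], "design": ["plan"], "code": ["design"]},
)
print(attempts)  # {'plan': 1, 'design': 1, 'code': 2}
```

The retry loop sits inside a single node, so a rejected artifact never reaches `state` and downstream stages only ever see output that passed its gate.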

The framing is catching on for a concrete reason: LLMs fail in ways that conventional programs do not. A model can misunderstand a prompt, miss a constraint, or drift across a long task. A pipeline with gates does not make the model perfect. It makes failure visible sooner.

- Stages mentioned in the thread: plan, design, code

- Deterministic gates: compile, lint, test, script-based transforms

- Workflow shape: DAG with explicit loops for retries

- Failure assumption in one comment: about 20% task failure rate

That last number is telling. A 20% failure rate sounds bad until you treat agent calls like flaky nodes in a distributed system. Then the question becomes: what can you validate before the next stage sees the output?
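A rough calculation shows why gated retries change the picture. It rests on two loud assumptions that the thread does not make: that the 20% figure applies to each stage separately, and that retries are independent of one another. Real model retries are often correlated, so treat the output as an optimistic bound rather than a prediction.

```python
def success_within(attempts: int, failure_rate: float = 0.2) -> float:
    """Chance that at least one of `attempts` independent tries passes the gate."""
    return 1.0 - failure_rate ** attempts


def chain_success(stages: int, attempts_per_stage: int, failure_rate: float = 0.2) -> float:
    """Chance that every stage in a sequential chain eventually passes."""
    return success_within(attempts_per_stage, failure_rate) ** stages


# Columns: attempts allowed per stage, one-stage success, three-stage chain success
for k in (1, 2, 3):
    print(k, round(success_within(k), 3), round(chain_success(3, k), 3))
# 1 0.8 0.512
# 2 0.96 0.885
# 3 0.992 0.976
```

Under these assumptions, a three-stage chain with no retries finishes cleanly about half the time, while allowing up to three gated attempts per stage brings it to roughly 98%. The gain depends entirely on the gate catching the failure; a retry that nobody checks buys nothing.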

Deterministic gates beat wishful thinking

One of the strongest comments in the thread came from Michael Roth, who described a multi-agent pipeline built around hard checks. He wrote that he breaks work into stages with verification gates, and that deterministic checks provide a hard floor of guarantees while agentic checks provide softer, probabilistic ones. The rest of his design follows from that split between what can be verified mechanically and what can only be judged.

Roth also says he built his own framework over time, starting with shell scripts and aider, then growing it into a full MCP and CLI setup with YAML-defined stages and gates. That detail matters because it shows how these systems evolve in practice: not as one elegant platform, but as a pile of tools glued together until the workflow becomes repeatable.

"You can’t make the agent reliable on its own, but you can make the protocol reliable by checking at every boundary."

That quote gets to the heart of the engineering tradeoff. If the model is a probabilistic component, then the protocol becomes the product. The more deterministic your boundaries are, the less damage a bad generation can do. In other words, the unit of trust is not the model call. It is the handoff.
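One way to picture a checked handoff is a gate that runs the deterministic checks first and consults the softer reviewer only if they all pass. The sketch below is an assumption about how such a gate could look, not Roth's implementation: `check_handoff`, `parses`, `defines`, and the stub reviewer with its fixed score are all hypothetical.

```python
import ast
from dataclasses import dataclass
from typing import Callable, List, Tuple

Check = Callable[[str], Tuple[bool, str]]  # source -> (passed, reason)


@dataclass
class Verdict:
    accepted: bool
    reasons: List[str]


def parses(source: str) -> Tuple[bool, str]:
    """Hard check: the artifact must be syntactically valid Python."""
    try:
        ast.parse(source)
        return True, "parses"
    except SyntaxError as exc:
        return False, f"syntax error: {exc.msg}"


def defines(name: str) -> Check:
    """Hard check factory: the artifact must define a function called `name`."""
    def check(source: str) -> Tuple[bool, str]:
        try:
            tree = ast.parse(source)
        except SyntaxError:
            return False, f"cannot look for {name}: source does not parse"
        found = any(
            isinstance(node, ast.FunctionDef) and node.name == name
            for node in ast.walk(tree)
        )
        return found, (f"defines {name}" if found else f"missing function {name}")
    return check


def check_handoff(
    source: str,
    hard_checks: List[Check],
    reviewer: Callable[[str], float],
    min_score: float = 0.7,
) -> Verdict:
    reasons: List[str] = []
    for check in hard_checks:
        passed, reason = check(source)
        reasons.append(reason)
        if not passed:
            return Verdict(False, reasons)  # hard floor: reviewer is never consulted
    score = reviewer(source)                # soft, probabilistic judgment
    reasons.append(f"reviewer score {score:.2f}")
    return Verdict(score >= min_score, reasons)


reviewer_calls: List[str] = []


def stub_reviewer(source: str) -> float:
    """Stand-in for an agentic reviewer; always returns the same score."""
    reviewer_calls.append(source)
    return 0.9


checks = [parses, defines("greet")]
bad = check_handoff("def greet(name) return name", checks, stub_reviewer)
good = check_handoff("def greet(name):\n    return name\n", checks, stub_reviewer)
print(bad.accepted, good.accepted, len(reviewer_calls))  # False True 1
```

Ordering the checks this way means a high reviewer score can never rescue an artifact that fails a deterministic check, which is what makes the floor hard.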

This is also why people keep reaching for tests, type checks, and scripts before they reach for a second agent. A script that converts a PDF to text is boring, but boring tools create stable inputs. Stable inputs give the next agent fewer chances to hallucinate about file formats, missing context, or hidden formatting issues.

How this compares with real orchestration systems

The thread quickly drifts into territory that looks a lot like workflow engines. One commenter mentions Temporal, pointing out that bounded activity timeouts resemble the partial synchrony assumptions used in classic distributed systems theory. That is a useful comparison because it separates infrastructure reliability from semantic reliability.

Temporal can retry an activity and preserve history. It cannot guarantee that a fresh LLM call will produce the same answer twice. That is where the analogy gets sharp: infrastructure can make execution durable, but it cannot make meaning deterministic. The pipeline still needs gates that validate the content itself.
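The split between durable execution and deterministic meaning can be shown without any workflow engine. The sketch below does not use Temporal's API; `RecordedCalls` is a hypothetical stand-in for the general idea of recording a result so a replay reuses it, and `fake_model` imitates a model that answers differently on each fresh call.

```python
from typing import Callable, Dict, Optional


class RecordedCalls:
    """Records validated results by key so a replay reuses them."""

    def __init__(self, history: Optional[Dict[str, str]] = None) -> None:
        self.history: Dict[str, str] = dict(history or {})

    def call(
        self,
        key: str,
        generate: Callable[[], str],
        validate: Callable[[str], bool],
    ) -> str:
        if key in self.history:
            return self.history[key]  # replay: no new model call is made
        result = generate()
        if not validate(result):      # content gate, separate from durability
            raise ValueError(f"{key!r}: output rejected by content gate")
        self.history[key] = result
        return result


answers = iter(["def f():\n    return 1\n", "def f():\n    return 2\n"])


def fake_model() -> str:
    """Stand-in for an LLM: each fresh call yields a different answer."""
    return next(answers)


def is_python(source: str) -> bool:
    try:
        compile(source, "<artifact>", "exec")
        return True
    except SyntaxError:
        return False


run = RecordedCalls()
first = run.call("implement-f", fake_model, is_python)
replayed = run.call("implement-f", fake_model, is_python)         # served from history
fresh = RecordedCalls().call("implement-f", fake_model, is_python)  # new run, new answer
print(first == replayed, first == fresh)  # True False
```

Recorded history keeps one run consistent with itself. It says nothing about whether a new run will agree, which is why the content gate has to run on every fresh generation.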

Here is the practical comparison the thread implies:

- Temporal handles retries, history, and workflow state

- OpenAI Codex and similar coding agents generate code, but need external checks

- aider helps with code edits, but still depends on the repo’s tests and lint rules

- Scripts and CI catch format, compile, and dependency failures before a human has to read the diff

That setup is much closer to distributed systems engineering than to the old fantasy of “just ask the model to do it.” The pipeline has explicit inputs, explicit outputs, retries, and validation boundaries. It also has failure modes that look familiar to anyone who has run a service mesh, a job queue, or an eventually consistent data flow.

The difference is that the “messages” in this system are often natural language plans, structured specs, and source code. Each one is a projection of the same intent, but each one can drift. The job of the workflow is to keep those projections aligned long enough to ship something correct.

What builders should take from this thread

The most useful takeaway is not philosophical. It is operational. If you are building with agents, stop asking whether the model is smart enough to do the whole task in one shot. Ask where the task can be split into stages, where each stage can be checked, and where a failure should trigger a reset instead of a human guessing at the cause.

That means more than adding unit tests at the end. It means moving verification earlier, using scripts for deterministic transforms, and treating agent output as an intermediate artifact rather than a final answer. It also means accepting that some tasks will fail and designing the workflow so failed work can be wiped and rerun without contaminating later stages.
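Wipe-and-rerun is easier when each attempt works in a throwaway directory and only gated output is promoted. The following is a minimal sketch under that assumption; `run_isolated`, `fake_stage`, and `main_compiles` are hypothetical names, and the stage is a stand-in for an agent that writes files.

```python
import shutil
import tempfile
from pathlib import Path
from typing import Callable


def run_isolated(
    stage: Callable[[Path, int], None],
    gate: Callable[[Path], bool],
    accepted_dir: Path,
    max_attempts: int = 3,
) -> int:
    """Run `stage` in a fresh scratch dir per attempt; promote only gated output."""
    for attempt in range(1, max_attempts + 1):
        with tempfile.TemporaryDirectory() as scratch:
            workdir = Path(scratch)
            stage(workdir, attempt)
            if gate(workdir):
                shutil.copytree(workdir, accepted_dir, dirs_exist_ok=True)
                return attempt
        # Leaving the with-block deletes the scratch dir, so nothing from a
        # failed attempt can leak into the next one.
    raise RuntimeError(f"stage failed its gate {max_attempts} times")


def fake_stage(workdir: Path, attempt: int) -> None:
    """Stand-in for an agent: the first attempt leaves junk and broken code."""
    if attempt == 1:
        (workdir / "junk.tmp").write_text("half-finished notes")
        (workdir / "main.py").write_text("def greet(name) return name")
    else:
        (workdir / "main.py").write_text("def greet(name):\n    return name\n")


def main_compiles(workdir: Path) -> bool:
    """Deterministic gate: main.py must exist and parse."""
    try:
        compile((workdir / "main.py").read_text(), "main.py", "exec")
        return True
    except (OSError, SyntaxError):
        return False


accepted = Path(tempfile.mkdtemp())  # stands in for the directory later stages read
attempts_used = run_isolated(fake_stage, main_compiles, accepted)
print(attempts_used, sorted(p.name for p in accepted.iterdir()))  # 2 ['main.py']
```

The stray `junk.tmp` from the failed attempt never reaches the accepted directory, so a later stage cannot mistake it for context.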

For teams experimenting with agentic coding, the best near-term pattern is probably a narrow one: one agent for planning, one for implementation, one for review, with CI as the referee. Keep the interfaces between them boring. Keep the checks strict. Keep the state visible.

If this thread is right, the next real breakthrough in agentic software development will not come from a model that never makes mistakes. It will come from teams that build better protocols around models that still do. The question worth asking now is simple: which parts of your engineering process can be turned into checked handoffs before you let another agent touch them?
