SpatialEvo fixes self-training for 3D reasoning

SpatialEvo uses deterministic geometry to turn unlabeled 3D scenes into exact supervision, reducing self-training noise for spatial reasoning.

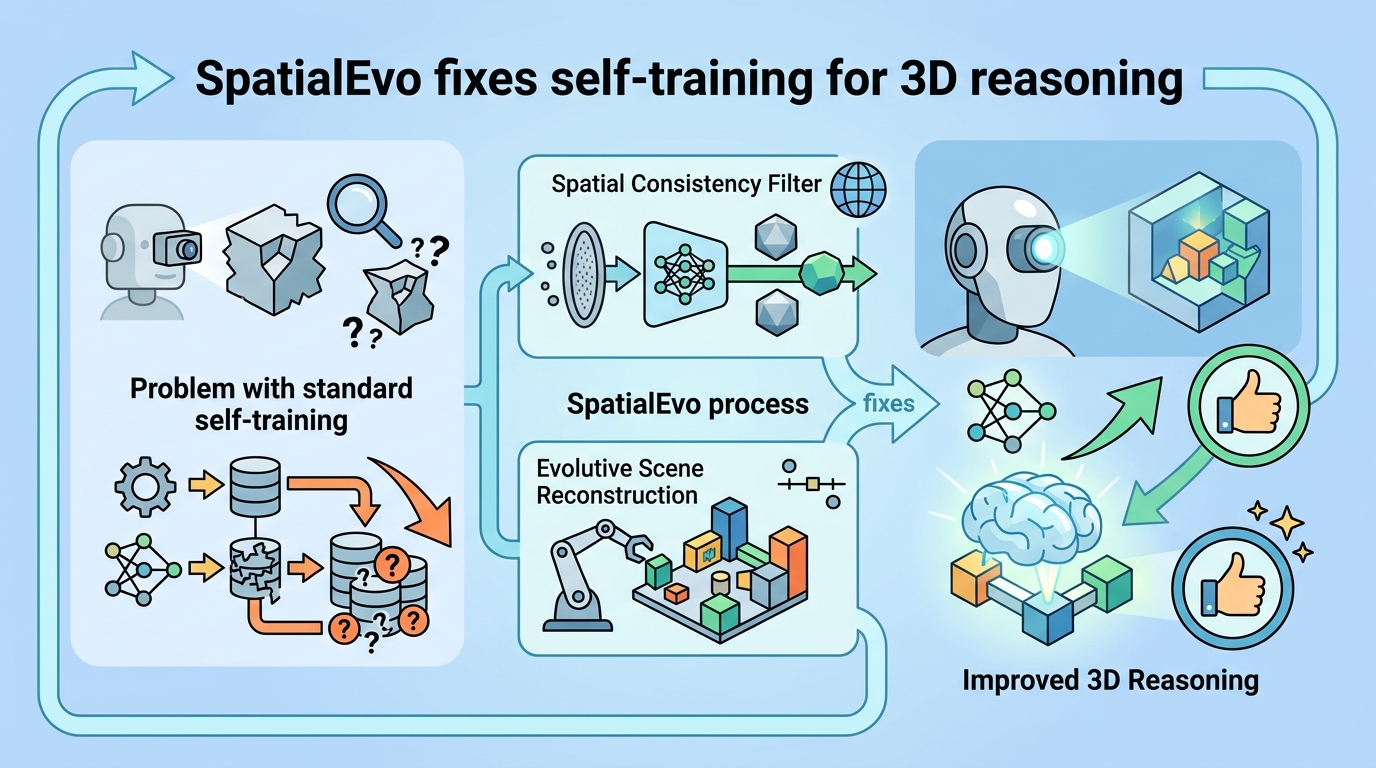

Spatial reasoning in 3D scenes looks simple until you try to build it into an embodied system. According to the paper "SpatialEvo: Self-Evolving Spatial Intelligence via Deterministic Geometric Environments", the problem is that continuous improvement usually depends either on expensive geometric annotation or on self-training loops that can reinforce their own mistakes.

SpatialEvo proposes a different path: if the scene geometry is known, then the correct answer to many spatial questions is not a guess or a consensus vote, but a deterministic consequence of the geometry itself. That lets the system generate exact supervision from point clouds and camera poses, without asking another model to label the data first.

What problem this paper is trying to fix

The paper starts from a practical bottleneck familiar to anyone working on embodied intelligence or 3D vision: spatial reasoning models need lots of supervision, but geometric annotation is costly. In a self-evolving setup, models can train on pseudo-labels they generate themselves, but that creates a second problem. If the model is already wrong about geometry, then consensus-based pseudo-labeling can preserve those errors instead of correcting them.
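To make the failure mode concrete, here is a toy sketch of consensus-based pseudo-labeling. The sampled answers and the "true" relation are invented for illustration and are not taken from the paper; the point is only that a majority vote returns whatever the model already believes.

```python
from collections import Counter


def consensus_label(sampled_answers):
    """Return the most frequent answer among several model samples."""
    return Counter(sampled_answers).most_common(1)[0][0]


# Hypothetical samples from a model with a systematic left/right bias.
samples = ["left", "left", "right", "left", "right"]
pseudo_label = consensus_label(samples)

# Hypothetical answer implied by the scene geometry.
geometric_truth = "right"

print(pseudo_label)                     # left
print(pseudo_label == geometric_truth)  # False
```

Training on `pseudo_label` here would push the model further toward its existing bias, which is the self-reinforcement the paper is trying to avoid.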

That matters more in 3D than in many other settings because spatial relations are tied to physical structure. If a model confuses left/right, occlusion, distance, or relative position, the error is not just semantic noise; it breaks the logic of navigation, manipulation, and scene understanding pipelines built on top of it.

The key claim in the paper is that 3D spatial reasoning has a special property that makes this self-training failure avoidable: ground truth can be computed exactly from the scene geometry. In other words, if you have point clouds and camera poses, you can derive the answer directly instead of relying on model agreement.
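As a rough illustration of what "derive the answer directly" can look like, the sketch below computes a left/right relation and an object-to-object distance from point coordinates and a camera pose. Everything in it is an assumption made for the example: a top-down 2D scene, a yaw-only camera pose, object position taken as the centroid of its points, and the function names themselves. The paper's actual rules are not described in the abstract.

```python
import math


def centroid(points):
    """Mean (x, y) position of an object's points."""
    n = len(points)
    return (sum(p[0] for p in points) / n, sum(p[1] for p in points) / n)


def world_to_camera(point, cam_position, cam_yaw):
    """Express a world-frame (x, y) point in the camera frame.

    Assumed convention: yaw is in radians, counter-clockwise from the
    world +x axis; the camera looks along its own +x axis and +y is
    to its left.
    """
    dx = point[0] - cam_position[0]
    dy = point[1] - cam_position[1]
    cos_y, sin_y = math.cos(cam_yaw), math.sin(cam_yaw)
    forward = cos_y * dx + sin_y * dy
    left = -sin_y * dx + cos_y * dy
    return forward, left


def left_or_right(object_points, cam_position, cam_yaw):
    """Which side of the camera's viewing direction the object lies on."""
    _, left = world_to_camera(centroid(object_points), cam_position, cam_yaw)
    return "left" if left > 0 else "right"


def distance_between(points_a, points_b):
    """Euclidean distance between two object centroids."""
    return math.dist(centroid(points_a), centroid(points_b))


# Hypothetical scene: two small point sets standing in for objects.
chair = [(2.0, 1.0), (2.2, 1.2), (1.8, 0.8)]
table = [(3.0, -1.0), (3.0, -1.0)]

print(left_or_right(chair, (0.0, 0.0), 0.0))      # left
print(left_or_right(chair, (0.0, 0.0), math.pi))  # right
print(round(distance_between(chair, table), 3))   # 2.236
```

The same chair is "left" from one pose and "right" from the opposite one, and both labels follow from arithmetic on the geometry rather than from any model's opinion.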

How SpatialEvo works in plain English

SpatialEvo is built around something the paper calls the Deterministic Geometric Environment, or DGE. The DGE formalizes 16 spatial reasoning task categories and applies explicit geometric validation rules. In practice, that turns unannotated 3D scenes into what the paper describes as zero-noise interactive oracles.

That phrase is worth unpacking. Instead of using a model to invent labels for training, the environment itself checks whether a question is physically valid and whether an answer is correct according to the underlying geometry. So the training signal comes from objective physical feedback, not from model consensus.

The system uses a single shared-parameter policy that co-evolves in two roles: questioner and solver. The questioner generates spatial questions grounded in scene observations, and those questions must be physically valid under the DGE constraints. The solver then answers them, and its answers are checked against the DGE-verified ground truth.
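The questioner/solver split can be sketched with a toy environment that first decides whether a question is valid and then scores an answer against geometry. This is a hypothetical stand-in, not the paper's DGE: the class name, the single `closer_to_camera` category, the `min_gap` ambiguity threshold, and the 0/1 score are all assumptions made for illustration.

```python
import math
from dataclasses import dataclass


@dataclass(frozen=True)
class Question:
    category: str
    object_a: str
    object_b: str


class ToyGeometricEnvironment:
    """Hypothetical oracle over one scene of named object centroids."""

    def __init__(self, centroids, camera_position, min_gap=0.1):
        self.centroids = centroids
        self.camera_position = camera_position
        self.min_gap = min_gap  # assumed threshold for rejecting near-ties

    def _camera_distance(self, name):
        return math.dist(self.centroids[name], self.camera_position)

    def is_valid(self, q):
        """Reject questions that are unsupported, malformed, or ambiguous."""
        if q.category != "closer_to_camera":
            return False
        if q.object_a == q.object_b:
            return False
        if q.object_a not in self.centroids or q.object_b not in self.centroids:
            return False
        gap = abs(self._camera_distance(q.object_a) - self._camera_distance(q.object_b))
        return gap >= self.min_gap

    def ground_truth(self, q):
        """Name of the object nearer to the camera, computed from geometry."""
        if not self.is_valid(q):
            raise ValueError("question is not valid for this scene")
        return min((q.object_a, q.object_b), key=self._camera_distance)

    def score(self, q, answer):
        """1.0 if the solver's answer matches the geometric ground truth."""
        return 1.0 if answer == self.ground_truth(q) else 0.0


env = ToyGeometricEnvironment(
    centroids={"chair": (1.0, 0.0, 0.0), "lamp": (3.0, 0.0, 0.0), "stool": (1.05, 0.0, 0.0)},
    camera_position=(0.0, 0.0, 0.0),
)

clear_question = Question("closer_to_camera", "chair", "lamp")
ambiguous_question = Question("closer_to_camera", "chair", "stool")

print(env.is_valid(clear_question))       # True
print(env.is_valid(ambiguous_question))   # False
print(env.score(clear_question, "lamp"))  # 0.0
```

In a loop like this, an invalid question never reaches the solver, and a wrong answer is scored by the scene rather than by agreement with other model samples.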

There is also a task-adaptive scheduler. Rather than relying on a manually designed curriculum, the scheduler focuses training on the model’s weakest categories. That means the training distribution shifts toward the tasks where the model is underperforming, which is a straightforward way to spend more compute where it is most needed.

- DGE: deterministic validation from geometry, not model votes

- 16 task categories: explicit spatial reasoning buckets

- Shared policy: one model plays both questioner and solver

- Adaptive scheduler: emphasizes weak categories automatically
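The adaptive scheduler is the easiest piece to picture in code. One simple way to bias training toward weak categories, shown below, is to sample each category in proportion to its current error rate. This weighting rule, the `floor` parameter, and the category names are assumptions for the sketch; the abstract does not say how the paper's scheduler computes its priorities.

```python
import random


def category_weights(accuracy_by_category, floor=0.05):
    """Sampling probabilities proportional to each category's error rate.

    The floor keeps already-strong categories from disappearing from
    training entirely.
    """
    raw = {c: max(1.0 - acc, floor) for c, acc in accuracy_by_category.items()}
    total = sum(raw.values())
    return {c: w / total for c, w in raw.items()}


def sample_category(weights, rng):
    """Draw one category name according to the given probabilities."""
    categories = list(weights)
    return rng.choices(categories, weights=[weights[c] for c in categories], k=1)[0]


# Hypothetical running accuracies for three of the task categories.
accuracy = {"relative_direction": 0.9, "distance": 0.5, "occlusion": 0.6}
weights = category_weights(accuracy)

rng = random.Random(0)  # seeded so the sketch is reproducible
next_category = sample_category(weights, rng)
```

With these numbers, `distance` gets roughly half of the sampling mass and `relative_direction` about a tenth, so compute flows toward the weakest category without anyone hand-writing a curriculum.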

What the paper actually shows

The abstract says the authors ran experiments across nine benchmarks, but it does not name them or give per-benchmark numbers, so those specifics are not available from the abstract alone.

What the paper does claim is that SpatialEvo reaches the highest average score at both 3B and 7B model scales. It also reports consistent gains on spatial reasoning benchmarks, while avoiding degradation on general visual understanding.

That last part is important. A common fear with specialized training is that it improves one narrow capability by damaging broader performance. According to the abstract, SpatialEvo avoids that tradeoff, at least in the reported experiments.

Because the abstract gives no exact metrics, the safest reading is that it claims a relative improvement over the compared methods without quantifying it. Engineers evaluating the method would still want the full paper for benchmark-by-benchmark results, ablations, and implementation details.

Why developers should care

If you are building agents that need to understand rooms, objects, layouts, or camera-based scene structure, the main appeal here is data efficiency with cleaner supervision. SpatialEvo suggests you can get better spatial reasoning training signals without paying the annotation tax for every scene.

It also offers a more reliable alternative to pseudo-labeling in domains where the environment can be checked deterministically. That is a useful design pattern: when the world already contains exact structure, use it as the teacher instead of asking the model to grade itself.

For embodied AI systems, robotics stacks, and 3D assistants, this could mean a training loop that is more stable and less prone to reinforcing its own errors. The adaptive scheduler is also practical: if a model is weak on some spatial categories, the system automatically pushes more training toward those categories instead of requiring manual curriculum tuning.

Limitations and open questions

The biggest limitation visible from the abstract is scope. SpatialEvo is specifically about 3D spatial reasoning under deterministic geometric conditions. That makes it promising for scene understanding, but it does not automatically solve broader reasoning problems where ground truth is not derivable from geometry.

The abstract also does not tell us how the 16 task categories are defined, how the geometric validation rules are implemented, or how sensitive the method is to imperfect point clouds and camera poses. Those details matter in real systems, because sensor noise and reconstruction errors are part of everyday deployment.

Another open question is generalization. The abstract reports no degradation on general visual understanding, but it does not say how that was measured or how the method behaves outside the nine evaluated benchmarks.

Still, the core idea is strong and fairly practical: if your training domain has deterministic structure, you may not need noisy pseudo-labels at all. SpatialEvo turns that idea into a self-evolving loop for 3D spatial intelligence, and that is exactly the kind of method developers should watch if they work anywhere near embodied perception or geometric reasoning.