Why Jensen Huang Is Wrong About AGI Being Achieved

Jensen Huang is wrong: today’s AI is impressive, but it is not AGI, and calling it that only muddies the public debate.

His own words make the problem obvious. On Lex Fridman’s podcast, Huang said, “I think we’ve achieved AGI,” then, minutes later, admitted that “the probability that 100,000 of those agents build NVIDIA is zero percent.” That is not a minor clarification. It is a concession that the systems he called AGI, even 100,000 of them working together, cannot build or sustain a company and cannot reliably carry responsibility across time. If a system counts as “general” only because it can briefly produce a viral app, then the term no longer means anything useful. It becomes marketing.

First argument: Huang’s definition is far too small

The first reason his claim fails is simple: Huang is using a narrow success story to stand in for general intelligence. A model that can generate code, draft copy, or help launch a product is useful. A system that can reason across domains, adapt to new conditions, and handle open-ended goals is something else entirely. Huang’s example of an AI that could create a hit app, generate revenue, and then disappear is not evidence of AGI. It is evidence of a strong tool operating inside a constrained environment.

The contrast with accepted definitions is stark. In Artificial Intelligence: A Modern Approach, AGI is framed as a system that can “understand, learn, and perform any intellectual task that a human being can perform.” OpenAI’s own public definition is “highly autonomous systems that outperform humans at most economically valuable work.” Those are serious thresholds. By those standards, current models are not AGI. They are better described as advanced narrow systems with broad surface area and deep limits.

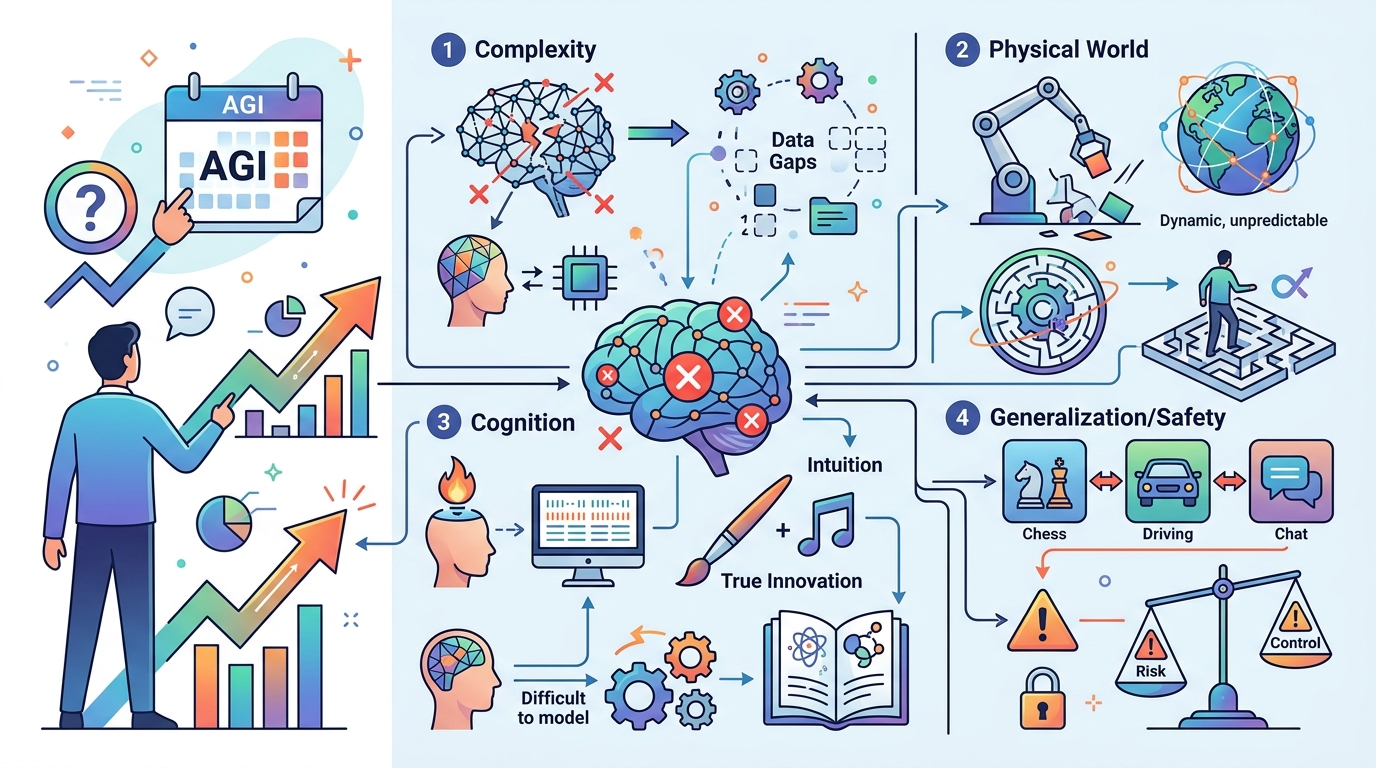

Second argument: the evidence of failure is still everywhere

If AGI were here, we would not still be talking about hallucinations, brittle reasoning, and poor long-horizon planning as core product risks. Yet those are exactly the failure modes that define today’s systems. They can ace exams, summarize large bodies of text, and write competent code in familiar patterns. They still struggle with causal reasoning, stable memory, real-world grounding, and consistent decision-making over time. That is not a small gap. It is the gap between a powerful assistant and a general intelligence.

The research community knows this. Yann LeCun has argued that scaling large language models alone will not produce human-level intelligence and that world models are needed instead. Gary Marcus has repeatedly said LLMs are not remotely close to AGI. Even Satya Nadella has said we are not even close. The disagreement is not about whether AI is useful. It is about a category error. Calling a system AGI because it can impress a user for a few minutes is like calling a calculator a mathematician because it can solve an equation faster than a human.

The counter-argument

The strongest defense of Huang’s claim is that AGI has always been a moving target. Humans do not possess magical, unified intelligence either. We are specialized, brittle, and dependent on tools. In that sense, a system that can write, code, search, summarize, and reason across many tasks may already qualify as “general enough” for practical use. From this angle, insisting on a perfect human replica is a trap. It delays recognition of a real milestone because the milestone does not look like science fiction.

There is also a pragmatic argument. If a model can perform a wide range of economically valuable work, even imperfectly, then businesses will treat it as AGI in practice. Markets, policy, and labor all respond to capability, not metaphysics. If the point of the label is to mark a threshold where AI becomes transformative, then Huang’s supporters can argue that the threshold has been crossed already.

That case fails because terminology matters when money, contracts, and public policy are on the line. If AGI is redefined downward until it means “very useful software,” then the term stops signaling a real technological shift. It becomes a rhetorical device. The practical threshold for business automation is not the same thing as artificial general intelligence, and collapsing the two only inflates expectations while hiding real limitations. We should absolutely say current AI is transformative. We should not pretend that transformation is the same as AGI.

What to do with this

If you are an engineer, PM, or founder, stop using AGI as a marketing shortcut and start using capability-based language instead. Describe what the model can do, where it fails, how often it hallucinates, and what human oversight remains necessary. Build for narrow reliability, not vague generality. If you are making product or investment decisions, treat today’s models as powerful but bounded systems, and plan for supervision, verification, and rollback. The right move is not to argue about whether AGI has arrived. The right move is to design as if it has not.
