Why Prompt Standards Matter for AI Work

A new Springer chapter argues prompt engineering needs shared standards to cut token waste, reduce errors, and improve AI accountability.

A single prompt can waste tokens, trigger a wrong answer, or, when written well, save real money at scale. That is why Hamid Tavakoli’s new Springer Nature chapter, “Why Prompt Engineering Standards Matter,” treats prompting as a discipline, not a casual chat trick.

The chapter lands at the right time. As teams move from one-off chatbot experiments to production systems, the difference between a vague prompt and a structured one starts to show up in cost, quality, compliance, and user trust.

What Tavakoli is really arguing is simple: if prompts shape model behavior, then prompts need rules, shared vocabulary, and a repeatable structure. That idea sounds obvious after you hear it, but the industry has spent years acting like prompt writing is a side skill instead of part of system design.

Why prompts are more than text

Tavakoli frames prompts as structured design instruments. That matters because large language models do not infer intent the way humans do. They react to wording, ordering, constraints, examples, and context length. A prompt is closer to an interface spec than a message to a friend.

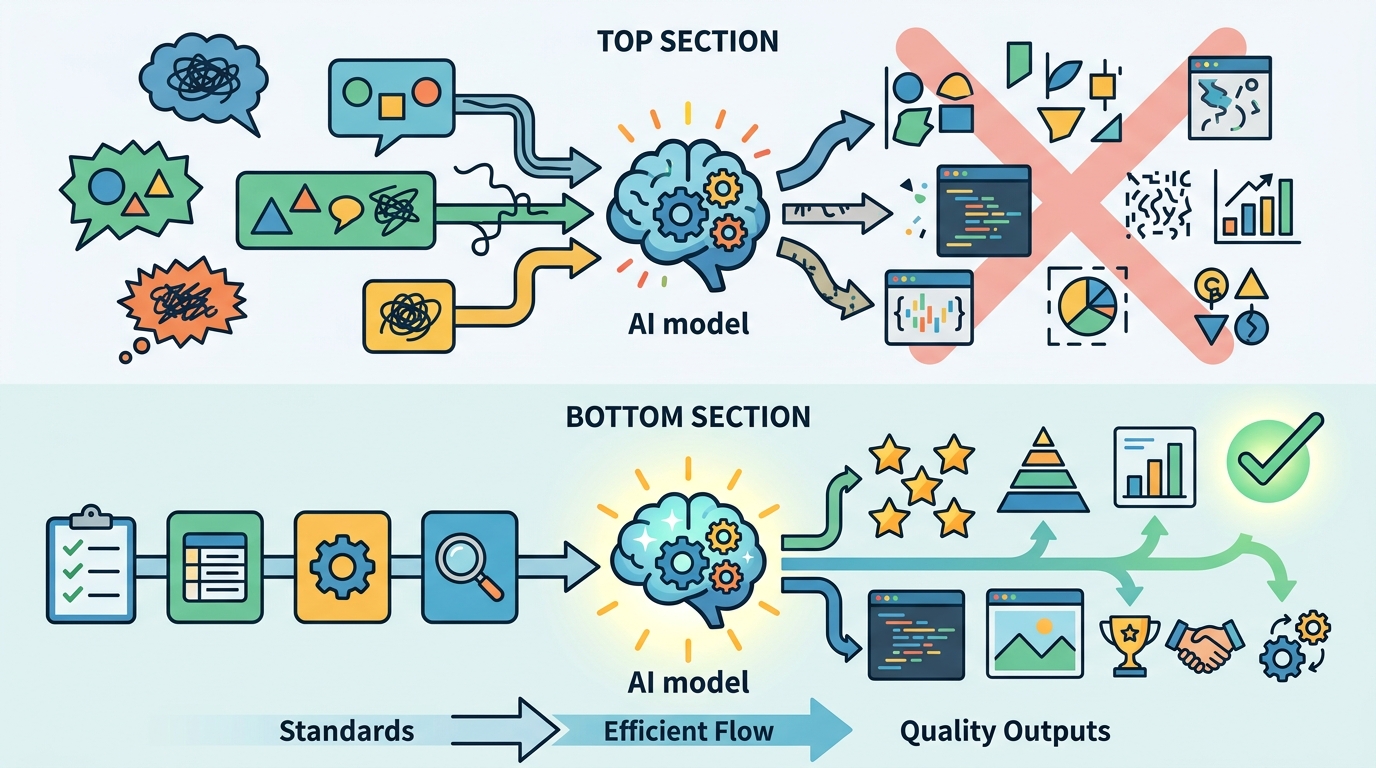

The chapter also links poor prompting to measurable waste. Vague instructions can cause longer outputs, extra retries, and more token consumption. In a product with millions of monthly interactions, that waste turns into real cloud spend and more energy use.
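To make the scale argument concrete, here is a back-of-the-envelope sketch. The function name and every number in it are hypothetical, picked only so the arithmetic is easy to follow. None of them come from the chapter or from any provider's price list.

```python
def monthly_waste_cost(wasted_tokens_per_call: int,
                       calls_per_month: int,
                       usd_per_million_tokens: float) -> float:
    """Monthly cost in USD of tokens that a tighter prompt would avoid."""
    wasted_tokens = wasted_tokens_per_call * calls_per_month
    return wasted_tokens / 1_000_000 * usd_per_million_tokens


# Hypothetical inputs, chosen only to make the arithmetic easy to follow.
cost = monthly_waste_cost(
    wasted_tokens_per_call=200,
    calls_per_month=5_000_000,
    usd_per_million_tokens=2.0,
)
print(f"${cost:,.2f} per month")  # $2,000.00 per month
```

The point is the shape of the formula, not the figure: waste per call is multiplied by call volume, so a small per-prompt inefficiency grows linearly with traffic.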

There is also a quality problem. When a prompt leaves out role, task, format, or constraints, the model fills gaps on its own. Sometimes that guess is fine. Often it produces answers that are too generic, too verbose, or simply off target.
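One way a team might catch those gaps before a prompt ships is a small lint check. The sketch below assumes prompts are stored as dictionaries keyed by the four parts named above. That storage format and the `missing_parts` helper are illustrative assumptions, not something the chapter prescribes.

```python
REQUIRED_PARTS = ("role", "task", "format", "constraints")


def missing_parts(prompt_spec: dict[str, str]) -> list[str]:
    """Return required parts that are absent or blank, in a fixed order."""
    return [p for p in REQUIRED_PARTS if not prompt_spec.get(p, "").strip()]


# A draft that names a task but leaves the rest for the model to guess.
draft = {"task": "Explain the refund policy.", "format": "  "}
print(missing_parts(draft))  # ['role', 'format', 'constraints']
```

A check like this says nothing about whether the prompt is good. It only flags the places where the model would be left to fill in the blanks on its own.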

- Prompt structure affects output length, accuracy, and consistency.

- Token waste grows fast when teams rely on trial-and-error prompting.

- High-stakes use cases need explicit instructions, not vague intent.

- Shared prompt patterns make audits and reviews easier.

This is where the chapter’s standards argument gets practical. A standard does not need to be rigid to be useful. It just needs to give teams a common way to describe the same thing, so prompts can be reviewed, tested, and improved without guesswork.

That view lines up with what prompt engineers at OpenAI, Anthropic, and Google AI have been saying in practice for a while: clear instructions usually beat clever wording. The chapter gives that instinct a more formal frame.

The ethics problem hiding inside prompts

Prompting is not just about getting better answers. It is also about responsibility. Tavakoli points out that poorly written prompts can create misinterpretation in high-stakes settings, which matters in healthcare, law, education, and public services where a model’s output can influence human decisions.

That is a big deal because prompt mistakes are often invisible. A model can sound confident while missing the user’s intent by a mile. If the prompt is underspecified, the failure may look like model hallucination when the real issue is human ambiguity.

For that reason, the chapter treats prompt literacy as a form of digital literacy. If people are going to work with AI systems every day, they need to understand how instructions shape behavior and where responsibility sits when things go wrong.

“The future of AI is not about replacing humans, it’s about augmenting human capabilities.” — Satya Nadella

That quote from Microsoft CEO Satya Nadella fits the chapter’s point well. Augmentation only works when the human side is clear enough for the machine to follow. Prompt standards are one way to make that relationship less accidental.

The ethical angle also changes how teams should think about prompt libraries, templates, and review workflows. If a prompt can affect safety, then it should be treated like other production artifacts: documented, tested, and versioned.

What a standard can actually fix

The chapter introduces the Prompt Anatomy Blueprint, a human-centered framework for structured prompt design. The name is new, but the underlying idea is familiar to anyone who has written good specs: define the task, define the context, define the output format, and define the constraints.
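As a rough illustration of that spec-like structure, the sketch below models a prompt as four labeled parts. The `StructuredPrompt` class and its field names are assumptions made for this example. The chapter's actual Blueprint may define its components differently.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class StructuredPrompt:
    """Hypothetical container for task, context, output format, and constraints."""
    task: str
    context: str
    output_format: str
    constraints: list[str] = field(default_factory=list)

    def render(self) -> str:
        """Join the parts into one prompt string with labeled sections."""
        lines = [
            f"Task: {self.task}",
            f"Context: {self.context}",
            f"Output format: {self.output_format}",
        ]
        if self.constraints:
            lines.append("Constraints:")
            lines.extend(f"- {c}" for c in self.constraints)
        return "\n".join(lines)


prompt = StructuredPrompt(
    task="Summarize the support ticket below.",
    context="The reader is a tier-2 support engineer.",
    output_format="Three bullet points, plain text.",
    constraints=["Do not include customer names.", "Stay under 80 words."],
)
print(prompt.render())
```

Because every prompt renders in the same order with the same labels, two prompts written by different teams can be compared part by part.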

That kind of structure can reduce the back-and-forth that makes AI tools feel flaky. It also makes prompts easier to compare across teams, which matters for organizations that want to measure quality instead of arguing about vibes.

Here is the part that matters operationally: standards make prompts testable. Once a prompt has a known structure, teams can run A/B tests, track token counts, and measure how often the model follows instructions.
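A minimal sketch of what that measurement could look like follows. It scores two prompt variants on instruction compliance and output length using logged responses. The sample outputs are made up for illustration, the JSON-with-`summary` rule is a hypothetical instruction, and the word-count tokenizer is a crude stand-in for a real one.

```python
import json


def rough_token_count(text: str) -> int:
    """Crude proxy: whitespace-separated words. Real tokenizers differ."""
    return len(text.split())


def follows_format(output: str) -> bool:
    """Hypothetical check: output must be a JSON object with a 'summary' key."""
    try:
        parsed = json.loads(output)
    except json.JSONDecodeError:
        return False
    return isinstance(parsed, dict) and "summary" in parsed


def score_variant(outputs: list[str]) -> dict[str, float]:
    """Compliance rate and mean rough token count for one prompt variant."""
    if not outputs:
        raise ValueError("need at least one output to score")
    compliant = sum(follows_format(o) for o in outputs)
    tokens = sum(rough_token_count(o) for o in outputs)
    return {
        "compliance_rate": compliant / len(outputs),
        "mean_tokens": tokens / len(outputs),
    }


# Made-up outputs standing in for logged model responses.
variant_a = ['{"summary": "Login fails after reset."}', "Sure! Here is a summary..."]
variant_b = ['{"summary": "Login fails after reset."}', '{"summary": "Refund issued."}']

print("A:", score_variant(variant_a))
print("B:", score_variant(variant_b))
```

In a real comparison the two lists would come from running each prompt variant against the same inputs, with far more than two samples per variant.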

- OpenAI’s prompting guidance emphasizes specificity and clear formatting.

- Anthropic’s prompt engineering docs push developers toward explicit role and task definition.

- Google’s Gemini prompting docs focus on instruction clarity and examples.

- Prompt Engineering Guide collects patterns that teams can adapt into repeatable workflows.

Those sources point in the same direction, even if they use different language. Good prompts are structured, specific, and easy to reuse. Tavakoli’s chapter adds the missing argument that this should be standardized, not left to individual style.

If you want a useful comparison, think about software engineering before and after unit tests became normal. Teams still wrote code before tests, but the practice changed once quality became measurable. Prompt standards could do something similar for AI work by turning prompt quality into something teams can inspect instead of guess at.

Why this matters for the next wave of AI tools

The chapter is part of a broader shift in how people use AI. Early adoption was all about novelty. Now the pressure is on reliability, cost control, and governance. That is why prompt engineering is moving from a clever trick to a documented workflow.

For product teams, the payoff is concrete. Better prompts can reduce retries, improve answer relevance, and cut the number of tokens sent to paid models. For enterprises, the gain may be even bigger because standards make it easier to train new staff and keep outputs consistent across departments.

My read is that prompt standards will matter most in organizations that already care about documentation. If your team has style guides, API contracts, and review checklists, prompt structure will fit right in. If your team still treats prompts like ad hoc messages, the chapter is a warning shot.

There is also a competitive angle. The teams that document prompt patterns now will have an easier time comparing models later, especially as companies mix systems from OpenAI, Anthropic, and Google DeepMind. Without a standard prompt format, model evaluation turns into a mess of inconsistent inputs.

That is why this chapter matters beyond academia. It is pushing the field toward a simple but demanding idea: if AI output depends on input quality, then prompt quality needs the same discipline we already expect from code, design, and documentation.

What teams should do next

If you are building with AI today, Tavakoli’s chapter points to a practical next step: stop treating prompts like disposable text. Write them like reusable assets. Give them a purpose, a structure, a test case, and a review owner.
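Those four attributes map naturally onto a small record type. The sketch below is one hypothetical shape for such an asset. The `PromptAsset` class, its fields, and the example values are assumptions for illustration only.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PromptAsset:
    """Hypothetical record for a reusable, reviewable prompt."""
    name: str
    version: str
    purpose: str
    template: str              # str.format placeholders, e.g. {ticket}
    review_owner: str
    test_input: dict[str, str]
    must_contain: str          # text the rendered test prompt should include

    def render(self, **values: str) -> str:
        """Fill the template with the given values."""
        return self.template.format(**values)

    def self_test(self) -> bool:
        """Render the stored test case and check the expected text is present."""
        return self.must_contain in self.render(**self.test_input)


asset = PromptAsset(
    name="ticket-summary",
    version="1.0.0",
    purpose="Summarize support tickets for tier-2 engineers.",
    template="Summarize this ticket in three bullet points:\n{ticket}",
    review_owner="support-platform-team",
    test_input={"ticket": "Customer cannot log in after password reset."},
    must_contain="password reset",
)
print(asset.self_test())  # True
```

The self-test here only verifies that the template renders as expected. Checking what a model does with the rendered prompt would be a separate step in a team's QA process.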

The specific prediction I would make is this: within the next year, more AI teams will add prompt templates, prompt QA checks, and internal prompt style guides to their release process. The teams that do it early will spend less time fixing avoidable model errors and more time improving the product itself.

So the real question is no longer whether prompt engineering matters. It is whether your team will keep improvising, or start treating prompts like part of the system that deserves standards.
