Safe Continual RL for Changing Real-World Systems

This paper studies how to keep RL controllers safe while they adapt to non-stationary systems, and shows why existing methods still fall short.

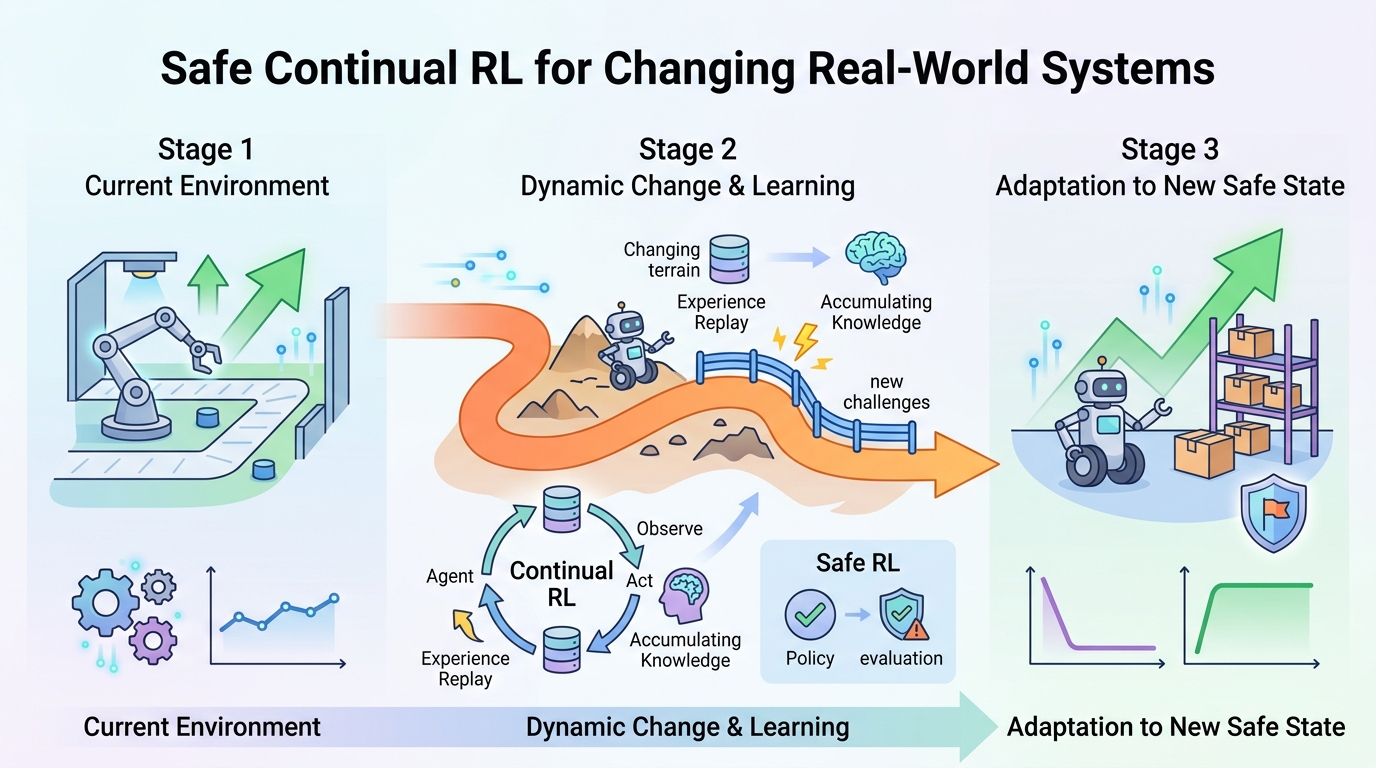

Reinforcement learning is attractive for control problems because it can learn directly from data when accurate physical models are missing. But in real deployments the environment can change over time, which breaks the common assumption that the system is stationary. This paper, "Safe Continual Reinforcement Learning in Non-stationary Environments", looks at what happens when you need both adaptation and safety at the same time.

The core message is simple: safe RL and continual RL each solve part of the problem, but their overlap is still poorly understood. The authors argue that if a controller is learning in a physical system, transient safety violations during adaptation are not acceptable. That makes the usual trade-off between learning quickly and staying safe much more than a theoretical concern.

What problem this paper is trying to fix

Most control-oriented RL methods assume the system behaves the same way throughout training and deployment. That is a bad fit for real-world settings where dynamics and operating conditions can shift unexpectedly. A controller that worked yesterday may face different failure modes today, and a policy that keeps improving in one regime can forget how to behave safely in another.

The paper focuses on two requirements that are often studied separately. Safe RL tries to keep the agent within safety constraints. Continual RL tries to keep the agent learning across tasks or changing conditions without catastrophic forgetting. The missing piece is a method that does both at once in non-stationary environments.

That matters for engineers because the failure mode is not just lower reward. In physical systems, unsafe exploration can mean broken equipment, wasted energy, service outages, or worse. The paper treats those as first-class constraints rather than side effects.
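To make "first-class constraint" concrete, here is the standard constrained-MDP way of writing a safe RL objective. The abstract does not state the paper's exact formulation, so the notation below is an assumption: $r$ is the reward, $c$ is a safety cost, $d$ is a cost budget, and $\gamma$ is the discount factor.

```latex
\max_{\pi} \; J_r(\pi) = \mathbb{E}_{\pi}\!\left[\sum_{t=0}^{\infty} \gamma^{t}\, r(s_t, a_t)\right]
\quad \text{subject to} \quad
J_c(\pi) = \mathbb{E}_{\pi}\!\left[\sum_{t=0}^{\infty} \gamma^{t}\, c(s_t, a_t)\right] \le d
```

Under non-stationarity, the dynamics that generate $s_t$ change over time, so a policy that satisfies $J_c(\pi) \le d$ in one regime is not guaranteed to satisfy it in the next.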

How the study works in plain English

This is primarily a systematic study rather than a single new algorithm. The authors introduce three benchmark environments designed to capture safety-critical continual adaptation. They then evaluate representative approaches drawn from safe RL, continual RL, and combinations of the two.

In plain terms, they are asking: when the environment changes, can existing methods adapt without forgetting too much, and can they do it while staying inside safety limits? By comparing methods across these benchmarks, the paper maps out the tension between the two goals instead of assuming one family of techniques will solve both.
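The two questions above can each be turned into a number. The sketch below is illustrative only: the abstract does not say which metrics the paper uses, so `average_forgetting` and `violation_rate` are hypothetical helpers based on common definitions (drop in return on earlier regimes, and the fraction of episodes whose cumulative cost exceeds a limit).

```python
from typing import Sequence


def average_forgetting(best_returns: Sequence[float],
                       final_returns: Sequence[float]) -> float:
    """Mean drop in return on earlier regimes (hypothetical metric).

    best_returns[i]  : best return reached on regime i while training on it
    final_returns[i] : return on regime i after all later regimes were learned
    Improvements are clipped to zero so they do not hide forgetting elsewhere.
    """
    if not best_returns or len(best_returns) != len(final_returns):
        raise ValueError("need two non-empty sequences of equal length")
    drops = [max(0.0, b - f) for b, f in zip(best_returns, final_returns)]
    return sum(drops) / len(drops)


def violation_rate(episode_costs: Sequence[float], cost_limit: float) -> float:
    """Fraction of episodes whose cumulative safety cost exceeds cost_limit."""
    if not episode_costs:
        raise ValueError("need at least one episode")
    violations = sum(1 for cost in episode_costs if cost > cost_limit)
    return violations / len(episode_costs)


if __name__ == "__main__":
    # Regime 0 lost 3.0 of return, regime 1 improved (counted as 0 forgetting).
    print(average_forgetting([10.0, 8.0], [7.0, 9.0]))   # 1.5
    # Two of four episodes exceed the cost limit of 10.
    print(violation_rate([0.0, 5.0, 12.0, 30.0], 10.0))  # 0.5
```

Tracking both numbers side by side is what exposes the tension: a method can look good on one while quietly failing the other.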

The paper also examines regularization-based strategies. These are methods that try to reduce how much a model changes when new data arrives, which can help preserve previous behavior. In a continual learning setting, that can reduce catastrophic forgetting. In a safety setting, the hope is that more conservative updates will avoid dangerous swings in behavior.

But the authors do not present regularization as a silver bullet. Their framing suggests these techniques can partially improve the trade-off, not eliminate it. That distinction is important for anyone thinking about deploying RL in a live control loop.
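As a rough illustration of what a regularization-based strategy looks like, the sketch below adds a quadratic penalty that pulls parameters back toward values learned earlier. The abstract does not name the regularizers the paper studies, so this is an assumed, generic example in the style of an importance-weighted L2 penalty; `quadratic_anchor_penalty`, `regularized_loss`, and the `strength` parameter are hypothetical names.

```python
from typing import Sequence


def quadratic_anchor_penalty(params: Sequence[float],
                             anchor_params: Sequence[float],
                             importance: Sequence[float],
                             strength: float = 1.0) -> float:
    """Penalty that grows as params drift away from anchor_params.

    importance[i] says how much parameter i mattered for earlier behavior;
    a larger weight makes that parameter more expensive to change.
    """
    if not (len(params) == len(anchor_params) == len(importance)):
        raise ValueError("all inputs must have the same length")
    weighted_sq = sum(w * (p - a) ** 2
                      for p, a, w in zip(params, anchor_params, importance))
    return 0.5 * strength * weighted_sq


def regularized_loss(task_loss: float,
                     params: Sequence[float],
                     anchor_params: Sequence[float],
                     importance: Sequence[float],
                     strength: float = 1.0) -> float:
    """Loss on the new regime plus the anchor penalty (assumed combination)."""
    return task_loss + quadratic_anchor_penalty(
        params, anchor_params, importance, strength)


if __name__ == "__main__":
    # No drift from the anchor means no penalty.
    print(quadratic_anchor_penalty([1.0, 2.0], [1.0, 2.0], [1.0, 1.0]))  # 0.0
    # Drift of 2.0 on an important parameter: 0.5 * 2.0 * 3.0 * 4.0 = 12.0
    print(quadratic_anchor_penalty([2.0, 0.0], [0.0, 0.0], [3.0, 1.0], 2.0))
```

The `strength` knob is where the trade-off lives: a large value preserves old behavior but slows adaptation to the new regime, and a small value does the opposite.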

What the paper actually shows

The main empirical finding is that there is a real tension between maintaining safety constraints and avoiding catastrophic forgetting under non-stationary dynamics. The paper reports that existing methods generally fail to achieve both objectives simultaneously across the studied settings.

That is a strong result, but the abstract does not provide benchmark numbers, success rates, or exact constraint-violation statistics. So the right takeaway is qualitative rather than numeric: the problem is hard, current methods are not enough, and the benchmarks expose that gap clearly.

The paper also says the regularization-based strategies they examine can partially mitigate the trade-off. The abstract does not spell out which specific regularizers are used or how much improvement they deliver, so the safest reading is that they offer some stability benefits while still leaving open the core challenge of safe adaptation over time.

- Three benchmark environments were introduced for safety-critical continual adaptation.
- Representative safe RL, continual RL, and hybrid approaches were evaluated.
- Existing methods generally did not satisfy safety and anti-forgetting goals together.
- Regularization helped somewhat, but only partially.

Why developers should care

If you build learning-based controllers for robots, industrial systems, autonomous agents, or other physical environments, this paper is a warning against treating safety and adaptation as separate engineering problems. A controller that is safe only before the environment changes is not enough. A controller that adapts well but occasionally violates constraints is also not enough.

The practical value of the paper is that it gives the field a clearer testing ground. Benchmarks matter because they shape what gets optimized and what gets ignored. By introducing environments that specifically stress both continual adaptation and safety, the authors help surface failure modes that standard stationary benchmarks can hide.

For practitioners, the paper also suggests a design mindset: conservative updates and regularization may help, but they are unlikely to fully solve safe continual RL on their own. If your system has hard safety requirements, you should assume that non-stationarity will complicate both training and deployment, and plan for monitoring, fallback behavior, and explicit safety checks.
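One way to picture "monitoring, fallback behavior, and explicit safety checks" is a thin wrapper around the learned policy. This is a minimal sketch under stated assumptions, not something the paper describes: `ShieldedController`, its `is_safe` check, and the toy tank example are all hypothetical, and a real system would need a verified fallback and a trustworthy safety check.

```python
from typing import Callable, Generic, TypeVar

S = TypeVar("S")  # state type
A = TypeVar("A")  # action type


class ShieldedController(Generic[S, A]):
    """Runs a learned policy but overrides it when an explicit check fails."""

    def __init__(self,
                 learned_policy: Callable[[S], A],
                 fallback_policy: Callable[[S], A],
                 is_safe: Callable[[S, A], bool]) -> None:
        self.learned_policy = learned_policy
        self.fallback_policy = fallback_policy
        self.is_safe = is_safe
        self.steps = 0
        self.interventions = 0

    def act(self, state: S) -> A:
        """Return the learned action if it passes the check, else the fallback."""
        self.steps += 1
        proposed = self.learned_policy(state)
        if self.is_safe(state, proposed):
            return proposed
        self.interventions += 1
        return self.fallback_policy(state)

    def intervention_rate(self) -> float:
        """Share of steps where the fallback took over (a drift signal)."""
        return self.interventions / self.steps if self.steps else 0.0


if __name__ == "__main__":
    LEVEL_LIMIT = 10  # toy tank: level plus inflow must not exceed this
    controller = ShieldedController(
        learned_policy=lambda level: 3,   # stand-in for an RL policy
        fallback_policy=lambda level: 0,  # conservative: stop the inflow
        is_safe=lambda level, inflow: level + inflow <= LEVEL_LIMIT,
    )
    print(controller.act(5))               # 3 (5 + 3 <= 10, accepted)
    print(controller.act(8))               # 0 (8 + 3 > 10, fallback used)
    print(controller.intervention_rate())  # 0.5
```

A rising intervention rate is a useful monitoring signal in its own right: it suggests the environment has shifted away from the conditions the learned policy was trained on.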

Limitations and open questions

The paper is honest about the state of the field: the intersection of safe RL and continual RL remains comparatively unexplored. That means the benchmarks and evaluation in this work are valuable, but they are not a finished solution.

One limitation of this summary is that it draws only on the abstract, which describes the evaluation at a high level and gives no concrete metrics, benchmark sizes, or implementation details. So while the paper clearly identifies the trade-off, the abstract alone does not tell us which method is best in which setting or how close any approach gets to a deployable solution.

The bigger open question is how to build controllers that can keep learning for the lifetime of a system without ever taking unsafe actions during adaptation. The paper closes by pointing toward that challenge directly, framing safe, resilient learning-based control in changing environments as an open research direction rather than a solved problem.

For engineers, that is the useful takeaway: if your application lives in the real world, stationarity is an assumption you probably cannot trust. This paper shows that once safety is non-negotiable, continual learning becomes a much harder systems problem, and the current toolbox still does not fully cover it.
