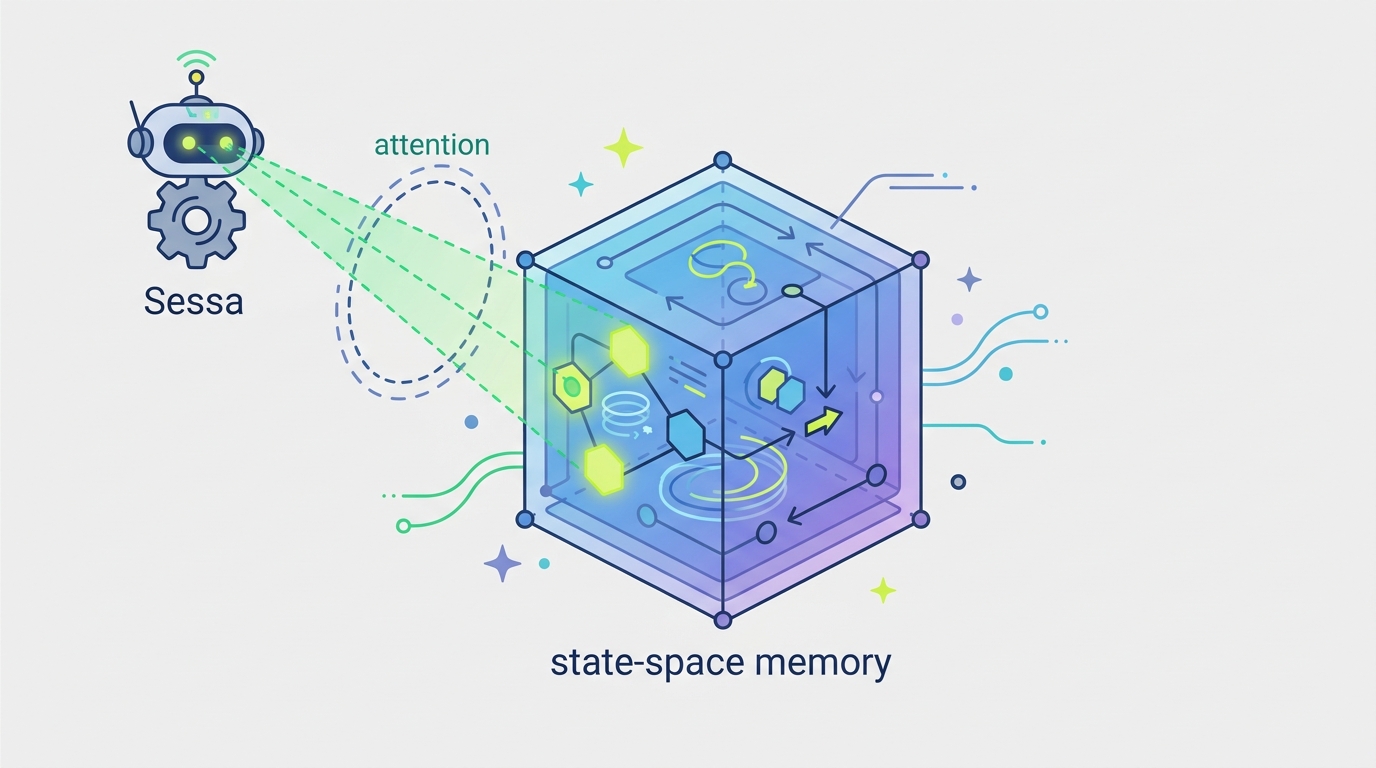

Sessa puts attention inside state-space memory

Sessa places attention inside a recurrent state-space feedback path to improve long-context recall, with power-law memory tails in theory and the strongest results on the paper’s long-context benchmarks.

Modern sequence models usually force you to choose between two imperfect tools. Transformers can look back at context directly, but when retrieval is not sharp, attention spreads each token’s influence thin; state-space models propagate information efficiently, but their long-range memory can fade fast. Sessa (Selective State Space Attention) tries to break that tradeoff by putting attention inside a feedback path, so a model can both retrieve and keep updating information over time.

For developers building long-context systems, the practical question is simple: how do you keep old tokens relevant without paying the full cost of a giant attention window or watching memory decay into irrelevance? This paper argues that the answer may be a decoder that supports recurrent many-path aggregation within a layer, rather than relying on a single read from the past or a single feedback chain.

What problem this paper is trying to fix

The paper starts from a familiar failure mode in attention-based models. When retrieval is not sharp and attention becomes diffuse over an effective support set of size S_eff(t), the influence of any one token gets diluted. In the abstract’s framing, that dilution typically scales as O(1/S_eff(t)), and in full-prefix settings, where attention spreads over the whole history, it can fall to O(1/ℓ) for a token ℓ steps back.
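A minimal sketch of the dilution argument, assuming the simplest diffuse case: all attention scores are equal, so softmax spreads the mass uniformly over S_eff tokens. The helper names are illustrative, not from the paper.

```python
import math

def softmax(scores):
    """Numerically stable softmax over a list of scores."""
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def diffuse_weight(support_size):
    """Weight one token receives when attention is spread evenly over
    `support_size` tokens: every score is equal, so nothing stands out."""
    return softmax([0.0] * support_size)[0]

# Diffuse case: the weight is 1/S_eff, so it shrinks as the effective
# support grows (in a full prefix, S_eff grows with the lag).
for s_eff in (10, 100, 1000):
    print(s_eff, diffuse_weight(s_eff))

# Sharp retrieval: one token scores far above the other 99, so it keeps
# almost all of the attention mass despite the competition.
sharp = softmax([10.0] + [0.0] * 99)[0]
print(sharp)
```

The contrast between the two cases is the whole point: old tokens only stay influential under plain attention if the model can score them sharply.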

That means the farther back a token is, the less it matters unless the model can focus very precisely on it. In practice, this is one reason long-context behavior can be brittle: the model may technically “see” the token, but its contribution is too spread out to matter much.

On the other side, structured state-space models process sequences recurrently through an explicit feedback path. Selective variants such as Mamba make that feedback input-dependent, which helps, but the abstract says long-range sensitivity still decays exponentially with lag when “freeze time” (stretches where the state is carried forward with little or no decay) cannot be sustained over long intervals.
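A minimal sketch of that exponential decay, assuming a scalar linear recurrence h_t = a_t·h_{t-1} + x_t with per-step factors 0 < a_t ≤ 1. In Mamba-style models the factor is input-dependent (roughly exp(Δ_t·A) with A < 0, so a small step size Δ_t pushes it toward 1); here the factors are simply passed in. The function name is hypothetical, and reading “freeze time” as stretches with a_t ≈ 1 is an interpretation, not the paper’s definition.

```python
def sensitivity(decays, lag):
    """Influence of the input `lag` steps back on the current state in a
    scalar linear recurrence h_t = a_t * h_{t-1} + x_t.

    `decays` holds the per-step factors a_t, oldest first, with the last
    element belonging to the current step. The influence is the product
    of the most recent `lag` factors (an empty product, 1.0, for lag 0)."""
    if lag > len(decays):
        raise ValueError("lag exceeds the number of recorded steps")
    prod = 1.0
    for a in decays[len(decays) - lag:]:
        prod *= a
    return prod

# Constant forgetting (a_t = 0.9 every step): influence is 0.9**lag,
# i.e. exponential decay in the lag.
steady = [0.9] * 200
print(sensitivity(steady, 10), sensitivity(steady, 100))

# Gating that "freezes" the last 100 steps (a_t = 1.0): nothing is lost
# inside the frozen stretch, but anything older still pays the
# exponential price for the unfrozen steps it had to cross.
gated = [0.9] * 100 + [1.0] * 100
print(sensitivity(gated, 100), sensitivity(gated, 150))
```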

So the paper is trying to fix a structural limitation in existing architectures. Transformers retrieve from the past in a single read. State-space models propagate information through a single feedback chain. Sessa is positioned as a way to combine the strengths of both while avoiding their main weaknesses.

How Sessa works in plain English

The core idea is to place attention inside a feedback path. That sounds abstract, but the intuition is straightforward: instead of treating attention as a one-off lookup over history, Sessa uses it as part of the recurrent mechanism that carries information forward.

The paper describes this as enabling “recurrent many-path aggregation within a layer.” In plain terms, information can be collected and mixed through multiple routes as the sequence evolves, rather than being forced through a single memory channel. That gives the model more flexibility in how it preserves and routes past signals.
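As a rough illustration of “many paths” (a toy of our own, not the paper’s architecture), assume each state in a layer can feed every later state through the attention-in-feedback path. The sketch counts the distinct routes from the first position to each later one and compares them with a single recurrent chain; the function name and the fully connected graph are assumptions.

```python
def count_paths(horizon, fully_connected=True):
    """Number of distinct routes from position 0 to each later position.

    fully_connected=True: toy "attention in the feedback path", where every
    earlier state can feed every later state within the layer.
    fully_connected=False: a single recurrent chain (t-1 -> t only)."""
    paths = [1]  # one trivial route from position 0 to itself
    for t in range(1, horizon + 1):
        if fully_connected:
            paths.append(sum(paths))   # any earlier state can be the last hop
        else:
            paths.append(paths[-1])    # only the previous state feeds forward
    return paths

print(count_paths(5))                         # [1, 1, 2, 4, 8, 16]
print(count_paths(5, fully_connected=False))  # [1, 1, 1, 1, 1, 1]
```

The route count doubles with every step, so under normalized attention each individual route carries little weight; what matters for memory is how much total influence survives once all of those routes are summed, which is what the abstract’s memory-tail claims are about.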

Under the assumptions stated in the abstract, this design admits a power-law memory tail in lag ℓ of order O(ℓ^-β) for 0 < β < 1. That matters because such a tail decays asymptotically more slowly than 1/ℓ, so old information can remain influential for longer than in the diffuse-attention regime described earlier.
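To see how much a power-law tail with 0 < β < 1 retains compared with 1/ℓ and with exponential decay, the sketch tabulates three idealized tails at growing lags. The values β = 0.5 and a = 0.99 are arbitrary illustrative choices, not parameters from the paper, and every tail’s leading constant is set to 1.

```python
def decay_profiles(lag, beta=0.5, a=0.99):
    """Compare three idealized memory tails at a given lag.
    beta and a are illustrative values, not numbers from the paper."""
    return {
        "power_law": lag ** -beta,        # O(l^-beta), 0 < beta < 1
        "diffuse_attention": 1.0 / lag,   # O(1/l)
        "exponential": a ** lag,          # a^l with a < 1
    }

for lag in (10, 100, 1_000, 10_000):
    p = decay_profiles(lag)
    print(lag, {name: f"{value:.2e}" for name, value in p.items()})
```

With a = 0.99 the exponential tail is actually the largest at lags 10 and 100, but by lag 1,000 it has fallen below both other curves, while the ratio between the power-law and 1/ℓ tails keeps growing as ℓ^(1-β).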

The abstract also says this rate is tight in an explicit diffuse uniform-routing setting, where the influence is Θ(ℓ^-β). In other words, the paper is not just claiming a loose upper bound; it says the scaling behavior is matched by a concrete routing setup under the same assumptions.
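For intuition about why diffuse, uniform routing can still yield a power-law tail, consider a toy scalar model of our own (not the paper’s proof or assumptions): each state adds a uniform average of all earlier states, scaled by a feedback gain 0 < γ < 1. Writing g_t for the influence of the first input on the state t steps later (so the lag is ℓ = t), the influence obeys:

```latex
\begin{aligned}
g_0 &= 1, \qquad g_t = \frac{\gamma}{t}\sum_{j=0}^{t-1} g_j \quad (t \ge 1), \qquad G_t = \sum_{j=0}^{t} g_j,\\
G_t &= G_{t-1} + g_t = G_{t-1}\left(1 + \frac{\gamma}{t}\right)
\;\Rightarrow\;
G_t = \prod_{k=1}^{t}\left(1 + \frac{\gamma}{k}\right)
= \frac{\Gamma(t+1+\gamma)}{\Gamma(1+\gamma)\,\Gamma(t+1)}
\sim \frac{t^{\gamma}}{\Gamma(1+\gamma)},\\
g_t &= \frac{\gamma}{t}\,G_{t-1} \sim \frac{\gamma}{\Gamma(1+\gamma)}\,t^{\gamma-1}
= \Theta\!\left(t^{-\beta}\right), \qquad \beta = 1-\gamma \in (0,1).
\end{aligned}
```

A single diffuse read with no feedback would give g_t = 1/t instead; in this toy, the feedback gain γ is what lowers the exponent from 1 to 1 - γ. The paper’s tightness result is stated under its own assumptions, which the abstract does not spell out.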

What the paper actually shows

There are two kinds of evidence in the abstract: theoretical claims and empirical results. On the theory side, the paper claims Sessa can realize regimes with power-law memory tails, and that these decay more slowly than the O(1/ℓ) behavior associated with old tokens in full-prefix attention settings.

It also claims that, under the same conditions, only Sessa among the compared model classes realizes flexible selective retrieval, including non-decaying profiles. That is a strong statement about expressivity: the architecture is meant not just to remember longer, but to support different retention profiles depending on the task.
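Different retention profiles can be illustrated with a toy generalization of the same idea, assuming a scalar state s_t = x_t + γ·Σ_{j<t} α_{t,j}·s_j, where α_{t,j} are attention weights over earlier states and γ is a feedback gain. This is our simplification, not the paper’s layer or its definition of selective retrieval; the routing functions and names are hypothetical. Changing only the routing and the gain yields power-law, exponential, and non-decaying influence from the first token.

```python
def influence(routing, gamma, horizon):
    """Toy recurrence (not Sessa's actual layer):
        s_t = x_t + gamma * sum_{j<t} routing(t)[j] * s_j
    Returns g where g[t] = d s_t / d x_0, the influence of the first input
    on the state t steps later."""
    g = [1.0]  # s_0 = x_0
    for t in range(1, horizon + 1):
        weights = routing(t)  # attention weights over states 0..t-1
        g.append(gamma * sum(w * g_j for w, g_j in zip(weights, g)))
    return g

def uniform(t):
    """Diffuse routing: equal weight on every earlier state."""
    return [1.0 / t] * t

def previous_only(t):
    """Sharp routing: all weight on the immediately preceding state."""
    return [0.0] * (t - 1) + [1.0]

power_law = influence(uniform, 0.5, 400)
exponential = influence(previous_only, 0.9, 400)
non_decaying = influence(previous_only, 1.0, 400)

for lag in (10, 100, 400):
    print(lag, power_law[lag], exponential[lag], non_decaying[lag])
```

In this toy, uniform routing with γ = 0.5 gives a tail close to ℓ^(-0.5), routing everything to the previous state with γ = 0.9 collapses into an ordinary exponential chain, and the same routing with γ = 1 preserves the first token’s influence indefinitely.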

On the empirical side, the abstract says that under matched architectures and training budgets, Sessa achieves the strongest performance on the paper’s long-context benchmarks while remaining competitive with Transformer- and Mamba-style baselines on short-context language modeling. The abstract does not provide the benchmark names or any concrete numeric scores, so there are no published numbers here to compare.

That limitation matters. Without the full paper, you can’t tell how large the long-context gains are, how the benchmarks were constructed, or whether the advantage depends on specific hyperparameters. But the claim is still useful: the model is presented as improving long-context behavior without obviously sacrificing short-context language modeling.

Why developers should care

If you build retrieval-heavy assistants, code agents, summarizers, or any system that has to track state across long inputs, the architecture question is not academic. You want memory that is both selective and stable. A model that forgets too quickly loses context; a model that attends too diffusely wastes capacity on noise.

Sessa is interesting because it tries to move beyond the usual either/or choice. By embedding attention into recurrent state-space feedback, it suggests a path toward models that can keep useful details alive for longer while still deciding, based on input, what deserves to be carried forward.

For engineers, the practical takeaway is not “replace everything with Sessa tomorrow.” The abstract does not show deployment costs, latency numbers, or implementation complexity. But it does point to a design direction worth watching if your workload depends on long-range dependency tracking rather than just next-token fluency.

Limitations and open questions

The source material gives a promising picture, but it leaves several important questions unanswered. The abstract does not include benchmark names, dataset details, parameter counts, runtime costs, or memory usage. It also does not explain how Sessa compares on efficiency at scale, which is crucial if you care about production inference.

There is also a gap between theoretical memory behavior and real-world model quality. A power-law tail is interesting, but it does not automatically guarantee better reasoning, better retrieval, or better downstream task performance across the board. The paper’s empirical claim is encouraging, but the abstract alone is not enough to judge robustness.

Still, the central idea is clear: if attention is too diffuse and state-space memory decays too fast, maybe the answer is to let attention participate in the recurrence itself. That gives Sessa a distinct place in the design space between Transformer-style reading and Mamba-style propagation.

- Transformers: direct retrieval, but diffuse attention can dilute old information.

- Selective state-space models: efficient recurrent propagation, but long-range sensitivity can decay exponentially.

- Sessa: attention inside feedback, aiming for selective retrieval plus slower memory decay.

For now, that makes Sessa a paper to watch if you care about long-context architecture design. The abstract suggests a meaningful theoretical advance and a promising empirical result, but the missing details mean practitioners should treat it as an early signal rather than a settled answer.
