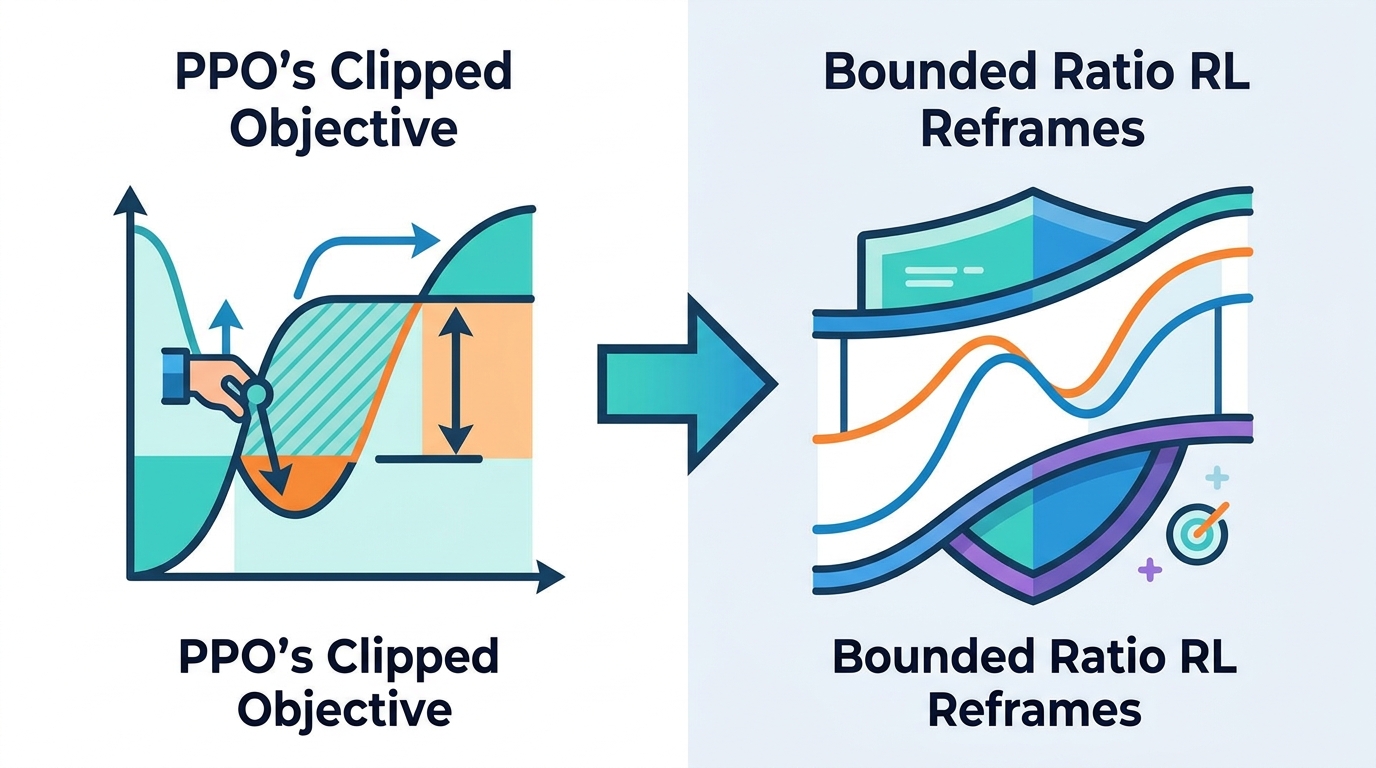

Bounded Ratio RL Reframes PPO's Clipped Objective

BRRL gives PPO a cleaner theory, with BPO and GBPO aiming for more stable policy updates in control and LLM fine-tuning.

Reinforcement learning practitioners already know PPO works well in practice, but its clipped objective has long read as a useful heuristic whose theoretical justification is loose. Bounded Ratio Reinforcement Learning tries to close that gap by explicitly bounding the policy-update ratio, then deriving an optimization method from that setup.

The practical pitch is simple: if you care about stable policy updates, especially in on-policy RL or LLM fine-tuning, this paper argues you can get a more principled version of the same idea PPO is chasing. The authors claim the resulting methods, BPO and GBPO, generally match or outperform PPO and GRPO in stability and final performance, but the paper is careful to frame this as an empirical observation rather than a universal guarantee.

What problem this paper is trying to fix

PPO has become the default on-policy RL algorithm in many settings because it scales well and tends to behave robustly across domains. The issue, as the paper sees it, is that PPO’s clipped objective is not a clean expression of the trust-region ideas that originally motivated it. In other words, the algorithm is popular, but the mathematical bridge between the theory and the implementation has been shaky.
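For reference, the standard PPO clipped surrogate can be sketched in a few lines of plain Python. This is the familiar PPO objective, not anything specific to BRRL; the function name and the default `clip_eps=0.2` are illustrative choices, and a real implementation would operate on batched tensors with automatic differentiation.

```python
import math

def ppo_clip_objective(logp_new, logp_old, advantages, clip_eps=0.2):
    """Mean PPO clipped surrogate over a batch (to be maximized).

    logp_new / logp_old: log-probabilities of the sampled actions under the
    new and old policies. clip_eps is an illustrative default.
    """
    terms = []
    for lp_new, lp_old, adv in zip(logp_new, logp_old, advantages):
        # Policy ratio pi_new(a|s) / pi_old(a|s), computed from log-probs.
        ratio = math.exp(lp_new - lp_old)
        # Clip the ratio into [1 - eps, 1 + eps].
        clipped_ratio = min(max(ratio, 1.0 - clip_eps), 1.0 + clip_eps)
        # Take the pessimistic (smaller) of the unclipped and clipped terms.
        terms.append(min(ratio * adv, clipped_ratio * adv))
    return sum(terms) / len(terms)
```

The `min` between the clipped and unclipped terms is the part that is hard to read as a trust-region constraint: it removes the incentive to push the ratio past the clip range, but it does not actually prevent the ratio from leaving it.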

That disconnect matters for engineers because RL systems are often fragile. If you are tuning policies for robotics, games, or language models, you want updates that improve performance without blowing up training. A heuristic that works most of the time is useful, but a method with a clearer performance guarantee is easier to reason about, debug, and extend.

The paper positions this as more than a cosmetic theory upgrade. It argues that the clipped PPO loss can be interpreted through a new lens, and that a more explicit bounded-ratio formulation can connect trust region policy optimization and the Cross-Entropy Method (CEM). That connection is interesting because it suggests these are not isolated tricks, but related ways of controlling how aggressively a policy moves.

How the method works in plain English

The core idea behind Bounded Ratio Reinforcement Learning, or BRRL, is to formulate policy optimization as a regularized and constrained problem where the policy ratio is explicitly bounded. Instead of starting from PPO’s clip and trying to justify it afterward, the authors start from a principled optimization problem and derive an analytical optimal solution.
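One generic way to write a regularized, ratio-bounded problem of this kind is sketched below. The abstract does not give the exact objective, so the regularizer $\Omega$, its weight $\lambda$, and the bounds $\epsilon_{\text{low}}$ and $\epsilon_{\text{high}}$ are placeholder symbols chosen for illustration, not the paper's notation.

```latex
\max_{\pi}\;
  \mathbb{E}_{s \sim d^{\pi_{\text{old}}},\, a \sim \pi_{\text{old}}(\cdot \mid s)}
  \big[ r_{\pi}(s,a)\, A^{\pi_{\text{old}}}(s,a) \big]
  \;-\; \lambda\, \Omega(\pi, \pi_{\text{old}})
\quad \text{s.t.} \quad
  1 - \epsilon_{\text{low}} \;\le\; r_{\pi}(s,a) \;\le\; 1 + \epsilon_{\text{high}},
\qquad
  r_{\pi}(s,a) = \frac{\pi(a \mid s)}{\pi_{\text{old}}(a \mid s)}.
```

The contrast with PPO is that the bound on $r_{\pi}$ appears here as an explicit constraint of the problem rather than as a clipping operation applied inside the loss.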

That analytical solution matters because the paper proves that it guarantees monotonic performance improvement. In plain English: under the paper’s assumptions, each idealized update is guaranteed not to make the policy worse. This is the kind of property RL researchers like because it turns a heuristic update rule into something with a formal safety net.

Of course, real policies are parameterized, so you cannot usually use the exact analytic solution directly. To handle that, the authors introduce Bounded Policy Optimization, or BPO. BPO minimizes an advantage-weighted divergence between the current policy and the analytic optimal solution from BRRL. That makes BPO the practical algorithmic counterpart to the theory.

The paper also establishes a lower bound on the expected performance of the resulting policy in terms of the BPO loss function. That gives practitioners another way to think about optimization: reducing the loss is not just a training signal, it is tied to a bound on expected performance.

- BRRL: the theoretical framework with bounded policy ratios

- BPO: the practical optimization algorithm for parameterized policies

- GBPO: a group-relative extension for LLM fine-tuning

What the paper actually shows

On the theory side, the paper makes three main claims. First, it derives an analytical optimal solution for the bounded-ratio formulation. Second, it proves monotonic performance improvement for that solution. Third, it provides a lower bound on expected performance for the learned policy through the BPO loss.

On the interpretation side, the authors say BRRL offers a new theoretical lens for understanding why the PPO loss works as well as it does. That is a notable claim because PPO has long been one of those algorithms that people use because it is effective, even if the derivation feels less elegant than the results.

The paper also says BRRL connects trust region policy optimization and the Cross-Entropy Method. That is a useful framing for developers who move between classic RL and other optimization routines, because it suggests a shared structure around controlled policy movement rather than a grab bag of unrelated techniques.
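For readers less familiar with the Cross-Entropy Method, a minimal one-dimensional sketch is shown here. It is the textbook CEM loop (sample, keep the elites, refit the sampling distribution), not the paper's construction; the function name, the Gaussian sampling distribution, and all default values are assumptions made for illustration.

```python
import random
import statistics

def cross_entropy_method(score_fn, mean=0.0, std=2.0,
                         n_samples=50, n_elite=10, n_iters=20, seed=0):
    """Textbook 1-D CEM: maximize score_fn by refitting a Gaussian to elites."""
    rng = random.Random(seed)
    for _ in range(n_iters):
        # Sample candidates from the current Gaussian.
        samples = [rng.gauss(mean, std) for _ in range(n_samples)]
        # Keep the highest-scoring candidates (the elites).
        elites = sorted(samples, key=score_fn, reverse=True)[:n_elite]
        # Refit the Gaussian to the elites; the small constant keeps std > 0.
        mean = statistics.mean(elites)
        std = statistics.pstdev(elites) + 1e-6
    return mean

# Usage: search for the maximizer of a simple concave score (peak at x = 3).
best = cross_entropy_method(lambda x: -(x - 3.0) ** 2)
```

Like a trust-region step, each CEM iteration moves the sampling distribution only as far as the elite samples justify, which is the kind of controlled movement the paper's framing points at.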

For experiments, the paper reports evaluations of BPO across MuJoCo, Atari, and complex IsaacLab environments, including Humanoid locomotion. It also evaluates GBPO on LLM fine-tuning tasks. The abstract does not provide benchmark numbers, so there are no concrete scores to compare here. What it does say is that BPO and GBPO generally match or outperform PPO and GRPO in stability and final performance.

That wording matters. “Generally match or outperform” is not the same as claiming a clean sweep across every task, and the abstract does not give enough detail to know where the gains are largest, how consistent they are, or what the variance looks like. So the safe read is that the method looks promising across a mix of classic control, Atari, robotics, and language-model settings.

Why developers should care

If you are building RL systems, the most practical value here is not just a new acronym. It is the possibility of replacing a widely used heuristic with something that has a clearer optimization story and a stronger stability narrative. That can matter when you are trying to keep training runs from collapsing, especially in large-scale or expensive environments.

For LLM fine-tuning, the GBPO extension is the part to watch. The paper explicitly extends the framework to group-relative optimization, which suggests it is aiming at the same territory as methods like GRPO. If you are working on alignment or policy optimization for language models, a method that preserves the spirit of conservative updates while giving a tighter theoretical basis could be attractive.
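As background on what "group-relative" means, here is a minimal sketch of the GRPO-style advantage computation: several responses are sampled for the same prompt, and each response's reward is normalized against its own group. This illustrates the baseline GBPO is compared against, not the GBPO loss itself, which the abstract does not spell out; the function name and the `eps` constant are illustrative.

```python
import statistics

def group_relative_advantages(rewards, eps=1e-8):
    """Normalize rewards within one group of responses to the same prompt."""
    mean = statistics.mean(rewards)
    std = statistics.pstdev(rewards)
    # Center on the group mean and scale by the group standard deviation;
    # eps avoids division by zero when all rewards in the group are equal.
    return [(r - mean) / (std + eps) for r in rewards]

# Usage: four sampled responses to one prompt, scored 1.0 or 0.0.
advantages = group_relative_advantages([1.0, 0.0, 0.0, 1.0])
```

Because the baseline comes from the group itself, no separate value network is needed, which is a large part of why this style of update is popular for LLM fine-tuning.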

There are still open questions, though. The abstract does not tell us how much extra complexity BPO adds, how sensitive it is to hyperparameters, or whether the analytic framing changes the cost of implementation. It also does not show benchmark tables, ablations, or failure cases, so it is hard to judge where the method is genuinely better versus just competitive.

The right way to read this paper, then, is as a theory-driven attempt to make PPO-style optimization less ad hoc, backed by broad but still high-level empirical claims. If you care about stable policy updates and want a cleaner explanation for why bounded updates work, BRRL is worth tracking. If you need immediate production guidance, you will want the full paper’s experimental details before betting on it.

Bottom line

BRRL reframes PPO-style learning around an explicitly bounded policy ratio, then turns that idea into BPO for general RL and GBPO for LLM fine-tuning. The promise is a more principled route to stable updates, with theory that supports monotonic improvement and experiments that reportedly hold up across several domains.

What it does not yet give you, at least in the abstract, is the full implementation story or hard benchmark numbers. But for engineers who care about the gap between “works in practice” and “makes sense on paper,” this is exactly the kind of paper worth reading closely.
