Task boundaries can skew continual learning results

A new paper shows that how you split a stream into tasks can change continual learning results, even when the data, model, and budget stay fixed.

Streaming continual learning usually starts with a simple move: take one continuous data stream and chop it into tasks. This paper argues that step is not just housekeeping. It can change the evaluation regime itself, which means two valid splits of the same stream can lead to different benchmark conclusions. For engineers, that matters because reported results may reflect where the task boundaries fall, not only how good the learning algorithm is.

The paper is "Temporal Taskification in Streaming Continual Learning: A Source of Evaluation Instability." Its core message is practical: if you are comparing continual learning methods on streaming data, you need to treat temporal taskification as an evaluation variable, not a fixed preprocessing detail.

What problem this paper is trying to fix

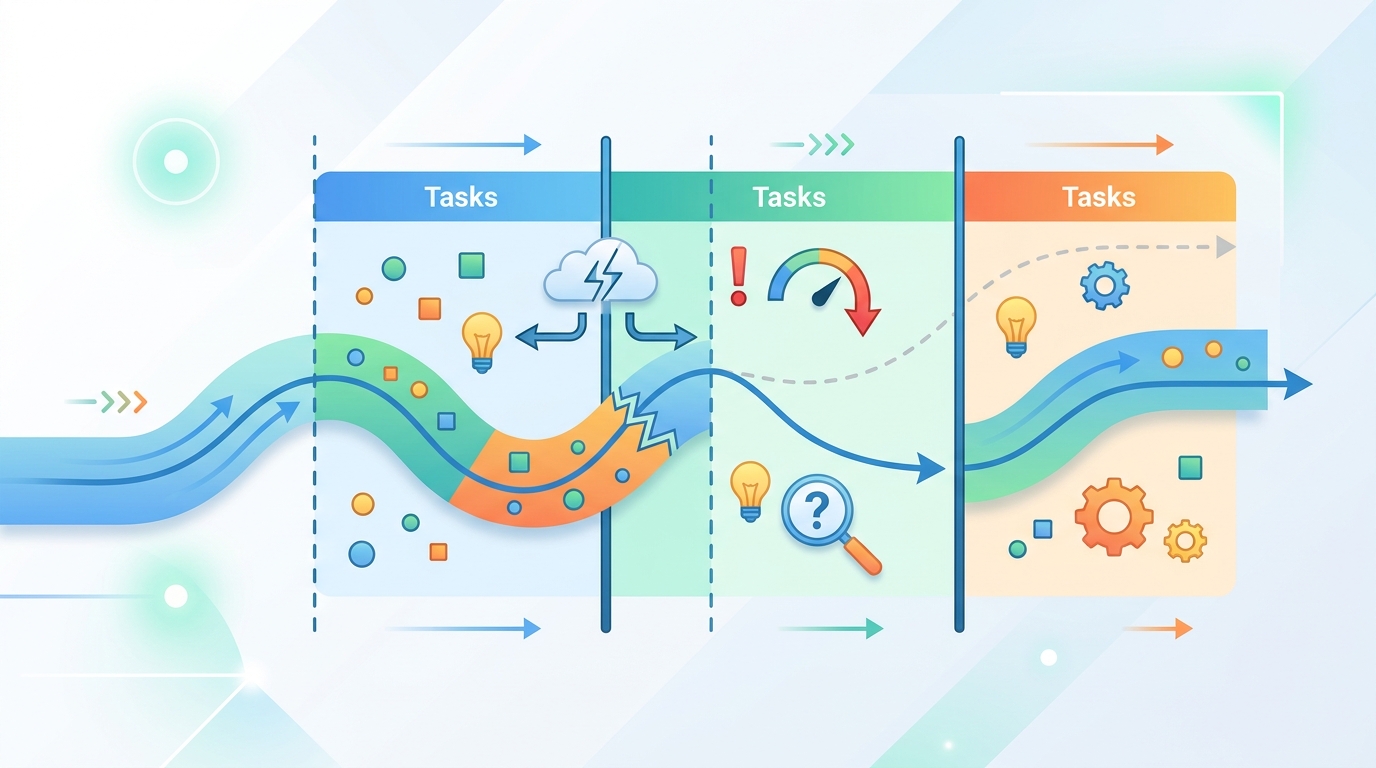

In streaming continual learning, a model sees data over time and is expected to adapt without losing earlier knowledge. But most benchmarks do not feed the model a raw stream end to end. Instead, they create discrete tasks by splitting time into chunks. That makes the problem easier to define and compare, but it also introduces a hidden choice: where exactly do those boundaries go?
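To make the "splitting time into chunks" step concrete, here is a minimal sketch of fixed-length temporal taskification. It is an illustration, not the paper's code: the `taskify` helper, the 90-day toy stream, and its start date are all assumptions made for this example. The 9-, 30-, and 44-day window lengths are the ones the paper compares.

```python
from datetime import date, timedelta


def taskify(timestamps, task_days, start=None):
    """Assign each timestamp a task index using fixed-length windows.

    Hypothetical helper: task k covers days [k * task_days, (k + 1) * task_days)
    counted from `start` (defaults to the earliest timestamp).
    """
    if start is None:
        start = min(timestamps)
    return [(t - start).days // task_days for t in timestamps]


# Toy stream (assumption): one observation per day for 90 days.
stream_days = [date(2024, 1, 1) + timedelta(days=i) for i in range(90)]

# Same stream, three different taskifications.
for task_days in (9, 30, 44):
    task_ids = taskify(stream_days, task_days)
    print(f"{task_days}-day windows -> {max(task_ids) + 1} tasks")
```

Nothing about the stream changes between the three runs. Only the window length does, and with it the number of tasks and which observations end up grouped together.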

The authors argue that this choice is not neutral. Different splits of the same stream can produce different continual learning regimes, which can then lead to different conclusions about which method works best. In other words, the benchmark may be unstable before the model even starts training.

This is especially relevant for developers who rely on continual learning evaluations to choose methods for production systems that face changing data over time. If task boundaries can shift the apparent performance of a method, then a single benchmark score may not be enough to judge robustness.

How the method works in plain English

To study the effect of task boundaries, the paper introduces a taskification-level framework. The goal is to measure the properties of the split itself, before any continual learning model is trained.

The framework is based on three ideas. First, it uses plasticity and stability profiles to describe how the taskification shapes the learning environment. Second, it defines a profile distance between taskifications, which captures how different two splits are at the structural level. Third, it introduces Boundary-Profile Sensitivity, or BPS, which measures how strongly small boundary changes alter the induced regime.

That last part is the most practical. BPS is meant to diagnose whether a taskification is fragile: if moving a boundary a little causes a large change in the profile, then the benchmark may be highly sensitive to arbitrary split choices.
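The intuition behind a boundary-sensitivity diagnostic can be sketched with a toy stand-in. The paper's actual profiles, profile distance, and BPS definition are not given in this summary, so everything below is an assumption: the "profile" is just the per-task mean of a 1-D stream, the distance is a mean absolute difference, and the sensitivity score averages that distance over small boundary shifts.

```python
from statistics import mean


def toy_profile(values, boundaries):
    """Per-task mean of the stream; `boundaries` are the cut indices."""
    edges = [0, *boundaries, len(values)]
    return [mean(values[a:b]) for a, b in zip(edges, edges[1:])]


def toy_distance(p, q):
    """Mean absolute difference between two equal-length profiles."""
    return mean(abs(a - b) for a, b in zip(p, q))


def toy_boundary_sensitivity(values, boundaries, shift=1):
    """Average profile change when every boundary moves by -shift and +shift.

    A stand-in for the idea behind BPS, NOT the paper's definition.
    """
    if min(boundaries) - shift <= 0 or max(boundaries) + shift >= len(values):
        raise ValueError("shifted boundaries must stay inside the stream")
    base = toy_profile(values, boundaries)
    distances = []
    for delta in (-shift, shift):
        moved = [b + delta for b in boundaries]
        distances.append(toy_distance(base, toy_profile(values, moved)))
    return mean(distances)


# Toy streams (assumptions): one flat, one with a level change at index 6.
flat_stream = [3.0] * 12
step_stream = [1.0] * 6 + [5.0] * 6

print(toy_boundary_sensitivity(flat_stream, [6]))  # no change when shifted
print(toy_boundary_sensitivity(step_stream, [6]))  # profile moves with the cut
```

On the flat stream, moving the cut changes nothing, so the score is zero. On the stream with a level change, even a one-step shift mixes the two regimes and the profile moves. Whatever the paper's exact formulation, that is the kind of fragility a boundary-sensitivity measure is meant to expose.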

Think of it this way: if the stream is the same but the cut points change, the benchmark may stop asking the same question. The paper’s framework tries to make that visible before you spend compute training models on top of it.

What the paper actually shows

The evaluation focuses on network traffic forecasting using CESNET-Timeseries24. The authors keep the stream, model, and training budget fixed, and vary only the temporal taskification. That design is important because it isolates the effect of boundary placement instead of mixing it with changes in data or optimization.

They test continual finetuning, Experience Replay, Elastic Weight Consolidation, and Learning without Forgetting. The paper compares 9-day, 30-day, and 44-day splits and reports substantial changes in forecasting error, forgetting, and backward transfer across those taskifications.
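For readers less familiar with forgetting and backward transfer, the sketch below computes both from a matrix of per-task errors. This summary does not say which exact definitions the paper uses, so the formulas here are common ones from the continual learning literature, adapted to an error metric where lower is better. The matrix values are invented for illustration and are not results from the paper.

```python
def backward_transfer(err):
    """Mean of (error right after learning task j) - (error on j at the end).

    err[i][j] is the error on task j after training through task i.
    Negative values mean earlier tasks got worse by the end of training.
    """
    last = len(err) - 1
    return sum(err[j][j] - err[last][j] for j in range(last)) / last


def forgetting(err):
    """Mean gap between final error on task j and the best error ever seen on j."""
    last = len(err) - 1
    gaps = []
    for j in range(last):
        best = min(err[i][j] for i in range(j, last))
        gaps.append(err[last][j] - best)
    return sum(gaps) / last


# Hypothetical error matrix for three tasks (made-up numbers).
errors = [
    [0.20, 0.50, 0.60],
    [0.30, 0.25, 0.55],
    [0.40, 0.35, 0.30],
]

print(f"backward transfer: {backward_transfer(errors):.2f}")
print(f"forgetting:        {forgetting(errors):.2f}")
```

Both metrics are built from per-task evaluations, so they depend directly on how tasks are defined. Change the boundaries and the rows and columns of this matrix refer to different slices of the stream.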

The abstract does not give exact benchmark numbers, so none are quoted here. What it does say clearly is that taskification alone materially affects continual learning evaluation.

The paper also finds that shorter taskifications produce noisier distribution-level patterns, larger structural distances, and higher BPS. In plain terms, smaller time chunks make the evaluation more sensitive to boundary perturbations. That suggests shorter taskifications may be more unstable as benchmark setups, at least in this dataset and setting.

Why developers should care

If you build systems that learn from streams, this paper is a reminder that benchmark design can shape your conclusions. A method that looks strong under one temporal split may look weaker under another, even when the underlying stream is unchanged. That matters when you are comparing replay-based methods, regularization-based methods, or plain continual finetuning.

For practitioners, the immediate takeaway is not to abandon task-based evaluation. It is to be more explicit about how tasks are formed and to test whether the results are stable across reasonable boundary choices. If your application depends on temporal segmentation, the segmentation itself may be part of the model selection problem.

- Keep the stream fixed when comparing methods.

- Vary task boundaries to test sensitivity, not just accuracy.

- Look at forgetting and backward transfer, not only forecasting error.

- Treat temporal taskification as part of the benchmark definition.
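One way to act on the checklist above is to run every method under several taskifications and check whether the ranking holds. The sketch below assumes you already have one summary error per method per taskification. The helper names are hypothetical, and the numbers are placeholders invented for this example, not results from the paper.

```python
def rank_methods(errors):
    """Order method names from lowest to highest error."""
    return sorted(errors, key=errors.get)


def rankings_by_taskification(results):
    """Map each taskification name to its method ranking."""
    return {name: rank_methods(errs) for name, errs in results.items()}


def is_ranking_stable(results):
    """True if every taskification yields the same method ordering."""
    rankings = list(rankings_by_taskification(results).values())
    return all(r == rankings[0] for r in rankings)


# Placeholder numbers (invented): same stream, same methods, three splits.
results = {
    "9-day": {"finetune": 0.42, "replay": 0.35, "ewc": 0.40, "lwf": 0.41},
    "30-day": {"finetune": 0.38, "replay": 0.36, "ewc": 0.34, "lwf": 0.39},
    "44-day": {"finetune": 0.37, "replay": 0.33, "ewc": 0.36, "lwf": 0.38},
}

for name, ranking in rankings_by_taskification(results).items():
    print(name, "->", ranking)
print("ranking stable across taskifications:", is_ranking_stable(results))
```

If the ordering flips between splits, as it does in this made-up example, a single-taskification leaderboard is not enough to pick a method.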

Limitations and open questions

The paper is focused on one domain: network traffic forecasting with CESNET-Timeseries24. That makes the results concrete, but it also means the findings may not transfer automatically to other continual learning settings, modalities, or datasets.

It also studies a specific set of methods: continual finetuning, Experience Replay, Elastic Weight Consolidation, and Learning without Forgetting. The abstract does not claim that every continual learning algorithm will respond the same way, and it does not provide a universal correction for the instability it identifies.

Another open question is how to standardize taskification in a way that is fair across datasets with different temporal structure. The paper makes a strong case that taskification should be treated as a first-class evaluation variable, but it does not claim to solve the broader benchmark-design problem.

Still, the message is clear and useful: if you evaluate streaming continual learning, the boundaries matter. The stream is not the whole benchmark. The way you slice it can change the story the benchmark tells.

Related Articles

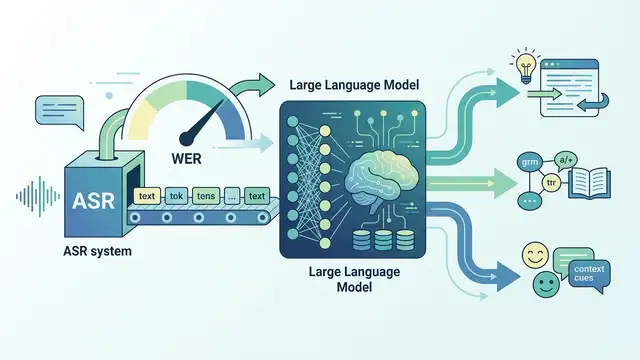

LLMs for ASR Evaluation: Beyond WER

Apr 24

Teaching Video Models to Understand Time

Apr 24

AVISE tests AI security with modular jailbreak evals

Apr 23

Parallel-SFT aims to make code RL transfer better

Apr 23

SpeechParaling-Bench tests speech models on nuance

Apr 23

Safe Continual RL for Changing Real-World Systems

Apr 22