Teaching Video Models to Understand Time

A self-supervised way to detect speed changes, estimate playback speed, and generate or sharpen videos at controlled timing.

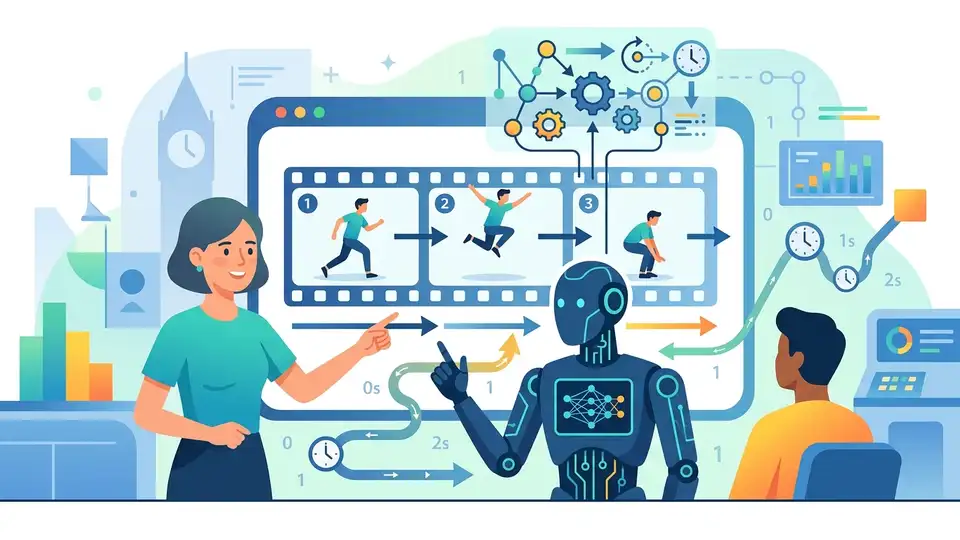

Video models usually focus on what is happening. This paper argues they also need to understand how fast it is happening. The authors of Seeing Fast and Slow: Learning the Flow of Time in Videos treat time as a learnable visual concept and build models that can detect speed changes, estimate playback speed, and generate or refine video at different temporal scales.

That matters because speed is not just a playback setting. In video understanding, a fast-forwarded clip and a slow-motion clip can change what cues are visible, what motions are detectable, and what downstream systems infer about events. For developers building video AI, the ability to reason about time opens up a new axis of control: not just frame content, but temporal behavior.

What problem this paper is trying to fix

The paper starts from a simple gap in modern computer vision: videos have been central to research, but the field has paid relatively little attention to perceiving and controlling the passage of time. In practical terms, most systems are trained to recognize objects, actions, or scenes, while treating frame rate and playback speed as background assumptions.

That assumption breaks down in real applications. A clip may be sped up, slowed down, compressed, or captured at a different temporal resolution than the model expects. If a system cannot tell whether time has been manipulated, it may misread motion patterns or miss details that only appear at certain speeds.

The paper frames this as both a perception problem and a generation problem. First, can a model tell whether a video has been sped up or slowed down? Second, can it generate or reconstruct video at different speeds in a controlled way? The authors argue that answering those questions requires learning time directly from video data, not bolting it on as an afterthought.

How the method works in plain English

The core idea is to use the natural structure already present in videos as supervision. Instead of requiring manual labels for speed changes, the authors exploit multimodal cues and temporal patterns in video to train models in a self-supervised manner. In other words, the model learns from the video itself by observing how motion and timing relate.

From that training signal, the system learns two basic skills: detecting when playback speed has changed, and estimating the speed of a clip. Those skills are then used as building blocks for more advanced temporal control tasks.

One important downstream use is dataset curation. The learned temporal reasoning models help the authors assemble what they describe as the largest slow-motion video dataset to date from noisy in-the-wild sources. The key point here is not that the dataset is perfectly clean, but that the model can sift usable slow-motion footage out of real-world data that was not originally curated for this purpose.

That dataset then supports two more model capabilities. The first is speed-conditioned video generation, where the model produces motion at a specified playback speed. The second is temporal super-resolution, which transforms low-FPS, blurry videos into higher-FPS sequences with finer temporal detail. The paper positions both as examples of temporal control rather than just spatial enhancement.

What the paper actually shows

The abstract does not provide benchmark numbers, accuracy scores, or ablation results, so there are no concrete metrics to report here. What it does show is a pipeline: learn temporal reasoning from unlabeled or weakly structured video, use that reasoning to curate a larger slow-motion dataset, and then train models for speed-aware generation and reconstruction.

That sequence is significant because it suggests time can be treated as a manipulable dimension in video learning. The authors are not only asking models to classify or summarize video; they are asking them to represent and control the flow of time itself.

The paper also makes a broader claim about capability. By learning temporal structure, models may support temporally controllable video generation, temporal forensics detection, and richer world models that understand how events unfold over time. Those are forward-looking implications, not fully demonstrated product features, but they show where the work is headed.

Why developers should care

If you build video tools, this paper points to a useful shift in design thinking. Temporal resolution is not just a data-preprocessing detail. It can be a first-class property of the model, just like resolution, style, or prompt conditioning.

That could matter in several workflows:

Video generation systems that need to respect a target speed or motion cadence.

Video enhancement pipelines that need to recover detail from low-FPS or blurry footage.

Forensics or moderation tools that need to detect whether footage has been sped up or slowed down.

Dataset builders that want to mine real-world video for slow-motion content without manual frame-by-frame labeling.

For engineers, the practical takeaway is that temporal reasoning can be learned from video structure itself. That reduces reliance on hand-labeled speed annotations and suggests a path toward more flexible video models that can adapt to different timing regimes.

Limitations and open questions

The abstract gives a strong direction, but it leaves several important questions open. We do not get benchmark numbers, so it is hard to compare the method against existing approaches or judge the size of the gains. The source also does not spell out how robust the learned speed detection is under challenging conditions such as heavy compression, extreme motion blur, or unusual camera motion.

Another open question is generalization. The paper says the models are trained in a self-supervised manner and used on noisy in-the-wild sources, but the abstract does not tell us how well the approach transfers across domains, video styles, or capture devices. That matters if you want to deploy this kind of temporal control outside curated research settings.

There is also a practical tension between “slow-motion footage” and “noisy in-the-wild sources.” The paper claims it can curate a large dataset from such sources, but the quality and consistency of those samples will determine how far the downstream models can go. In other words, the method sounds promising, but the real test is whether temporal control remains stable when the input data gets messy.

Still, the big idea is clear: video models do not have to treat time as a fixed assumption. They can learn it, estimate it, and manipulate it. For anyone building the next generation of video AI, that is a useful capability to keep on the radar.

In short, this paper pushes video understanding beyond “what is in the frame” toward “how the frame sequence behaves over time.” That is a meaningful expansion of the problem space, and it could become important anywhere video needs to be generated, repaired, or analyzed with temporal precision.

Related Articles

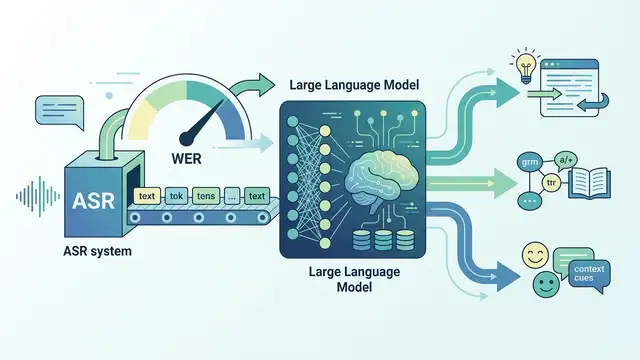

LLMs for ASR Evaluation: Beyond WER

Apr 24

Task boundaries can skew continual learning results

Apr 24

AVISE tests AI security with modular jailbreak evals

Apr 23

Parallel-SFT aims to make code RL transfer better

Apr 23

SpeechParaling-Bench tests speech models on nuance

Apr 23

Safe Continual RL for Changing Real-World Systems

Apr 22