Why OpenAI Must Stop Treating Violent Threats as a Threshold Test

OpenAI was wrong not to alert law enforcement when its systems flagged a shooter-linked ChatGPT account, and the company should adopt a lower, faster escalation standard for credible violence signals.

OpenAI should stop waiting for an “imminent and credible” threshold before alerting law enforcement when its systems surface violent intent.

The Tumbler Ridge case makes the flaw plain. According to CBS News, OpenAI had flagged the shooter’s ChatGPT account in 2025, banned it, and still decided not to notify police because the company judged it did not meet its referral standard. After the massacre, OpenAI CEO Sam Altman wrote to the community that he was “deeply sorry” the account was not reported. That apology matters, but the policy failure matters more. The company already had a signal strong enough to trigger automated abuse detection and human review. In a world where AI systems can surface planning, fixation, and rehearsal at scale, a narrow legal threshold is not a safety policy. It is a delay mechanism.

First argument

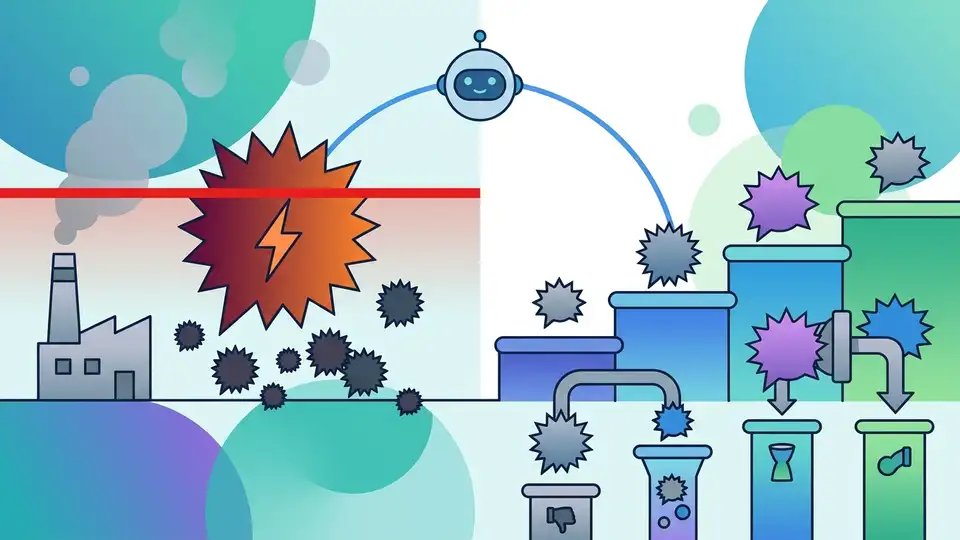

OpenAI’s current approach puts too much weight on certainty and too little on prevention. Once a model has flagged violent content and a human reviewer has confirmed enough concern to ban the account, the company is no longer dealing with ordinary misuse. It is dealing with a person whose behavior has crossed into a category that deserves outside scrutiny. Waiting for proof that harm is imminent assumes the company can reliably distinguish between idle fantasy and active preparation. It cannot. The point of a safety system is to intervene before the evidence becomes a body count.

The company’s own public posture shows the tension. In February, OpenAI said the account had been flagged by automated tools and human investigators, then banned for violating policy. That is not nothing. It is a concrete, internal finding that the account was dangerous enough to remove from the service. If a platform has enough confidence to cut off access, it has enough reason to alert authorities that a trained human should look at the same pattern. The failure here was not a lack of signal. It was a refusal to act on that signal outside the company walls.

Second argument

OpenAI’s process also creates an accountability gap that no AI company should want. When a platform holds the logs, the prompts, the risk scores, and the moderation notes, it becomes the only institution with a full view of the threat trajectory. That concentration of information makes the company a gatekeeper, not a neutral host. If it keeps the warning inside the building and later says the threshold was not met, the public is left to trust a private judgment that cannot be tested in real time. In matters of potential mass violence, that is not a defensible arrangement.

The Florida case sharpens the contrast. CBS News reported that after learning of the Florida incident, OpenAI identified a ChatGPT account believed to be associated with the suspect and proactively shared the information with law enforcement. So the company can move quickly when it chooses to. The issue, then, is not technical incapacity. It is policy inconsistency. A safety system that reports one violent suspect and withholds a comparable signal about another invites the worst kind of criticism: not that it failed under pressure, but that it applied its own standards unevenly. For a company operating at global scale, unevenness is a liability.

The counter-argument

The strongest defense of OpenAI’s caution is that over-reporting can do real harm. If every alarming prompt becomes a police matter, users lose privacy, moderators drown in false positives, and law enforcement wastes time chasing noise. A system that escalates too aggressively can chill legitimate speech, especially from users discussing fiction, journalism, mental health, or political anger. There is also a serious legal and ethical concern about handing over user data without a high bar. On this view, OpenAI’s “imminent and credible risk” standard exists to prevent a slippery slope from safety monitoring into mass surveillance.

That concern is real, and it should not be dismissed. But it does not justify the failure in this case. A ban plus a human review is already a filtered, high-signal event, not a raw keyword hit. The answer is not to report everything. The answer is to define a narrower set of escalations for accounts that show repeated violent fixation, planning language, or evidence of operational thinking, then require rapid review by a trained safety team with a documented path to law enforcement. OpenAI does not need a dragnet. It needs a sharper trigger. The company’s own actions in Florida prove it can do that when it decides the risk is serious enough.

What to do with this

If you are an engineer, product leader, or founder building AI systems that can surface harm, stop treating crisis response as a legal afterthought. Build escalation into the product from day one, with explicit criteria for violent intent, a fast human review path, and a default toward reporting when an account shows repeated, credible indicators of real-world danger. Do not hide behind “thresholds” set so high that they fail the first test that matters: whether the system helped prevent a foreseeable tragedy. Safety policy should be written for the worst day, not the average one.
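For builders who want to see what "explicit criteria" might look like in practice, here is a minimal Python sketch of the escalation logic argued for above. Everything in it is an assumption made for illustration: the `AccountSignals` fields, the `decide` function, and the `repeat_threshold` default are hypothetical names and values, not OpenAI's actual policy or any real moderation API. It only shows the shape of the rule: nothing is referred without a human review, and a confirmed, banned, or multi-indicator account defaults toward referral.

```python
from dataclasses import dataclass
from enum import Enum


class Action(Enum):
    NO_ACTION = "no_action"
    HUMAN_REVIEW = "human_review"
    REFER_TO_LAW_ENFORCEMENT = "refer_to_law_enforcement"


@dataclass(frozen=True)
class AccountSignals:
    # Hypothetical fields mirroring the indicators named in the essay.
    violent_flags: int        # number of separately flagged violent-content events
    planning_language: bool   # account shows planning language
    operational_detail: bool  # account shows evidence of operational thinking
    reviewer_confirmed: bool  # a trained human reviewer confirmed the concern
    banned: bool              # the account was banned for this behavior


def decide(signals: AccountSignals, repeat_threshold: int = 2) -> Action:
    """Return the escalation step for an account (illustrative thresholds only)."""
    # Count how many of the three indicator types are present.
    indicators = sum([
        signals.violent_flags >= repeat_threshold,  # repeated violent fixation
        signals.planning_language,
        signals.operational_detail,
    ])
    if indicators == 0:
        return Action.NO_ACTION
    # Nothing leaves the company without a human having looked at it first.
    if not signals.reviewer_confirmed:
        return Action.HUMAN_REVIEW
    # Default toward reporting: a confirmed concern plus a ban, or a confirmed
    # concern backed by several indicators, goes to law enforcement.
    if signals.banned or indicators >= 2:
        return Action.REFER_TO_LAW_ENFORCEMENT
    # Confirmed but weak: keep it in the review queue rather than dropping it.
    return Action.HUMAN_REVIEW
```

The design choice that matters is the last branch order. A ban that follows human review is treated as sufficient for referral on its own, which is the opposite of waiting for proof that harm is imminent. The exact cutoffs would need to be set by a trained safety team, not copied from a sketch.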
